The AI Detection Crisis: What Schools Owe Students They've Wrongly Accused

Vanderbilt University projected that running AI detection on 75,000 annual paper submissions at a 1% false positive rate would produce 750 wrongful academic misconduct charges every year. Not a risk to manage. A mathematical certainty. Their response was to disable detection entirely. MIT, Northwestern, and the University of Waterloo did the same. The students still sitting at institutions that haven't done that calculation deserve to know what the research shows, what the institutions that moved already knew, and what a wrongful accusation actually requires to fight.

- Vanderbilt, MIT, Northwestern, and the University of Waterloo have all disabled AI detection after calculating the institutional cost of systematic false positives.

- At a 1% false positive rate across the roughly 22.35 million essays written each year by 2.235 million first-year students (ten essays each), about 223,500 papers are wrongly flagged as AI-generated every year. This is not a software glitch. It is the predictable output of deploying probabilistic tools in high-stakes environments.

- Turnitin's own release notes state detection scores should not be used as the sole basis for adverse actions against a student. Most schools are using them as exactly that.

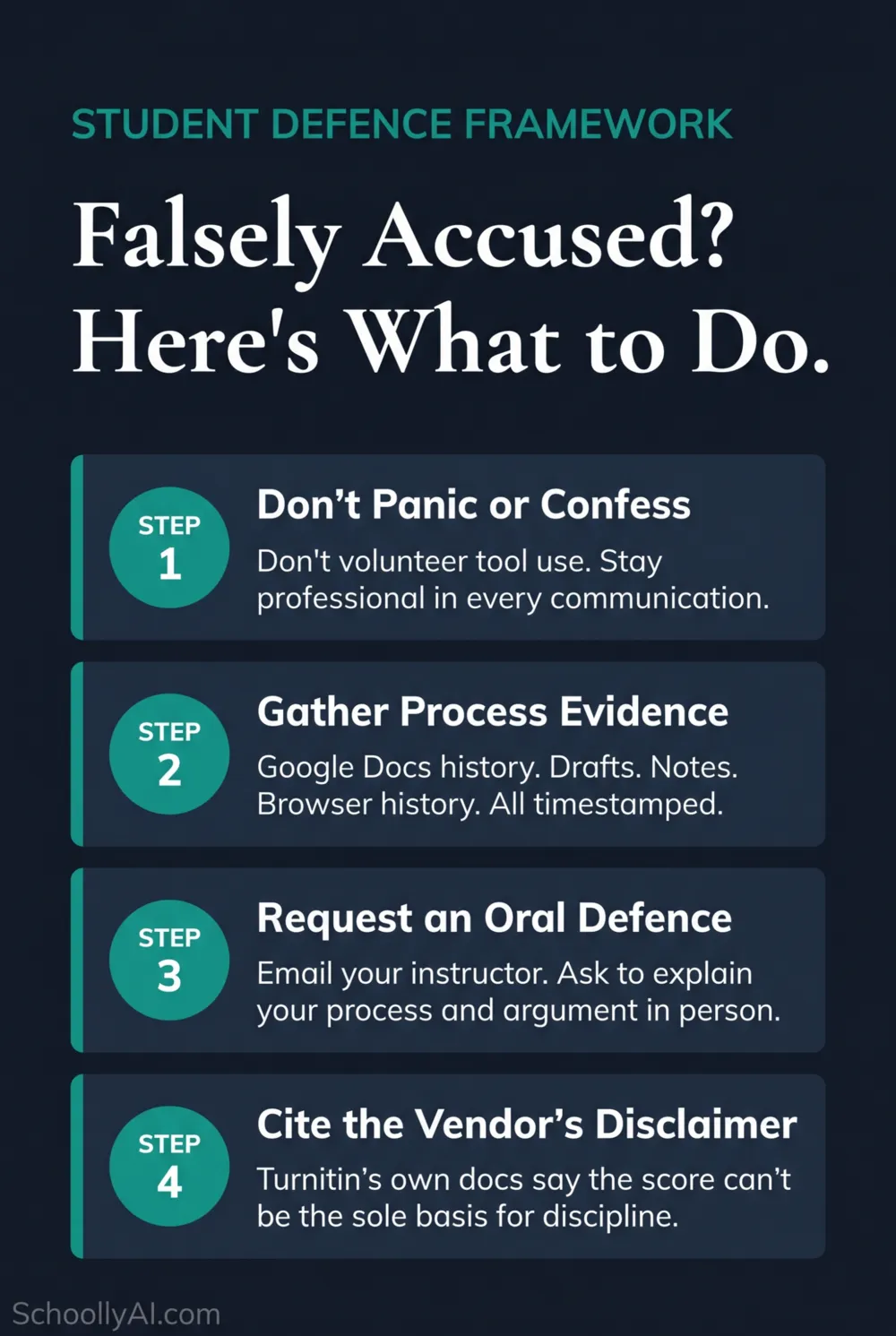

- Students at institutions still running detection can fight a false accusation. The defence is specific: process evidence, an oral defence, and the vendor's own disclaimer cited directly.

- The evidentiary bar for proving AI authorship cannot be met by a probabilistic score. Students who understand this are winning formal appeals at a documented 89% rate.

Process evidence is your strongest asset. A student who drafted, revised, and researched over several days leaves a trail that an AI-generated essay cannot produce.

The Accusation Landscape in 2026

The detection-first approach to AI academic integrity has produced a predictable outcome: mass false accusations at a scale schools were not prepared for. Vanderbilt University ran the numbers on its own submission volume. At a 1% false positive rate applied to 75,000 annual papers, the tool would produce, on average, about 750 wrongful misconduct charges per year. That calculation is what led Vanderbilt to disable AI detection entirely. MIT, Northwestern, and the University of Waterloo followed.

For students at institutions that haven't made that calculation, the situation is different. Over 60% of universities still use formal AI detection in disciplinary workflows, according to Thesify.ai's 2026 higher education guide. That means the burden of disproving an algorithmic accusation falls on the student, not the institution.

The burden has quietly reversed. Historically, academic institutions had to prove a student cheated. Today, a detection score functions as a de facto charge, and the student is expected to prove a negative. This is worth knowing clearly before you respond to anything.

RAND Corporation research from 2025 found that 50% of students actively worry they will be falsely accused of AI use. That anxiety is not irrational. The false positive rate on authentic human writing runs between 10% and 20% in independent testing, according to research collated by Structural Learning in 2026. These tools flag formal student writing for the same reason they flag AI text: both score low on perplexity and burstiness, which is why these tools flag honest students as consistently as they flag AI output.
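To make "burstiness" concrete, here is a minimal sketch, assuming one common informal reading of the term: how much sentence length varies across a passage. Commercial detectors do not publish their exact formulas, so the `burstiness` function and the sample passages below are hypothetical illustrations, not a reproduction of any vendor's method.

```python
import re
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Word count per sentence, using a naive split on ., ! and ?."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Toy metric (an assumption): std dev of sentence length / mean length."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


# Hypothetical sample passages, written only for this illustration.
uniform = (
    "The study had three parts. The method was quite clear. "
    "The results were very strong."
)
varied = (
    "I froze. Then, after rereading the prompt twice and pacing around "
    "the library, I finally saw the argument. It worked."
)

print(f"uniform passage: {burstiness(uniform):.2f}")
print(f"varied passage:  {burstiness(varied):.2f}")
```

Under this toy definition, the evenly paced passage scores lower than the uneven one. Careful, formal student prose tends to be evenly paced in the same way, which is one plausible reason it can resemble machine output to a statistical tool.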

The Scale of the Problem

| Measure | Statistic | Source |
|---|---|---|
| Students worried about false AI accusation | 50% | RAND Corporation, 2025 |
| Falsely flagged papers at 1% false positive rate across 22.35M essays (2.235M first-year students, ten essays each) | 223,500 | NIU CITL / Bloomberg, 2024 |
| Vanderbilt projected wrongful charges at 1% false positive rate | 750 per year | Vanderbilt University calculation, 2025 |
| Turnitin sentence-level false positive rate (vendor admission) | ~4% | Turnitin Official Blog, 2024 |
| Probability of at least one false flag over 100 assignments at 1% rate | 63% | Academic integrity research analysis, 2024 |
| Institutions using AI detection in disciplinary workflows | 60%+ | Thesify.ai Higher Ed Guide, 2026 |

That 63% figure is worth sitting with. Even if a detector were highly accurate, a student submitting 100 assignments over their college career faces a better-than-coin-flip chance of being wrongly flagged at least once. That is not a software glitch. That is basic probability applied to a high-volume, high-stakes system.
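The arithmetic behind the 750 and 63% figures can be checked in a few lines. This is a minimal sketch, assuming every submission is human-written and that each one is flagged independently at the same rate; the function names are illustrative, not taken from any cited source.

```python
def expected_false_flags(submissions: int, false_positive_rate: float) -> float:
    """Average number of honest papers flagged: volume times rate."""
    return submissions * false_positive_rate


def prob_at_least_one_false_flag(assignments: int, false_positive_rate: float) -> float:
    """Chance that one honest student is flagged at least once.

    Assumes independent flags: 1 - (1 - rate) ** assignments.
    """
    return 1 - (1 - false_positive_rate) ** assignments


# Vanderbilt's scenario: 75,000 papers a year at a 1% false positive rate.
print(round(expected_false_flags(75_000, 0.01)))           # 750

# One student, 100 assignments, same 1% rate.
print(f"{prob_at_least_one_false_flag(100, 0.01):.1%}")    # 63.4%
```

The second function is why a low per-paper rate still adds up: a 99% chance of being cleared on each paper compounds to about a 36.6% chance of being cleared on all 100.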

Step 1: Don't Panic or Confess to Anything Minor

The impulse when accused is to soften the confrontation by acknowledging something small. "I just used Grammarly to fix my grammar." "I ran it through spell-check." "I asked ChatGPT to explain one concept and then wrote about it myself." All of these are reasonable, widely used practices. They are also how a number of students have walked into formal misconduct charges.

Administrators unfamiliar with how AI tools actually work, or operating under pressure to take a detection score seriously, frequently treat any admission of any AI-adjacent tool use as a blanket confession to AI-generated writing. Unless your institution's policy explicitly permits Grammarly, don't lead with it. If asked directly, give a direct and honest answer. Don't volunteer it as a concession.

Stay calm in every written and verbal communication. Accusatory emails hurt your case. Professional, factual responses that request documentation and a formal hearing help it. The tone you take in your first reply sets the register for everything that follows.

One specific trap worth naming: some institutions hire technical "experts" who retroactively engineer ChatGPT prompts designed to produce essays that resemble the accused student's work, then use the similarity as after-the-fact evidence. This fundamentally misunderstands how generative AI works. An LLM can be coerced into replicating almost any text if prompted specifically enough. It proves nothing about who wrote the original.

Step 2: Gather Process Evidence Immediately

A linguistic argument will not clear you. Your writing style is not evidence of authorship. What clears a false AI accusation is documented proof of cognitive effort over time. That means process evidence, and gathering it fast matters because some of it has a short retrieval window.

Start with your Google Doc. Go to File, then Version History, then See Version History. Every edit, every revision, every paragraph added or deleted is timestamped. Export this or screenshot it thoroughly. This is your strongest single piece of evidence. It shows a drafting process unfolding over hours or days. AI doesn't revise in Google Docs over three days.

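Then work through this audit before your first meeting, gathering as many of these items as you can:

- Exported Google Docs version history showing all edits and timestamps
- Successive rough drafts
- Handwritten notes or outlines
- Annotated bibliographies with your own notes
- Browser histories showing research activity on the dates you worked on the assignment

The more you have before your first meeting, the stronger your position.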

The goal is to document a cognitive process that unfolded over time. AI generates an essay in seconds. A student who worked on a paper over several days leaves a different kind of trail. Your job is to make that trail visible. The step-by-step defence guide covers every stage from the first email through to a formal appeal.
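If your drafts are saved as separate files, a short script can turn them into a dated index to hand over alongside your version history. This is a minimal sketch, assuming the drafts sit in one folder; the `build_draft_timeline` helper and the `essay_drafts` folder name are hypothetical. File modification times can change when files are copied or synced, so treat the output as a supporting index, not as proof on its own.

```python
from datetime import datetime, timezone
from pathlib import Path


def build_draft_timeline(folder) -> list[tuple[str, str]]:
    """Return (last-modified time in UTC, filename) pairs, oldest first."""
    files = [p for p in Path(folder).iterdir() if p.is_file()]
    # Sort on the raw modification time, then format it for reading.
    files.sort(key=lambda p: p.stat().st_mtime)
    timeline = []
    for path in files:
        modified = datetime.fromtimestamp(path.stat().st_mtime, tz=timezone.utc)
        timeline.append((modified.strftime("%Y-%m-%d %H:%M UTC"), path.name))
    return timeline


# "essay_drafts" is a placeholder; point this at your own drafts folder.
drafts = Path("essay_drafts")
if drafts.is_dir():
    for stamp, name in build_draft_timeline(drafts):
        print(f"{stamp}  {name}")
```

Run it before moving or renaming anything, and keep the original files untouched so the timestamps remain as they were.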

Step 3: Request an Oral Defence

An oral defence is the most effective tool available to a falsely accused student. It is also underused because most students don't know they can ask for one.

Send a formal email to your instructor or academic integrity office. Keep it simple: you wrote the work yourself, you are prepared to demonstrate this, and you are formally requesting a meeting to explain your writing process and defend your arguments verbally. Don't be confrontational. Be factual and direct.

In that meeting, be ready for four things. First, walking through the structure of your argument from memory and explaining why you built it that way. Second, describing what was hardest about the assignment. Generative AI removes cognitive friction. A student who genuinely wrote a paper remembers where the friction was. A student who prompted AI cannot reconstruct an experience that didn't happen. Third, pointing to a complex sentence and explaining it in simpler words. Fourth, writing a continuation paragraph in front of your instructor. The live comparison between your in-session writing and your submitted work is strong evidence that no detection algorithm can produce.

This is also the approach that wins formal appeals. A video from Dr. Padilla, highly recommended viewing before your meeting, covers where the line between helpful use and cheating actually falls and the documentation habits that help students survive exactly this kind of accusation, including the Green, Yellow, and Red zone framework for understanding what kind of AI use crosses an institutional line.

Step 4: Cite the Vendor's Own Disclaimer

This is the move most students and parents don't know to make, and it is often the most effective one in a formal appeal.

Turnitin's 2026 release notes state explicitly that the AI detection tool "should not be used as the sole basis for adverse actions against a student" because it misidentifies both human and AI-generated text. Print that page. Highlight that sentence. Bring it to your meeting.

You are not attacking the technology. You are citing the company's own published limitations and pointing out that the school's process has not followed the vendor's own stated guidelines. That shifts the conversation from "did you cheat" to "has the school followed its own process correctly." That is significantly stronger ground.

Turnitin has also updated its interface to suppress numerical scores below 20% behind an asterisk, specifically to reduce false positive escalations. That design decision is itself an acknowledgment of the false positive problem. It belongs in your appeal documentation.

The same logic applies to GPTZero, Copyleaks, and other tools. Every major AI detection platform publishes caveats about false positives. Find the specific documentation for the tool used in your case, locate its published limitations, and bring that to your meeting printed and highlighted.

The four steps work together. Process evidence establishes the timeline. The oral defence establishes comprehension. The disclaimer establishes that the score alone is not sufficient proof.

What Not to Do

Don't run your paper through an "AI humanizer" tool to reduce the detection score after the fact. This makes your situation worse in two ways. First, the tool changes the text, which means the version you submit as "original" no longer matches your drafts and version history. Second, it introduces phrasing you didn't write, which can be identified in a close reading by an instructor who knows your work.

Don't threaten legal action in your first communication. Even when the accusation is genuinely wrong, leading with legal threats puts the institution into defensive mode and makes a fair resolution harder to reach. Escalate to legal advice if the formal process fails, but start with the appeal process.

Don't accept a resolution that includes any admission of guilt, even minor guilt buried in phrasing like "the matter is now resolved." Admissions stay on academic records and can surface in graduate school applications, professional licensing processes, or scholarship reviews. Read everything before signing. Most of the ambiguity that puts students in this position traces back to a school with no written AI policy in the first place.

Don't make the detector's unreliability your only argument. "The detector is wrong" without process evidence to support that claim is hard to sustain. The evidence is what wins. The disclaimer is what reinforces it.

Why Students Are Winning AI Cheating Appeals

The pattern of students winning formal appeals is documented and growing. The core reason is simple: the evidentiary bar for proving AI authorship cannot be met by a probabilistic score alone, and institutions are beginning to recognize that.

Turnitin's own disclaimer is regularly cited in successful appeals. Google Docs version histories have been accepted as primary evidence of human authorship in formal hearings. Oral defences have cleared students who faced 90%+ detection scores. The tools' own limitations are the argument.

A video from educator Vaughn Littlejohns is worth watching if you're a student trying to understand the institutional logic at play, or a parent trying to figure out where to push. He covers why AI policies fail at the institutional level, why universities are abandoning AI detection, what transparency-first approaches actually look like from the inside, and what that shift means for students currently navigating the process at schools that haven't made that call yet.

The administrative burden of appeals is also accelerating institutional change. When appeal rates climb, staff hours rise, legal exposure grows, and the institutional relationship with students deteriorates. Commercial defence coaching services have documented students winning formal appeals at an 89% rate in 2026. Schools that have done that calculation move away from detection-first processes: Vanderbilt, with its 750-wrongful-charges projection, along with MIT, Northwestern, and the University of Waterloo, has disabled detection entirely, each citing false positive rates, legal exposure, and the impossibility of meeting a scientific burden of proof with a probabilistic score. The schools that haven't are the ones where your appeal process matters most right now.

For Teachers Reading This

If you arrived at this post from the teacher side of the desk, the research position is clear. Dr. Sarah Elaine Eaton, Werklund Research Professor at the University of Calgary, put it plainly in 2026: "We need to stop demonizing students. Addressing academic integrity requires a multistakeholder approach. We're all in this together. Students have responsibilities, but so do educators and administrators."

Josh Moon, Educational Technology Specialist at Kalamazoo College, made the practical case in 2025: "Not all students are trying to pull a fast one. Trust is really vital for effective learning and for an effective classroom. AI ethics is evolving, and teachers shouldn't submit to a mindset of surveillance, especially when AI checkers are unreliable and can lead to false accusations."

When a student denies AI use and evidence is inconclusive, the most reliable move is a conversation targeting the cognitive experience of writing. A student who wrote the paper can describe where the friction was. A student who prompted AI cannot reconstruct an experience that didn't happen. Verifying authorship through that conversation is more defensible than any second detection pass. Schools still running detection-first discipline should also reckon with why punitive policies fail at the institutional level.

Frequently Asked Questions

What should I do first if I'm falsely accused of using AI?

Stay calm and do not confess to using any AI-adjacent tool as a concession. Immediately gather process evidence including Google Docs version history, timestamped drafts, handwritten notes, and browser search histories. Then formally request an oral defence where you explain your writing process directly to your instructor.

Can a school discipline me on the basis of a Turnitin score alone?

Turnitin's own documentation states the tool should not be used as the sole basis for adverse actions against a student. Using a detection score alone to suspend or expel a student contradicts the vendor's explicit published warning. Students should cite this disclaimer directly in their formal appeal.

What counts as process evidence?

Process evidence includes exported Google Docs version history showing all edits and timestamps, successive rough drafts, handwritten notes or outlines, annotated bibliographies with your own notes, and browser histories showing research activity on the dates you worked on the assignment.

Does using Grammarly count as using AI?

It depends on your institution's specific policy. Do not volunteer that you used Grammarly or any editing tool unless directly asked. Some administrators treat any AI-assisted tool as a blanket admission of AI-generated writing, even when the thinking and writing were entirely your own.

How do I request an oral defence?

Email your instructor or academic integrity office. State clearly that you wrote the work yourself, that you are prepared to demonstrate this in person, and formally request an opportunity to explain your writing process and defend your arguments. Keep the tone professional rather than confrontational.

Why are students winning AI cheating appeals?

Students are winning because the evidentiary bar for proving AI use cannot be met by a probabilistic detection score alone. Turnitin's own disclaimer language, Google Docs version histories, and oral defences have repeatedly been accepted as sufficient counter-evidence in formal appeal processes at universities including Vanderbilt and MIT.

Sources

- RAND Corporation. Student Use of AI for Homework Rises as Concerns Grow About Critical Thinking Skills. March 2026. rand.org

- NIU CITL / Bloomberg. AI Detectors: An Ethical Minefield. December 2024. citl.news.niu.edu

- Turnitin. AI Writing Detection Model. Release Notes. 2026. guides.turnitin.com

- Turnitin Official Blog. AI Detection Accuracy. 2024. turnitin.com

- Structural Learning. AI and Academic Integrity. 2026. structural-learning.com

- Thesify.ai. How Professors Detect AI Writing: 2026 Guide. 2026. thesify.ai

- Eaton, Sarah Elaine. Academic Integrity in the Age of Artificial Intelligence. AUP, May 2025. aup.edu

- Moon, Josh, quoted in NIU CITL. AI Detectors: An Ethical Minefield. December 2024. citl.news.niu.edu

- Fowler, Geoffrey A. Got accused of AI cheating? Here's how to fight back. The Washington Post. 2025. washingtonpost.com

- Frontiers in Education. Addressing student use of generative AI in schools and universities through academic integrity reporting. 2025. frontiersin.org

- hub.paper-checker.com. Oral Defense and Viva Preparation: Proving Authorship When Accused of AI Use. 2026. hub.paper-checker.com
