Building an AI Academic Integrity Policy (Without the Fear)

Nearly 50% of teachers and principals report that their school or district has no AI policy at all, according to EdWeek Research Center data from 2025. Only 5 U.S. states have enacted comprehensive K-12 AI requirements. Most schools are making it up as they go, class by class. The policy vacuum is not a neutral state. It is producing inconsistent enforcement, successful student appeals, and teacher exhaustion. The students bearing the cost of that inconsistency are the ones wrongly charged with misconduct under policies that were never clear to begin with. A defined policy grounded in how AI actually works and what students actually need is not a concession to AI use. It is the only approach that produces defensible decisions.

- Nearly 50% of teachers and principals report that their school or district has no AI policy, according to EdWeek Research Center data from 2025. Individual teachers are left to interpret situations without institutional backing.

- The Postplagiarism framework, developed by Dr. Sarah Elaine Eaton at the University of Calgary, provides the philosophical foundation: hybrid human-AI writing is normal, but responsibility for the output cannot be relinquished.

- OECD and UNESCO frameworks require that policies teach students to engage with and critically evaluate AI outputs, not just restrict access to them.

- K-12 policies require different considerations than university templates: parental communication, age-appropriate language, FERPA/COPPA data privacy for minors, and developmental appropriateness by grade level.

- Detection software should not be the enforcement mechanism. Process documentation, supervised baselines, and oral defence protocols are more reliable and legally defensible.

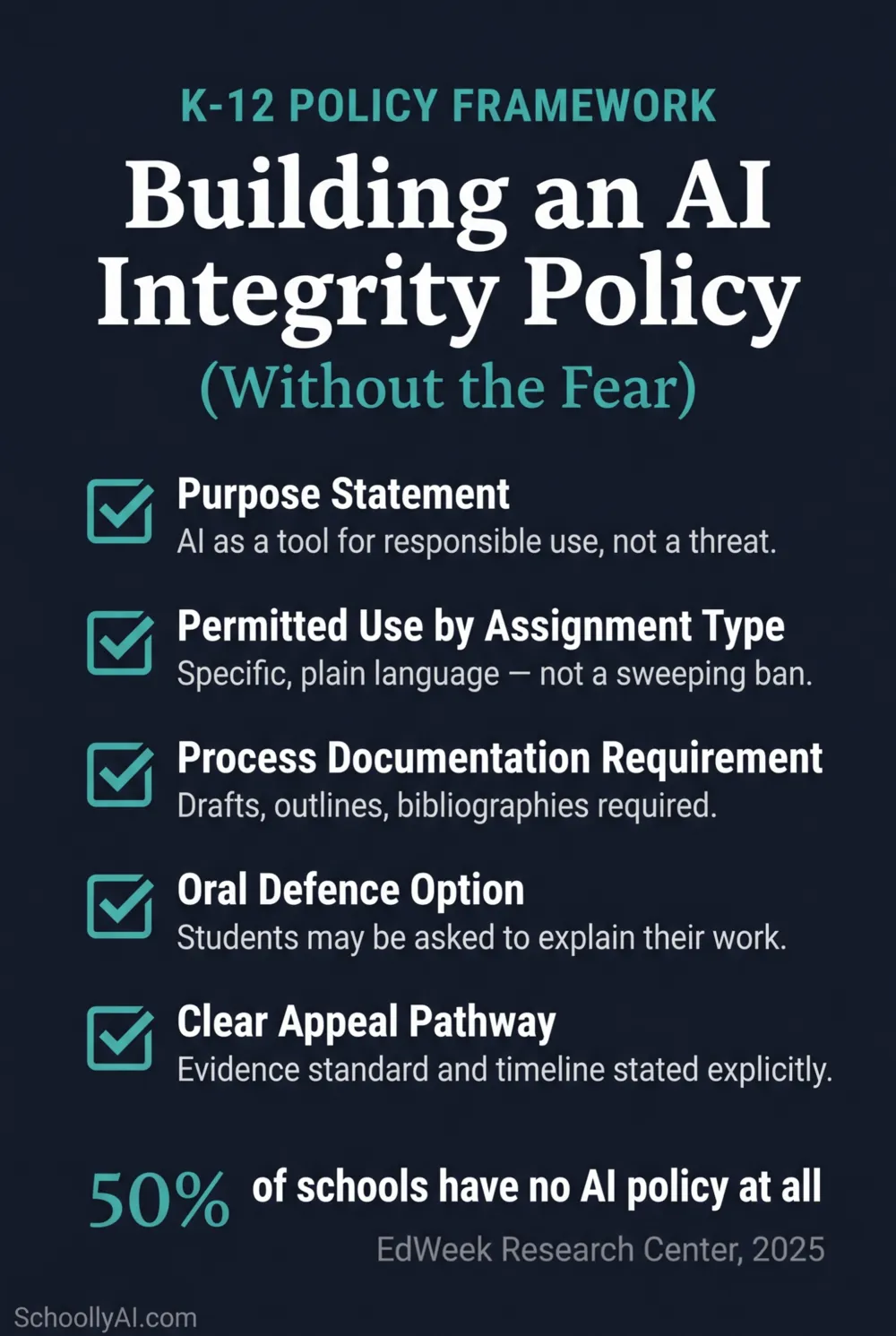

A defensible K-12 AI policy has five components: a philosophical foundation, use definitions by assignment type, process documentation requirements, an oral defence option, and a clear appeal pathway.

The Policy Vacuum and What It Actually Costs

When nearly half of educators report that their school or district has no AI policy, what fills that gap is individual teacher judgment, student confusion, and after-the-fact conflict. Teachers make inconsistent calls on what counts as acceptable AI use. Students interpret silence as permission or as a minefield, depending on their risk tolerance. Parents escalate when a grade is questioned. Administrators respond to individual situations without an institutional framework to cite.

The cost shows up in what students report. Only 22% of students say they received clear guidance on their school's specific AI policy, according to CDT research from 2025. That leaves 78% without it. A policy that most students cannot describe is not functioning as a policy. It is functioning as an accusation waiting to happen.

The state-level picture is equally thin. Only 5 U.S. states have enacted comprehensive K-12 AI policies or requirements, according to Education Week's policy tracker from 2026. That leaves the vast majority of school districts operating on either nothing or ad-hoc guidance that was never designed to hold up to a formal challenge.

Teachers who use AI weekly save an average of 5.9 hours per week on administrative tasks, according to Engageli's 2026 statistics. That figure shows what's possible when AI is integrated thoughtfully. It also shows how much is being left on the table by schools that have responded to AI with prohibition rather than structure.

The Fear That Stalls Good Policy

The specific fear is this: if a school writes a policy that permits any AI use at all, it will be read as endorsing cheating. That fear is understandable. It is also wrong, and it produces the worse outcome.

A policy that says "students may use AI as a brainstorming tool with citation required" is not endorsing cheating. It is defining a standard and creating an enforcement mechanism. A policy that says nothing leaves teachers adjudicating cases without authority, students guessing about rules, and institutions losing appeals against charges they can't defend.

Fifty-six percent of students and educators believe their institution is completely unprepared to manage the impact of generative tools, according to Coursera survey data collated by Engageli in 2026. The unprepared institutions are disproportionately the ones that responded to AI by avoiding the policy conversation rather than having it.

Eighty percent of students globally report that AI positively supported their learning when used as a supplement, according to Coursera's higher education data from 2026. The research does not support treating AI as inherently incompatible with learning. It supports treating AI as a tool that requires explicit guidance about how to use it well.

The Postplagiarism Framework: The Right Foundation

The most intellectually honest foundation for an AI integrity policy in 2026 is Dr. Sarah Elaine Eaton's Postplagiarism framework, developed at the University of Calgary and published in 2025. It names what is actually true about the current situation and builds policy from that reality rather than from a pretense that AI can be excluded from the writing process.

Eaton's six tenets are worth understanding in full before writing any policy language:

1. Hybrid human-AI writing is normal.

The blending of human thought and AI-assisted writing is already standard practice in most professional contexts. Policy must start from this reality.

2. Human creativity is enhanced, not threatened.

AI tools, used well, extend what a human thinker can produce. The threat is not to creativity. It is the substitution of AI judgment for human judgment.

3. Language barriers can be reduced.

AI tools reduce the disadvantage faced by non-native English speakers when expressing ideas they genuinely hold. Policies that ignore this dimension disadvantage ESL learners twice.

4. Humans can relinquish mechanical control but not ethical responsibility.

A student who uses AI to draft a paragraph still owns the accuracy, bias, and truthfulness of what that paragraph claims. This is the core of academic responsibility in an AI context.

5. Attribution remains required.

AI tools used in academic work require citation in the same way that any research tool does. The standard for what counts as attribution is specific to each assignment and must be stated explicitly.

6. Historical definitions of plagiarism no longer apply.

Policies built on a pre-AI definition of plagiarism are already outdated. The relevant question is no longer who wrote the words. It is who is responsible for the claims.

These six tenets shift institutional focus from forensic detection to metacognitive accountability. They define what students are responsible for rather than what tools they are forbidden from using. That is a more defensible position legally, a more honest position intellectually, and a more useful one educationally.

The OECD and UNESCO Layer

The Postplagiarism framework addresses the philosophical question. OECD and UNESCO address the educational standards question.

The OECD's AI Literacy Framework, published in 2024 and updated in 2026, specifies that educational policies must deliberately teach students to engage with, create using, manage, and critically evaluate AI systems. The framework treats AI literacy as a core educational outcome comparable to reading and numeracy. A policy that only restricts AI use without building AI literacy is failing this standard.

UNESCO's global guidelines add the data rights dimension: policies must protect student data privacy, address algorithmic bias, and ensure that AI tools supplement rather than displace the teacher-student relationship. For K-12 in particular, this means checking that any AI tools introduced in the classroom comply with FERPA in the U.S. and PIPEDA in Canada for student data handling, and that the tools are not feeding student work into external training datasets.

The practical implication is that a complete AI policy in 2026 addresses three things: what students are permitted to do, what they are responsible for regardless of tools used, and what the school is doing to build the literacy that makes responsible use possible.

A Working Policy Structure for K-12

The structure draws on Eaton's framework, OECD/UNESCO standards, and the policy elements that have survived formal appeals at institutions including Vanderbilt and Cornell. It is not a template to copy verbatim. It is a structural checklist of what a defensible K-12 AI policy contains: a philosophical foundation, use definitions by assignment type, process documentation requirements, an oral defence option, and a clear appeal pathway.
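For a team that tracks its draft in a shared document or spreadsheet, the five components can be treated as a simple completeness check. The Python sketch below is illustrative only: the component names come from this article, while the class, the function, and the example draft are hypothetical and not part of any published framework.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# The five component names come from the article. Every other name in this
# sketch (PolicyDraft, missing_components, the example draft) is hypothetical.
REQUIRED_COMPONENTS = (
    "philosophical_foundation",
    "use_definitions_by_assignment_type",
    "process_documentation_requirements",
    "oral_defence_option",
    "appeal_pathway",
)


@dataclass
class PolicyDraft:
    """A draft policy stored as component name -> policy text."""
    title: str
    sections: Dict[str, str] = field(default_factory=dict)


def missing_components(draft: PolicyDraft) -> List[str]:
    """Return required components that are absent or blank, in checklist order."""
    return [
        name for name in REQUIRED_COMPONENTS
        if not draft.sections.get(name, "").strip()
    ]


draft = PolicyDraft(
    title="Example School AI Policy (hypothetical)",
    sections={
        "philosophical_foundation": "Students remain responsible for the accuracy of their work.",
        "use_definitions_by_assignment_type": "Brainstorming with AI is permitted with citation.",
        "appeal_pathway": "   ",  # whitespace only, so it counts as missing
    },
)

print(missing_components(draft))
```

A check like this only confirms that each component has some text. Whether that text is specific enough to hold up under appeal still needs human review.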

K-12 Specific Considerations That University Templates Miss

Searches for "how to write an AI academic integrity policy" mostly return university teaching centre templates. These are structurally unsuitable for K-12 application. Four areas require K-12-specific treatment.

Parental communication. K-12 schools operate in a legal and relational context where parents are stakeholders in their child's education in ways that university students' families are not. An AI policy that parents haven't seen and can't interpret will generate escalation at the first misconduct conversation. A simple one-page parent summary of what the policy permits, requires, and prohibits is worth including in any school-wide rollout.

Developmental appropriateness by grade level. A policy appropriate for a Grade 11 research paper is not appropriate for a Grade 5 writing assignment. Policies that apply a single standard across all grade levels either over-restrict younger learners or under-protect the integrity of high-stakes senior work. The policy should specify by grade band or by assignment type what AI use looks like at that level.

Data privacy for minors. FERPA in the U.S. and PIPEDA in Canada impose specific protections on student data. AI tools that process student work in the cloud may be feeding that data into external training datasets, depending on the tool's terms of service. A K-12 AI policy should specify which tools are approved for classroom use and what vetting process established that approval. This is not a theoretical concern. It is a documented risk flagged by CDT in their 2025 report.

Age-appropriate language in the policy document itself. A policy written in legal or academic register will not be read or understood by Grade 7 students. The student-facing version of the policy should be written at a reading level appropriate for the youngest students covered by it, with the full administrative version available separately.

What Not to Put in a Policy

AI detection as the enforcement mechanism. Detection software should not be referenced in policy as the means by which violations are identified. The false positive rates are too high, the ESL and neurodivergent bias is documented, and the tools are easily bypassed. A policy that cites detection scores as evidence of misconduct hands a ready-made ground for appeal to every student who knows about the vendors' own disclaimers.

Sweeping bans with vague language. "Students may not use AI tools for academic work" is not a policy. It is a statement that every student will interpret differently, that teachers will enforce inconsistently, and that will not survive a formal appeal because it does not give students clear notice of what is prohibited. Specificity is what makes a policy enforceable.

Zero-tolerance language without an appeal pathway. A zero-tolerance policy without a defined appeal process is a procedural justice problem waiting to happen. Every formal policy that results in a grade penalty or a misconduct record must have a stated mechanism for contesting that outcome. Policies that omit appeal pathways are among the most vulnerable to successful challenge.

Policy language that treats AI as static. The tools available in 2026 are not the tools that will exist in 2027. A policy built around specific tool names or specific technical capabilities will be outdated faster than the review cycle that updates it. Policy language should address the category of use and the standard of responsibility, not the specific tool.

Punitive policy elements fail for well-documented reasons: bans drive AI use underground rather than reducing it, and the reported 89% student appeal success rate is the direct result of institutions trying to prove AI authorship with detection tools that cannot meet that evidentiary standard.

Frequently Asked Questions

How do you write an AI academic integrity policy for a school?

Start with a philosophical foundation that treats AI as a tool requiring responsible use rather than a threat. Define permitted use by assignment type in plain language. Build process documentation requirements into the assignment structure. Include an oral defence option. Define the appeal pathway clearly. Ground everything in OECD and UNESCO frameworks for age-appropriate AI literacy.

What is the Postplagiarism framework?

Postplagiarism is a framework developed by Dr. Sarah Elaine Eaton at the University of Calgary. Its core tenet is that hybrid human-AI writing is normal and that while humans can relinquish mechanical control of writing to AI, they cannot relinquish ethical and academic responsibility for the output's accuracy, bias, and truthfulness. Applied to school policy, it shifts institutional focus from forensic detection to metacognitive responsibility.

How many schools currently have an AI policy?

Nearly 50% of teachers and principals report that their specific school or district does not currently have any AI policy, according to EdWeek Research Center data from 2025. Only 5 U.S. states have enacted comprehensive K-12 AI policies or requirements. Most schools are operating without official guidelines.

Do K-12 AI policies need to differ from university policies?

Yes. K-12 policies must address parental communication requirements, developmentally appropriate language by grade level, FERPA and COPPA data privacy protections for minors, and age-appropriate distinctions in what AI use is expected, permitted, and prohibited. University syllabus templates are structurally unsuitable for K-12 application.

Should AI detection software be used to enforce an AI policy?

No. AI detection software should not be the enforcement mechanism. The false positive rates are too high, the ESL and neurodivergent bias is documented, and the tools are easily bypassed. Process documentation requirements, supervised writing baselines, and oral defence protocols are more reliable and legally defensible.

Sources

- EdWeek Research Center. How School Districts Are Crafting AI Policy on the Fly. October 2025. edweek.org

- Education Week. AI Literacy Lessons and Policy Tracker. 2026. edweek.org

- Eaton, Sarah Elaine. Postplagiarism Fundamentals: Integrity and Ethics in the Age of GenAI. University of Calgary. 2025. ucalgary.scholaris.ca

- OECD. AI Literacy Framework for Primary and Secondary Education. 2024. learnworkecosystemlibrary.com

- UNESCO. AI for Skills Development in Higher Education. 2025-2026. unesco.org

- UNESCO. AI and Education: Protecting the Rights of Learners. 2025. unesdoc.unesco.org

- Center for Democracy and Technology. AI in Schools Report. 2025. cdt.org

- Coursera / Engageli. 25 AI in Education Statistics to Guide Your Learning Strategy in 2026. 2026. engageli.com

- CBC News. Northeast B.C. school district adopts AI guidelines. 2026. cbc.ca

- York Region District School Board. Student AI Guidelines (Grades 7-12). 2026. yrdsb.ca

- Montana OPI. Artificial Intelligence in K-12 Education Guidelines. October 2025. opi.mt.gov

- Carnegie Learning. 5 Tips For Addressing AI in Your Academic Integrity Code. 2026. carnegielearning.com
