The Case Against Punitive AI Policies: What Research Says

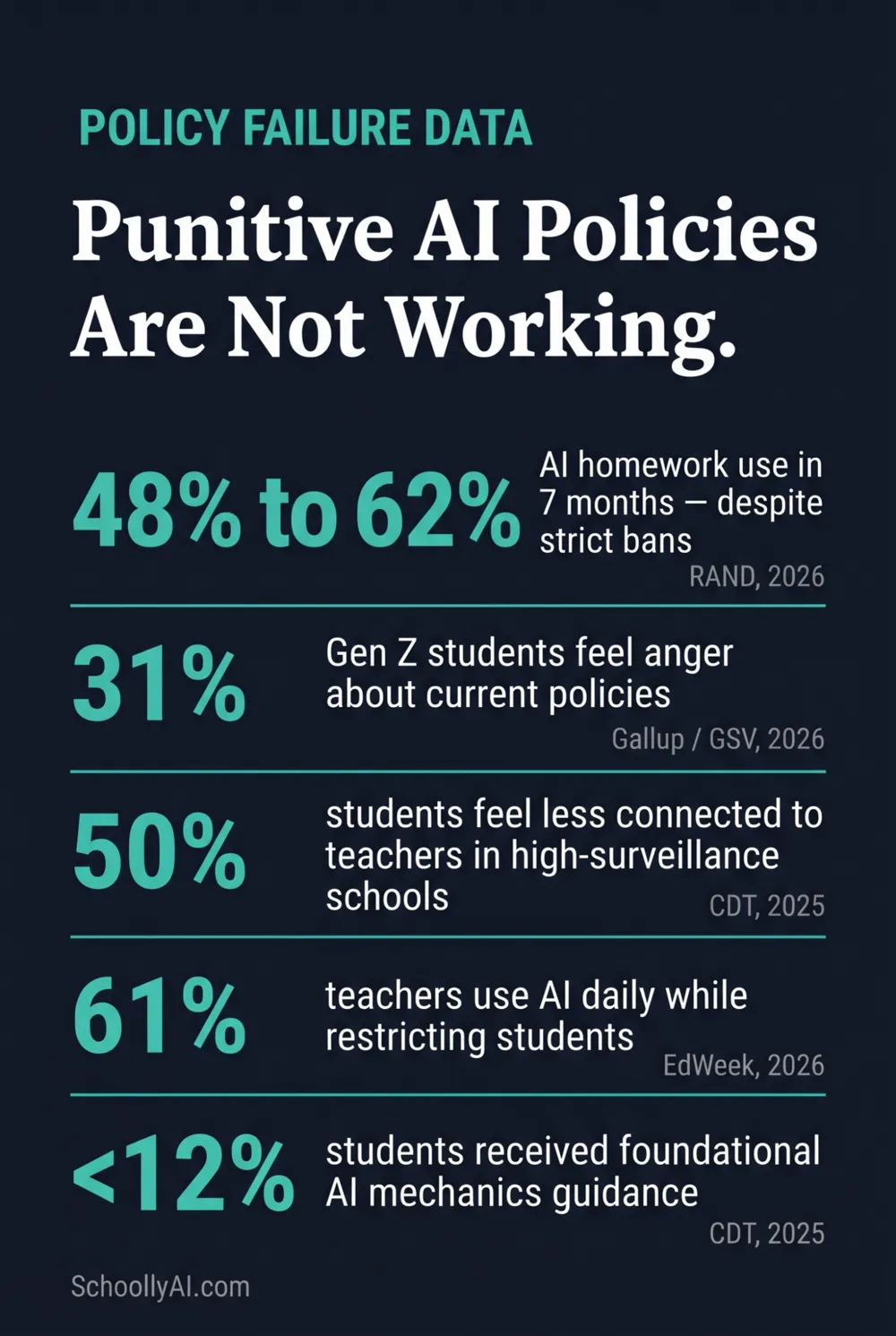

A 2025 CDT report found that in school districts with highly punitive AI surveillance, 50% of students reported feeling significantly less connected to their teachers. AI homework use rose from 48% to 62% between May and December 2025 despite widespread bans. Thirty-one percent of Gen Z students now report feeling anger about current school AI policies, up 9 points since 2025. The policies are not reducing AI use. They are reducing trust, increasing resentment, and leaving students, including those wrongly flagged for work they wrote themselves, without the skills they need.

- AI homework use jumped from 48% to 62% between May and December 2025, according to RAND Corporation data, despite strict institutional bans at many schools.

- 50% of students in high-surveillance school environments reported feeling less connected to their teachers, according to the Center for Democracy and Technology, 2025.

- 31% of Gen Z students feel anger about current AI policies, an increase of 9 points since 2025 (Gallup / GSV Ventures, 2026).

- Less than 12% of students have received foundational guidance on how these AI systems actually work, according to CDT, 2025.

- The academic consensus recommends a shift from punitive surveillance to structural integration: teaching AI use, modeling transparent attribution, and designing assignments that build judgment rather than bypass it.

The data on punitive AI policies is consistent across CDT, RAND, Gallup, and OECD: bans do not reduce AI use. They push it underground, where students use the tools without understanding their limitations.

The Disconnect Schools Won't Name Out Loud

Eighty-six percent of educational organizations now use generative AI tools, the highest adoption rate of any global industry, according to Coursera's 2026 report. That includes schools. That includes teachers. The tools are embedded in how the profession operates at every level except, formally, student learning.

Students see this. They watch teachers use AI to write rubrics, generate quiz questions, and draft parent communication, then sit in classrooms where using the same technology on their own assignments is treated as academic fraud. The research does not describe this as a perception problem on the students' part. It describes it as a real double standard with measurable consequences for policy compliance.

This is not an argument that student AI use should be unregulated. It is the starting point for understanding why punishment-first policies fail. A policy built on a rule that the enforcers visibly don't follow themselves does not produce compliance. It produces resentment, underground use, and the loss of any opportunity to actually teach students how to use these tools well.

What Punitive Policies Actually Produce

The documented outcomes of strict, punishment-first AI policies cluster around the same three categories: anxiety, strategic writing degradation, and relational breakdown.

Students under high surveillance report worrying that their legitimate work will be flagged. RAND Corporation's 2025 research found that 50% of students actively worry about false AI accusations. That anxiety produces a specific adaptive behavior: deliberately writing worse. In forum posts from 2024 to 2026, students consistently describe introducing grammatical errors, misused idioms, and awkward phrasing to get past detection tools. A policy designed to protect writing quality is instead producing deliberately degraded writing as a workaround.

The relational damage is documented by CDT. In districts with high-surveillance architectures, 50% of students report feeling less connected to their teachers, and 47% of educators report a decrease in peer-to-peer student connections. The attempt to police AI use with algorithms is eroding the foundational trust that instruction depends on. That is not a theoretical risk. It is a measured outcome at the school district level.

And the policies aren't working. AI homework use rose from 48% to 62% between May and December 2025. Not over years. Over seven months. The bans are not slowing adoption. They are teaching students to use AI covertly rather than transparently.
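The size of that jump can be stated in two ways, and the difference matters when comparing survey results. The following is a minimal Python sketch that uses only the 48% and 62% figures and the seven-month window quoted above; the function name `describe_change` is illustrative and does not come from RAND.

```python
def describe_change(start_pct: float, end_pct: float, months: int) -> dict:
    """Summarize the change between two survey percentages."""
    # Absolute change, measured in percentage points.
    point_change = end_pct - start_pct
    # Relative change, measured as a percent of the starting share.
    relative_change = point_change / start_pct * 100
    return {
        "percentage_points": point_change,
        "relative_percent": round(relative_change, 1),
        # Simple average over the window; not a claim of steady growth.
        "points_per_month": round(point_change / months, 1),
    }


summary = describe_change(start_pct=48, end_pct=62, months=7)
print(summary)
```

Read this way, the rise is 14 percentage points, which is roughly a 29% relative increase in the share of students using AI for homework, or an average of about 2 points per month across the window.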

| What Schools Observe | What the Data Shows | Source |
|---|---|---|
| Bans don't reduce AI use among students | AI homework use: 48% to 62% in 7 months despite bans | RAND Corporation, 2026 |
| Strict policies produce student anger | 31% of Gen Z report anger about AI policies, up 9 points | Gallup / GSV Ventures, 2026 |
| Surveillance damages teacher-student relationships | 50% of students feel less connected to teachers in high-surveillance districts | Center for Democracy and Technology, 2025 |
| Teachers use AI heavily despite restricting students | 61% of teachers used generative AI in daily work in 2025 | EdWeek Research Center, 2026 |
| Students understand the critical thinking risk but use AI anyway | 67% say over-reliance will harm their critical thinking | RAND Corporation, December 2025 |
| Students have not been taught how AI actually works | Less than 12% have received foundational AI mechanics guidance | Center for Democracy and Technology, 2025 |

That last row is the one that should concern schools the most. Less than 12% of students have received any foundational guidance on how these systems work. They are using tools they don't understand, in violation of policies they resent, without the critical literacy to identify when those tools are wrong. That is the actual outcome of the current approach.

The Research Case: What CDT, RAND, OECD, and UNESCO Found

The academic consensus from 2024 to 2026 is not divided on this. Across independent research institutions, international education bodies, and behavioral studies, the recommendation is consistent: move away from punitive surveillance toward structural integration and proactive literacy.

Elizabeth Laird, Director of Equity in Civic Technology at the Center for Democracy and Technology, put the equity dimension plainly in 2025: "As many hype up the possibilities for AI to transform education, we cannot let the negative impact on students get lost in the shuffle. Our research shows AI use in schools comes with real risks, like large-scale data breaches, tech-fueled sexual harassment and bullying, and treating students unfairly."

The OECD's AI Literacy Framework, published in 2024, specifies that educational policies must deliberately teach students to engage with, create with, manage, and design AI systems while evaluating their societal benefits and risks. That framework explicitly treats AI literacy as a core educational outcome, not a threat to be managed.

UNESCO's global guidelines add the human-centered constraint: policies must protect student data privacy, address algorithmic bias, and ensure that AI supplements rather than replaces the teacher-student relationship. These are not arguments against AI in schools. They are arguments against deploying AI surveillance tools without the same scrutiny applied to AI learning tools.

Pat Yongpradit, General Manager of Global Education and Workforce Policy at Microsoft, summarized the practitioner case in 2026: "What these numbers tell me is that educators are meeting the AI moment and leaning into their responsibility to prepare kids not just for academic subjects but life. They understand the urgency to help students build the knowledge and judgment they'll need to navigate AI."

Why Bans Fail Structurally

The cat-and-mouse dynamic is built into the architecture of prohibition. Every restriction on AI use creates a market for tools designed to circumvent that restriction. AI humanizer software exists specifically because detection tools exist. Paraphrasing models exist specifically because detection accuracy is high enough to create demand for workarounds.

The students being caught by detection tools are disproportionately the ones who aren't sophisticated enough to bypass them. A student who knows about a free paraphrasing app sails through detection. A student who generated an essay with AI but didn't know to process it through a paraphraser gets flagged. The policy ends up punishing the least tech-literate AI users while providing no barrier to the most tech-literate ones. That is not a functional integrity system.
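Rough arithmetic makes the asymmetry concrete. The sketch below is illustrative only. It takes the 10-20% false positive range and the 4.6% post-paraphrase figure reported elsewhere in this article, reads the 4.6% as the share of paraphrased AI essays that still get flagged (an assumption about how that figure is defined), and applies both to a hypothetical batch of 100 essays of each kind. The batch size and the function name `expected_flags` are not from any cited study.

```python
def expected_flags(n_essays: int, flag_rate_pct: float) -> float:
    """Expected number of essays flagged, given a flag rate in percent."""
    return n_essays * flag_rate_pct / 100


BATCH = 100  # hypothetical batch size, chosen only for easy reading

# Authentic human essays wrongly flagged (10-20% false positive range).
human_low = expected_flags(BATCH, 10)
human_high = expected_flags(BATCH, 20)

# Paraphrased AI essays still flagged (assumed reading of the 4.6% figure).
paraphrased = expected_flags(BATCH, 4.6)

print(f"Authentic essays flagged: {human_low:.0f} to {human_high:.0f} per {BATCH}")
print(f"Paraphrased AI essays flagged: about {paraphrased:.1f} per {BATCH}")
```

Under these assumptions, a student who wrote their own essay is roughly two to four times as likely to be flagged as a student who ran AI output through a paraphraser.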

Behavioral research cited by OECD and UNESCO adds the learning dimension. In zero-tolerance environments, students who use AI secretly do so without understanding its limitations, which means they adopt hallucinations, biased outputs, and factual errors uncritically. The student who uses AI in the open, in a structured classroom context where the errors are visible and discussed, develops better critical evaluation skills than the student who uses it covertly with no feedback loop. The ban produces the worse learning outcome.

What the Data Recommends Instead

The research converges on several practical alternatives to punishment-first policies. These are not theoretical. They are approaches currently used by the institutions that have moved away from detection.

Teach how the tools work before enforcing rules about them. Students who understand that AI generates plausible-sounding text by predicting the next likely word, and who have seen that process produce factual errors, are far better equipped to use the tools as thinking aids rather than answer-generation machines. Less than 12% of students have had that conversation. That gap is where the policy work should start.

Use AI for controlled critical analysis. Assigning students to find factual errors in a machine-generated essay, or to compare an AI's argument to a primary source, positions students as active evaluators rather than passive consumers. It builds the skepticism that a student covertly using AI for homework is never developing.

Model transparent attribution explicitly. Randy Kolset, an educational administrator writing in EdTech Digest in 2025, named the double standard directly: "If teachers use AI without acknowledgment, we cannot ask students to do otherwise. If a teacher uses a system to generate a quiz or a rubric, they should explicitly cite the tool at the bottom of the document, showing students exactly what ethical, responsible attribution looks like in practice."

Design assignments that require human judgment. An assignment asking students to connect a global policy issue to a specific classroom conversation, a specific local event, or a specific personal experience is an assignment that AI cannot complete convincingly because it lacks the context. That design choice reduces the incentive for dishonest AI use without requiring any enforcement mechanism.

The Teacher Double Standard: Why It Matters More Than It Seems

The EdWeek Research Center found in 2026 that 61% of teachers used generative AI tools in their daily professional work in 2025. Teachers who use AI to plan lessons, write rubrics, draft communications, and generate assessment materials are using the technology in exactly the way students are being penalized for.

Students notice. The Gallup and GSV Ventures data from 2026 shows the emotional outcome directly: 31% of Gen Z students report feeling anger about current AI policies, with the nine-point increase from 2025 tracking the period when teacher AI adoption rose fastest. The anger is not about the rules in the abstract. It is about the perceived unfairness of a system that restricts students for behavior that their teachers engage in daily.

This matters for policy compliance because the perceived legitimacy of a rule drives whether students follow it. A rule enforced by people who visibly don't follow it themselves is a rule that generates strategic evasion, not genuine compliance. The rise in AI homework use from 48% to 62% over seven months is not because students became less principled. It is because the policy environment became less credible.

The practical response is not for teachers to stop using AI. It is to make that use visible, cited, and discussed, so that the model students observe is transparent engagement rather than hypocrisy, the same transparency that a defensible K-12 integrity policy is built around.

Frequently Asked Questions

Why do punitive AI policies fail?

Punitive AI policies fail because they operate on the assumption that AI is purely a cheating tool, which ignores its reality as embedded societal infrastructure. They produce anxiety, resentment, and deliberate writing degradation without reducing actual AI use. AI homework use jumped from 48% to 62% between May and December 2025 despite widespread bans.

What are the documented drawbacks of AI detection and surveillance?

Documented drawbacks include 10-20% false positive rates on authentic human writing, structural bias against ESL and neurodivergent students, 71% of teachers reporting significant added administrative burden, 50% of students in high-surveillance environments feeling less connected to their teachers, and detection accuracy collapsing to 4.6% once text is processed through a paraphrasing tool.

What does the research recommend instead?

Research from CDT, RAND, OECD, and UNESCO consistently recommends a shift to structural integration and proactive literacy: teaching students how AI works, including its limitations and biases; using AI for controlled critical analysis; modeling transparent attribution; and designing hyper-local assignments that AI cannot complete convincingly.

Do teachers use AI themselves?

Yes. EdWeek Research Center data from 2026 shows that 61% of teachers used generative AI tools in their daily work in 2025. Students are aware of this. Gallup and GSV Ventures found in 2026 that 31% of Gen Z students feel anger about current AI policies, an increase of 9 points since 2025. The perceived double standard is a documented driver of policy resentment and non-compliance.

Does AI use harm students' critical thinking?

RAND research from December 2025 found that 67% of students acknowledge that over-reliance on generative tools will harm their critical thinking. But bans don't reduce use; they drive it underground. Students who use AI covertly without understanding its limitations are more likely to adopt hallucinations and bias uncritically, which is worse for critical thinking than structured, supervised AI use.

Sources

- Center for Democracy and Technology. AI in Schools Report. 2025. cdt.org

- RAND Corporation. Student Use of AI for Homework Rises as Concerns Grow. March 2026. rand.org

- RAND Corporation. More Students Use AI for Homework, and More Believe It Harms Critical Thinking. December 2025. rand.org

- Gallup / GSV Ventures. 2026 AI Education Survey. 2026.

- EdWeek Research Center. More Teachers Are Using AI in Their Classrooms. Here's Why. January 2026. edweek.org

- Coursera. AI in Higher Education Report. 2026. coursera.org

- OECD. AI Literacy Framework for Primary and Secondary Education. 2024. learnworkecosystemlibrary.com

- UNESCO. AI and Education: Protecting the Rights of Learners. 2025. unesdoc.unesco.org

- Kolset, Randy. Practice What You Teach: Academic Integrity and AI. EdTech Digest. October 2025. edtechdigest.com

- EdWeek. Are AI Literacy Lessons Now the Norm? March 2026. edweek.org
