Appeals and False Positives: Why Students Are Winning

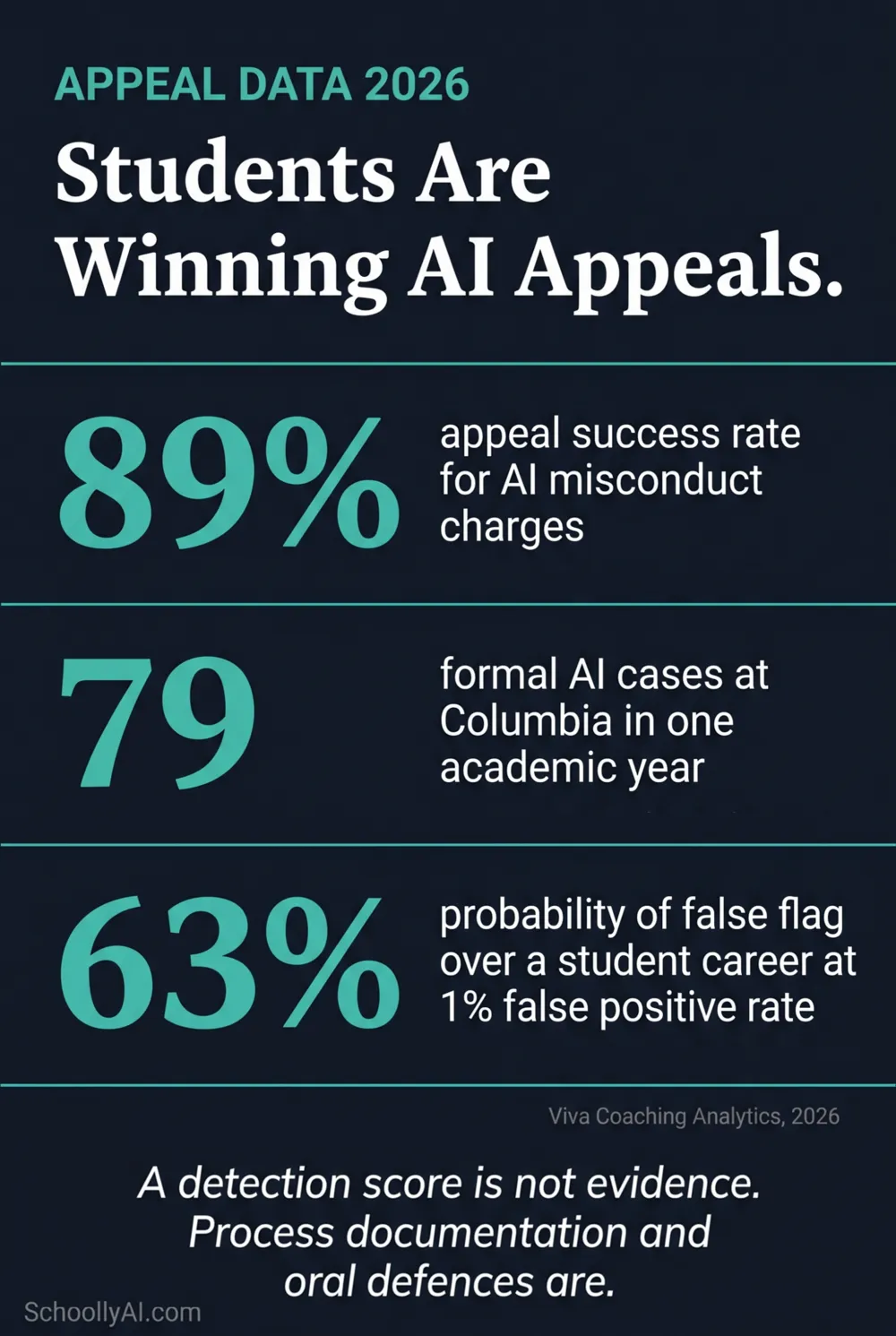

A commercial academic defence coaching service reported an 89% success rate for students formally appealing AI academic misconduct charges in 2026. Not a student advocacy number. A business metric from a company whose revenue depends on winning. That figure tells you something specific about the state of AI detection in formal hearings: institutions cannot scientifically prove that a text was generated by a machine, and students who fight back with process evidence are winning at a rate that should concern any school still running detection-based disciplinary processes.

- Commercial AI misconduct defence services report an 89% appeal success rate in 2026, driven by institutions' inability to prove AI authorship beyond a probabilistic score.

- Columbia University recorded 79 formal cases of unauthorized generative AI use in 2024-25, making it the second most commonly reported academic integrity concern. Appeal volumes are rising alongside case counts.

- Review panels require tangible, verifiable evidence. A detection score is probabilistic. Version histories and oral defences are not. Faculty relying solely on dashboard percentages frequently lose formal hearings.

- Most university policies require formal appeals to be filed within 10 to 14 calendar days. Students who move fast with process evidence are the ones winning.

- The administrative burden of appeals is so high that many instructors have stopped reporting suspected AI use altogether, creating the opposite of the intended effect.

The appeal success rate reflects a structural evidentiary problem: probabilistic scores cannot meet the proof standard that formal review panels apply.

The 89% Appeal Success Rate and What It Actually Means

The 89% figure comes from Viva Coaching Analytics data published in 2026, covering academic defence services that coach students through misconduct hearings. Their business model depends on winning appeals to attract clients, so the figure is self-reported rather than independently audited. It is nonetheless consistent with what research and institutional guidance say about formal hearings.

What it reflects is a fundamental evidentiary asymmetry. Plagiarism was historically objective. You could point to the source document. The match was there or it wasn't. AI generation is entirely different. There is no source document. There is no origin point. There is a probabilistic percentage from a proprietary algorithm that cannot name the AI tool used, cannot identify when the text was generated, and cannot distinguish between a student who used AI and a student who writes formally.

Research published in the International Journal for Educational Integrity in 2026 summarised the formal hearing dynamic directly: "Faculty relying solely on a percentage score from software dashboards frequently lose these appeals when students present counter-evidence, such as cloud-based document edit histories, early developmental drafts, or the ability to eloquently defend the concepts verbally during a hearing."

The appeal success rate is not a sign of students gaming the system. It is the natural result of applying a scientific standard of evidence to a claim that cannot be scientifically verified. A detection score cannot meet that standard. Process evidence can.

Why the Score Alone Fails in a Formal Hearing

Academic integrity review panels, when functioning correctly, apply a burden of proof. The institution makes a claim. The institution provides evidence. The student responds. The panel decides.

A detection score is evidence that a text's statistical properties resemble AI output. It is not evidence of authorship. Those are different claims. The panel's job is to determine authorship. The score cannot do that.

When a student arrives at a hearing with Google Docs version history showing 47 distinct editing sessions across 8 days, rough drafts from two weeks prior, a browser history showing research sessions on the relevant dates, and the ability to explain the hardest part of their argument under mild questioning, the panel faces a direct conflict between probabilistic software output and human-documented process. The human-documented process wins because it addresses the actual question. The software output does not.
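Counts like "47 distinct editing sessions across 8 days" come from grouping raw revision timestamps into sessions. The sketch below is a minimal illustration, assuming the timestamps have already been exported from the document's version history (exporting them is out of scope here) and assuming a hypothetical 30-minute pause marks the start of a new session. The function name and sample data are illustrative, not part of any tool.

```python
from datetime import datetime, timedelta

def summarize_edit_sessions(timestamps, gap=timedelta(minutes=30)):
    """Return (session_count, calendar_days_spanned) for a list of edit times.

    A new session starts whenever the pause since the previous edit exceeds
    `gap`. The 30-minute default is an assumed threshold, not a standard.
    """
    ordered = sorted(timestamps)
    if not ordered:
        return 0, 0
    sessions = 1
    for previous, current in zip(ordered, ordered[1:]):
        if current - previous > gap:
            sessions += 1
    # Count calendar days inclusively, from the first edit to the last.
    days_spanned = (ordered[-1].date() - ordered[0].date()).days + 1
    return sessions, days_spanned

# Hypothetical revision timestamps for one essay.
edits = [
    datetime(2026, 3, 1, 9, 0),
    datetime(2026, 3, 1, 9, 10),
    datetime(2026, 3, 1, 14, 0),
    datetime(2026, 3, 3, 20, 0),
    datetime(2026, 3, 3, 20, 20),
]
print(summarize_edit_sessions(edits))  # (3, 3)
```

The gap threshold is a judgment call. Stating it explicitly lets a panel see exactly how the session count was produced.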

Cornell University's Generative AI Academic Integrity Guidelines, published in 2025, named exactly this standard: "Faculty should follow up their initial concerns about a generative AI-related violation with tangible evidence, such as the inclusion of advanced references that exceed the scope of the course, or situations where the student is unable to verbally explain, expand upon, or defend their submitted work when queried." That guidance acknowledges what the appeal data confirms: a detection score is not tangible evidence. It is a trigger for further investigation.

When suspicion arises, the most reliable approach is a structured conversation targeting the cognitive experience of writing, not a second detection pass. A four-question oral framework designed to reveal authentic engagement is far more defensible in a hearing than any dashboard percentage.

The Columbia Data: Appeal Volumes at Scale

Columbia University's Center for Student Success and Intervention published its 2024-25 annual report with a finding that received less attention than it deserved. Unauthorized use of generative tools rose to 79 formal cases, making it the second most commonly reported academic integrity concern at the institution.

That is one elite university in one academic year. It does not include the cases that instructors decided not to report after calculating the documentation burden. It does not include the students who accepted a grade penalty rather than go through a formal process. The 79 visible cases are only the reported portion of a much larger problem.

| Finding | Statistic | Source |
|---|---|---|
| Appeal success rate for AI misconduct charges | 89% | Viva Coaching Analytics, 2026 |
| Formal AI misconduct cases at Columbia in 2024-25 | 79 (2nd most reported concern) | Columbia CSSI Annual Report, 2025 |
| Standard appeal filing window at most institutions | 10 to 14 calendar days | Kean University / Washington University policies, 2025 |
| Students receiving clear guidance on their school's AI policy | 22% | Center for Democracy and Technology, 2025 |
| Educators noting students struggle to differentiate AI vs. human content | 61% | EdWeek Research Center, 2026 |

That last figure is the other side of the same coin. If 61% of educators struggle to tell AI from human writing by eye, detection software produces false positives at a rate of 10 to 20%, and 89% of formal appeals succeed, then the detection-first disciplinary model is not producing reliable outcomes at any point in the process.
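Bayes' rule makes the gap between "flagged" and "AI-written" concrete. This minimal sketch uses the 10 to 20% false positive range quoted above. The 90% detection rate and the assumption that 10% of submissions are AI-generated are hypothetical inputs chosen for illustration, not measured values.

```python
def probability_ai_given_flag(sensitivity, false_positive_rate, base_rate):
    """Bayes' rule: share of flagged papers that are actually AI-written.

    sensitivity         -- P(flag | AI-written), an assumed value
    false_positive_rate -- P(flag | human-written)
    base_rate           -- share of submissions that are AI-written, assumed
    """
    true_flags = sensitivity * base_rate
    false_flags = false_positive_rate * (1 - base_rate)
    return true_flags / (true_flags + false_flags)

for fpr in (0.10, 0.20):
    p = probability_ai_given_flag(sensitivity=0.90, false_positive_rate=fpr, base_rate=0.10)
    print(f"False positive rate {fpr:.0%}: P(AI-written | flagged) = {p:.0%}")
# False positive rate 10%: P(AI-written | flagged) = 50%
# False positive rate 20%: P(AI-written | flagged) = 33%
```

Under these assumed inputs, a flag is at best a coin flip. That is why a panel treats it as a reason to investigate rather than as proof. Different base rates or detection rates change the numbers, but the structure of the calculation stays the same.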

Gengyan Tang, writing on the LSE Impact of Social Sciences blog in February 2026, described what has emerged: "What is often described as 'academic misconduct appeal assistance' is no longer a marginal service. It has evolved into a platform-oriented business model that systematically captures students precisely when they are likely to feel uncertain or anxious." The commercial defence industry is a symptom of an institutional failure to build a defensible process.

The Administrative Cost Schools Aren't Counting

The visible cost of an AI misconduct appeal is the hearing itself. The hidden cost is everything that leads to it and everything that follows.

Filing a formal charge requires the instructor to produce charging documents, provide relevant syllabus statements, document the timeline of their intervention, and in most institutions participate in at least one formal hearing. For a single case, that is several hours of work. For an institution running detection across tens of thousands of submissions, the false positive rate generates a caseload that no administrative office is resourced to handle at full quality.
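The caseload point is simple arithmetic. This minimal sketch describes a hypothetical institution with 20,000 submissions, 90% of them human-written. It uses a 10% false positive rate (the low end of the range quoted elsewhere in this piece) and assumes four staff hours per case as a stand-in for "several hours". Every input is an assumption, not institutional data.

```python
def false_flag_workload(submissions, human_share, false_positive_rate, hours_per_case):
    """Expected false flags and the staff hours needed to process them.

    All inputs are hypothetical. Only human-written work can be falsely flagged.
    """
    human_written = submissions * human_share
    false_flags = human_written * false_positive_rate
    return false_flags, false_flags * hours_per_case

flags, hours = false_flag_workload(
    submissions=20_000, human_share=0.90, false_positive_rate=0.10, hours_per_case=4
)
print(f"{flags:,.0f} false flags, about {hours:,.0f} staff hours")
# prints "1,800 false flags, about 7,200 staff hours"
```

The figures scale linearly, so doubling the false positive rate to 20% doubles both the false flags and the staff hours.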

The result is predictable and well-documented in educator forums: instructors stop reporting. They see a suspicious paper, they run the detection tool, they get a flag, they calculate the documentation burden, and they decide it isn't worth it. They grade the paper or assign a lower mark without a formal process. This behaviour defeats the entire purpose of running detection. The tool produces anxiety and administrative waste without producing accountability.

The Center for Democracy and Technology found in 2025 that 71% of teachers reported significant added administrative burden from trying to determine whether student work was authentic. That is time pulled from instruction, from feedback, from the actual job. The detection system is consuming resources at both ends: generating false accusations that require appeals, and generating valid suspicions that instructors decide not to pursue.

What Institutions Must Fix Before the Next Appeal Season

The research from 2024 to 2026 produces a clear institutional checklist. These are the structural fixes that reduce both the rate of false accusations and the number of successful appeals against legitimate charges.

Make process documentation part of the grade from day one. Requiring outlines, annotated bibliographies, and draft histories as graded components of every major assignment builds the evidentiary record before any suspicion arises. If a student fails to provide process documentation, the grade reflects that absence. No accusation required. No appeal possible on those grounds.

Update syllabi to state policy explicitly. Only 22% of students report receiving clear guidance on their school's AI policy. Sweeping bans phrased in vague language are the easiest to overturn in an appeal because they fail the notice standard. A policy that specifies what is permitted by assignment type, what requires citation, and what is prohibited is far harder to appeal successfully.

Train faculty on what constitutes actionable evidence. A detection score is a trigger for investigation, not a verdict. Faculty who understand this distinction approach the initial conversation correctly. Faculty who treat a dashboard percentage as proof produce the conditions for the 89% appeal success rate.

Decide institutionally on detection, not individually. A teacher who disables detection in their own workflow but whose school still runs it institutionally is still exposed to escalation from outside their classroom. The decision to continue or discontinue AI detection belongs at the administrative level, with a published policy that teachers can reference when a parent or colleague escalates a flag.

Building the evidentiary record before any suspicion arises removes most of the adversarial dynamic. Schools that build process documentation into their assignment structure rarely end up in appeal hearings, because there is little left to contest. Detection-first approaches consistently fail because they invert that logic, chasing evidence after the fact instead of requiring it upfront.

Frequently Asked Questions

What is the success rate for appeals against AI misconduct charges?

Commercial academic defence coaching services reported an 89% success rate for students formally appealing AI-related academic misconduct charges in 2026. The high success rate reflects the fact that institutions cannot scientifically prove AI authorship using probabilistic detection scores alone, especially when students present counter-evidence such as version histories and oral defences.

Why are students winning AI misconduct appeals?

Students are winning because review panels require tangible, verifiable evidence of AI use, and a detection score is not tangible evidence. It is a probabilistic estimate. When students present Google Docs version histories, draft documents, annotated bibliographies, or pass an oral defence, faculty relying solely on a dashboard percentage frequently lose.

How long do students have to file an appeal?

Most university and school academic integrity policies require formal appeals to be filed within 10 to 14 calendar days of receiving the charge. Students should begin gathering process evidence and drafting their appeal immediately after being notified.

What evidence is most effective in an AI misconduct appeal?

The most effective evidence is Google Docs version history showing timestamped edits over multiple sessions, successive rough drafts, handwritten notes, browser search histories corresponding to research dates, and a successful oral defence. Students also regularly cite the detection vendor's own disclaimer language about the tool's limitations.

What is the administrative cost of AI misconduct appeals for faculty?

The administrative cost is substantial. Faculty must file charging documents, provide syllabus statements, document intervention timelines, and attend lengthy appeal hearings. Many instructors report that the process is so burdensome that they stop reporting suspected AI use altogether, which defeats the stated purpose of running detection.

Sources

- Viva Coaching Analytics. AI Misconduct Appeal Success Data. 2026. hub.paper-checker.com

- Columbia University CSSI. 2024-25 Annual Report. 2025. cssi.columbia.edu

- Tang, Gengyan. The growing market for student academic misconduct services. LSE Impact of Social Sciences. February 2026. blogs.lse.ac.uk

- Cornell University. Generative AI Academic Integrity Guidelines. 2025. cornell.edu

- Frontiers in Education. Addressing student use of generative AI in schools and universities through academic integrity reporting. 2025. frontiersin.org

- International Journal for Educational Integrity / Taylor and Francis. Heads we win, tails you lose: AI detectors in education. 2026. tandfonline.com

- Center for Democracy and Technology. AI in Schools Report. 2025. cdt.org

- Kean University. Academic Integrity Policy. 2025. kean.edu

- EdWeek Research Center. AI in Classrooms Survey. 2026. edweek.org

Defending Academic Integrity in 2026

The AI Literacy mini-course covers how to build an assessment approach that doesn't depend on detection software, and how to have honest conversations about AI use that reduce the adversarial dynamic. Free. No email required.
