Student AI Denial vs. Evidence: A Teacher's Framework

The detection score says 95%. The student is looking you in the eye, genuinely distressed, saying they wrote every word. You have no idea what to believe. This is the defining professional situation for teachers in 2026, and a second detection pass isn't going to resolve it. What resolves it is a structured conversation that targets the cognitive experience of writing, not the tool that may or may not have been used. If you're a student on the other side of this conversation, the companion post "Falsely Accused of AI Cheating? Do This" covers your options.

- Human detection of AI writing is barely better than chance without tools. Your instinct alone is not reliable evidence.

- Interrogating the cognitive struggle of writing is more reliable than interrogating the tool. AI removes friction. A student who wrote the paper remembers where the friction was.

- Four specific questions reveal authentic engagement in a way no detection score can match.

- If evidence is inconclusive after the conversation, issuing a zero is unjust. Use the situation as a forward-looking teaching moment instead.

- The long-term solution is assignment redesign, not better detection software.

A structured conversation targeting the cognitive experience of writing outperforms any detection tool in a genuine denial situation.

The Desk Standoff: Why More Detection Won't Help

You have a student in front of you. You have a 95% AI detection score on the screen behind you. The student is distressed and vehement. This situation is emotionally charged, professionally risky for you, and consequential for them.

Running the paper through a second detection tool will not help. If anything, the variation between tools will create more ambiguity, not less. The JISC AI Detection Assessment from 2025 found that Copyleaks scored 100% on one dataset and 0% on a minimally modified version. Different tools will give you different numbers on the same paper. That's not a resolution. That's additional noise.

Here is what the research says about human detection of AI writing: without tools, educators are barely better than random chance at identifying it by instinct alone. Thesify.ai's 2026 evidence guide puts unassisted human accuracy at roughly 50 percent. Your gut feeling about whether the writing "sounds like" the student is not reliable evidence.
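To see why a coin-flip judgment adds nothing, it can help to run the arithmetic. The sketch below is a minimal illustration in Python using Bayes' rule. It assumes that "roughly 50 percent accuracy" means the reader is right half the time both on AI-written and on student-written essays. The function name `posterior` and the starting beliefs in the loop are hypothetical values chosen for illustration, not figures from any study.

```python
def posterior(prior, sensitivity, specificity):
    """Probability the essay is AI-written, given that the reader flagged it."""
    true_flags = prior * sensitivity                # AI-written and flagged
    false_flags = (1 - prior) * (1 - specificity)   # student-written but flagged
    return true_flags / (true_flags + false_flags)


# Assumption: 50 percent accuracy is read as a coin flip in both directions.
coin_flip = 0.5

# Hypothetical starting beliefs, before the teacher's instinct is consulted.
for prior in (0.1, 0.2, 0.5):
    updated = posterior(prior, coin_flip, coin_flip)
    print(f"belief before: {prior:.2f}  belief after a gut-feeling flag: {updated:.2f}")
```

Under that assumption, the belief after the flag equals the belief before it for every starting value, which is another way of saying the instinct carries no evidential weight.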

What you need is a framework that produces evidence through conversation. The broad context is covered in "AI Detectors Are Failing Honest Students." This post gives you the specific conversational tools to use in that meeting.

Interrogate the Struggle, Not the Tool

Generative AI removes cognitive friction. You type a prompt and receive a polished essay in seconds. There is no experience of not knowing how to connect two ideas, no memory of the paragraph you rewrote three times, no frustration over a source you couldn't cite correctly.

A student who actually wrote a complex paper carries that experience. They remember what was hard. They can describe the argument they abandoned after writing two hundred words of it. They know which source was the one they almost misread.

A student who generated the essay with AI cannot reconstruct any of that, because it didn't happen. They can tell you what the paper says. They cannot tell you what it felt like to write it, because no writing occurred.

This asymmetry is the most reliable diagnostic available to you in a denial situation. Target it.

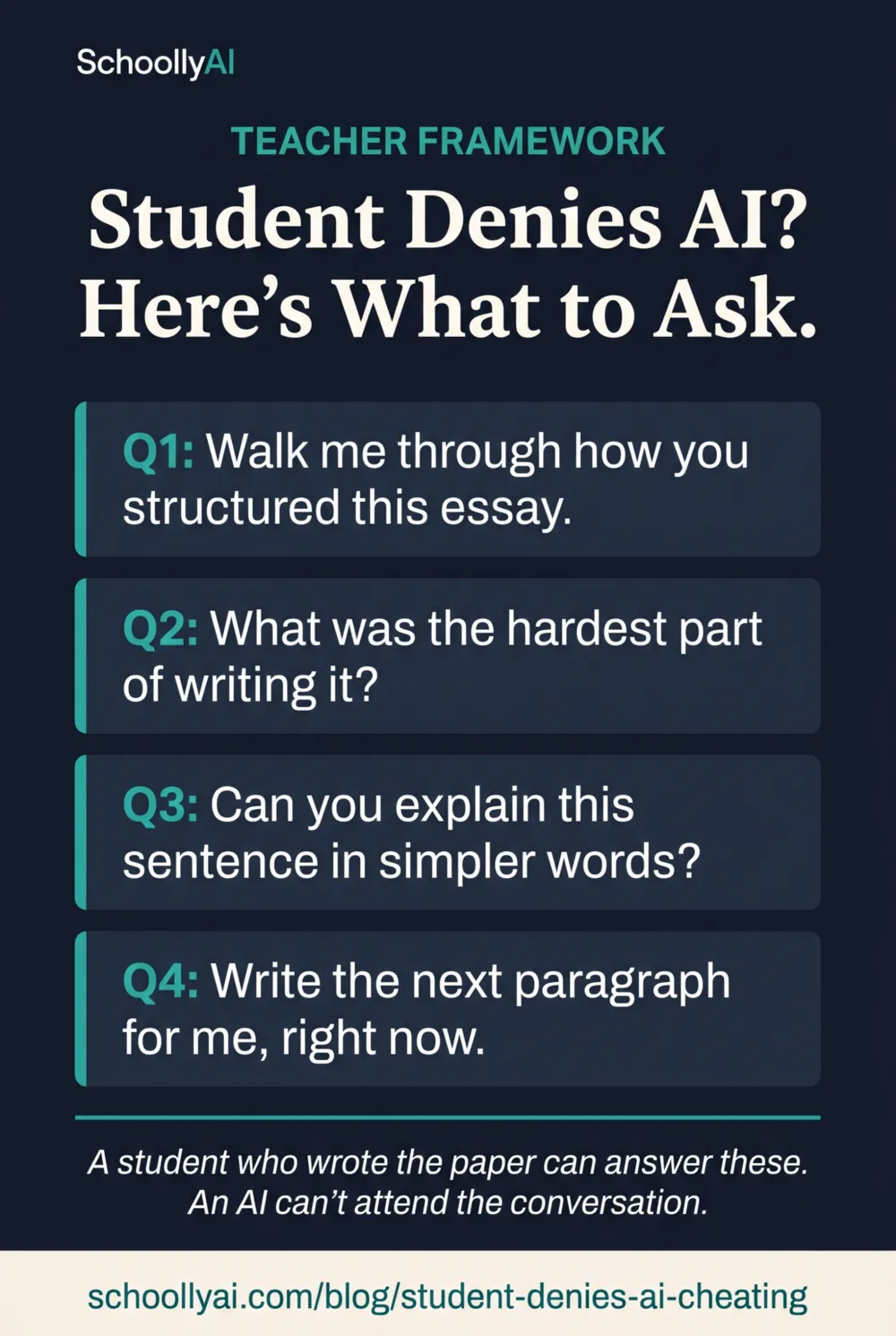

The Four-Question Framework

These four questions, used in sequence, produce the most useful evidence a teacher can gather in a denial conversation. They don't prove innocence or guilt definitively. But they reveal a pattern of engagement that either aligns with or contradicts the claim that the student wrote the work.

Conversations, Not Confrontations

Question 1: Process Inquiry

"Can you walk me through exactly how you structured this essay? Tell me what your argument was and how you decided to organise it."

What you're looking for: A student who wrote the paper can explain the logic of their structure. A student who prompted AI often knows what the paper concludes but cannot explain the architectural decisions that led there.

Question 2: Identifying Struggle

"What was the absolute hardest part of researching and writing this? What gave you the most trouble?"

What you're looking for: Authentic writing is hard. A genuine writer can describe specific friction points. A student who used AI either says nothing was hard (suspicious) or invents a plausible-sounding struggle that doesn't match anything specific in the paper (also suspicious).

Question 3: Comprehension Test

"I'm looking at this sentence here [point to a complex passage]. Can you explain what that means in simpler words?"

What you're looking for: A student who wrote the sentence chose those words deliberately and can unpack them. A student who generated the sentence may not understand it well enough to explain it differently.

Question 4: Style Consistency

"I'd like you to write the next paragraph of this essay, continuing from where it ends. Take your time."

What you're looking for: Compare the live writing to the submitted text. Authentic authors produce consistent voice, vocabulary level, and argumentative style under pressure. If the in-session paragraph is dramatically different in quality or style, that divergence matters.

No single question is definitive on its own. What you're looking for is a pattern across all four. A student who answers consistently, specifically, and with genuine detail is probably telling the truth. A student who gives generic, implausible, or evasive answers across multiple questions is giving you a different signal.
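For teachers who keep meeting notes in a script or spreadsheet, reading the pattern across all four questions can be sketched as a simple record. This is a minimal illustration in Python, not a scoring system. The names `Observation` and `summarize` and the three labels `specific`, `generic`, and `evasive` are assumptions introduced here, loosely following the wording above. The sketch deliberately returns a tally rather than a verdict, and it refuses to summarize until every question has been asked.

```python
from collections import Counter
from dataclasses import dataclass

# The four questions of the framework, in the order they are asked.
QUESTIONS = ("process", "struggle", "comprehension", "style")

# Hypothetical labels for the teacher's impression of each answer.
LABELS = ("specific", "generic", "evasive")


@dataclass
class Observation:
    question: str  # one of QUESTIONS
    label: str     # one of LABELS
    note: str      # what the student actually said or wrote


def summarize(observations):
    """Tally labels across all four questions; no single answer decides anything."""
    for obs in observations:
        if obs.question not in QUESTIONS:
            raise ValueError(f"unknown question: {obs.question}")
        if obs.label not in LABELS:
            raise ValueError(f"unknown label: {obs.label}")
    asked = {obs.question for obs in observations}
    missing = [q for q in QUESTIONS if q not in asked]
    if missing:
        raise ValueError(f"not yet asked: {missing}")
    return Counter(obs.label for obs in observations)


# Example notes from one hypothetical meeting.
notes = [
    Observation("process", "specific", "Explained why the counterargument comes third."),
    Observation("struggle", "specific", "Described abandoning an early thesis."),
    Observation("comprehension", "generic", "Restated the sentence without simplifying it."),
    Observation("style", "specific", "Live paragraph matched the submitted voice."),
]
tally = summarize(notes)
print(dict(tally))
```

Keeping the free-text `note` next to each label matters more than the tally itself: if the case goes to an appeal, what the student actually said is the evidence, and the label is only your reading of it.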

When Evidence Stays Inconclusive

Sometimes the conversation doesn't resolve the question. The student is articulate but you're still not sure. The in-session writing is decent but not definitively matching or mismatching.

In this situation, issuing a zero based on algorithmic suspicion alone is unjust. Turnitin's own documentation states explicitly that detection scores should not be the sole basis for adverse actions against a student. If your own follow-up conversation hasn't produced clear evidence of AI use, you have not met the evidentiary threshold for a misconduct charge.

Use the situation as a forward-looking teaching moment instead. Establish explicit expectations for process documentation on future assignments. Tell the student that going forward you will expect to see draft histories and research notes submitted with major papers. This protects both of you.

It also sends a clear signal that matters to the student: you take the work seriously, you're watching, and the next assignment has no ambiguity about process requirements. For most students who are genuinely on the line about AI use, this changes behavior more reliably than a zero they can appeal.

The Real Fix: Redesign the Assignment

The Conversations, Not Confrontations framework handles individual situations. It does not solve the structural problem. The structural fix is assignment redesign.

John Lande, writing in Indisputably in January 2026, made the point directly: "Redesigning assignments in ways that make cheating riskier... Some students may be deterred if cheating becomes harder, less effective, and more likely to result in lower grades." An assignment where AI provides a clear advantage is an assignment asking to be exploited.

Curriculum specialist Vee Murugan wrote in The Educator's Room in March 2026: "Instead of banning AI, educators must teach students how to use it ethically and transparently so it supports learning rather than becoming a shortcut for academic dishonesty. AI is not the problem and it certainly did not introduce academic dishonesty into our classrooms."

AI-resistant assignments require one or more of the following: hyper-local knowledge that only someone in your specific class on a specific date would have, documented process evidence submitted alongside the final product, references to personal experience or specific classroom discussions, or oral reflection components where students explain their thinking. An AI cannot produce local knowledge. It cannot attend your class discussion. It cannot write a reflection about a specific moment in the student's own experience.

According to University of Utah employer survey data from 2024 and 2026, 73% of employers currently use generative AI in their operations. Students will use AI professionally. The question is whether they're learning to use it as a thinking tool or a bypass tool, and the answer to that question depends largely on what you assign them.

What the Data Shows About Teacher AI Adoption and the Gap

Teachers are using AI tools themselves. OECD Digital Education Outlook and TALIS data from 2024 and 2025 show that, on average, 68% of teachers report using AI to learn about a topic and 64% use it to generate lesson plans. This is not a technophobe versus technophile situation. Teachers are integrating AI into their own practice while simultaneously trying to determine when students are using it inappropriately.

The professional development has not kept up. Only 38% of teachers had participated in AI-related learning by the time of those surveys, with 29% still reporting unmet professional development needs around AI use. The gap between AI adoption and AI literacy among teachers is real, and it is part of why the response to student AI use has leaned so heavily on detection tools rather than pedagogical approaches.

Detection tools require no training. A structured conversation framework requires understanding what AI can and cannot do. Building AI-resistant assignments requires rethinking how you assess learning. All of that takes time and support that most teachers haven't received.

Frequently Asked Questions

Start with a structured conversation that targets the cognitive process of writing, not the tool used. Ask the student to walk through the structure of their argument, describe what was hardest about the assignment, explain specific vocabulary choices, and write a continuation paragraph in front of you. These questions reveal genuine engagement with the material in a way a detection score cannot.

Ask the student to defend their work verbally. Request an explanation of complex vocabulary. Ask them to describe the specific struggle of writing the paper. Then compare a live in-class writing sample to the submitted work. A student who actually wrote the paper can pass all of these tests. A student who only prompted an AI cannot reconstruct the cognitive experience of writing.

Look for hallucinated citations that don't exist when checked, a sudden and dramatic shift from the student's historical writing voice, arguments that are generic and unconnected to specific class content, vocabulary the student cannot explain in a follow-up conversation, and a complete absence of any personal perspective or local detail that only a student in the actual class would know.

Use the situation as a teaching moment. If you cannot prove AI use, issuing a zero based on algorithmic suspicion alone is unjust and likely to damage your relationship with a student who may be innocent. Instead, establish explicit expectations for process documentation on future assignments and make the next assignment AI-resistant by design.

Require hyper-local knowledge: references to specific class discussions, local community events, or personal experiences that an LLM cannot access. Build in process requirements: outlines, drafts, annotated bibliographies submitted alongside the final paper. Include a required oral reflection where students discuss their writing choices. An AI cannot produce local knowledge or attend a reflection conversation.

Sources

- Thesify.ai. How Professors Detect AI Writing: 2026 Guide. 2026. thesify.ai

- Structural Learning. AI and Academic Integrity. 2026. structural-learning.com

- OECD. Digital Education Outlook 2026. 2026. oecd.org

- Lande, John. Worried About Students Cheating with AI? Here Are Some Smart Ways to Respond. Indisputably. January 2026. indisputably.org

- Murugan, Vee. If We Don't Teach Students How to Use A.I., We Teach Them How to Cheat. The Educator's Room. March 2026. theeducatorsroom.com

- University of Utah. AI Tools for Teaching and Learning. 2024/2026. cte.utah.edu

- MIT Sloan EdTech. AI Detectors Don't Work. Here's What to Do Instead. 2024/2026. mitsloanedtech.mit.edu

- OpenEduCat. AI Academic Integrity Guide for Schools. 2026. openeducat.org

Defending Academic Integrity in 2026

Handling AI denial is one situation. Building a classroom where it comes up less often is the goal. The AI Literacy mini-course covers assessment redesign, transparent AI use policies, and how to shift the conversation from enforcement to education. Free. No email required.
