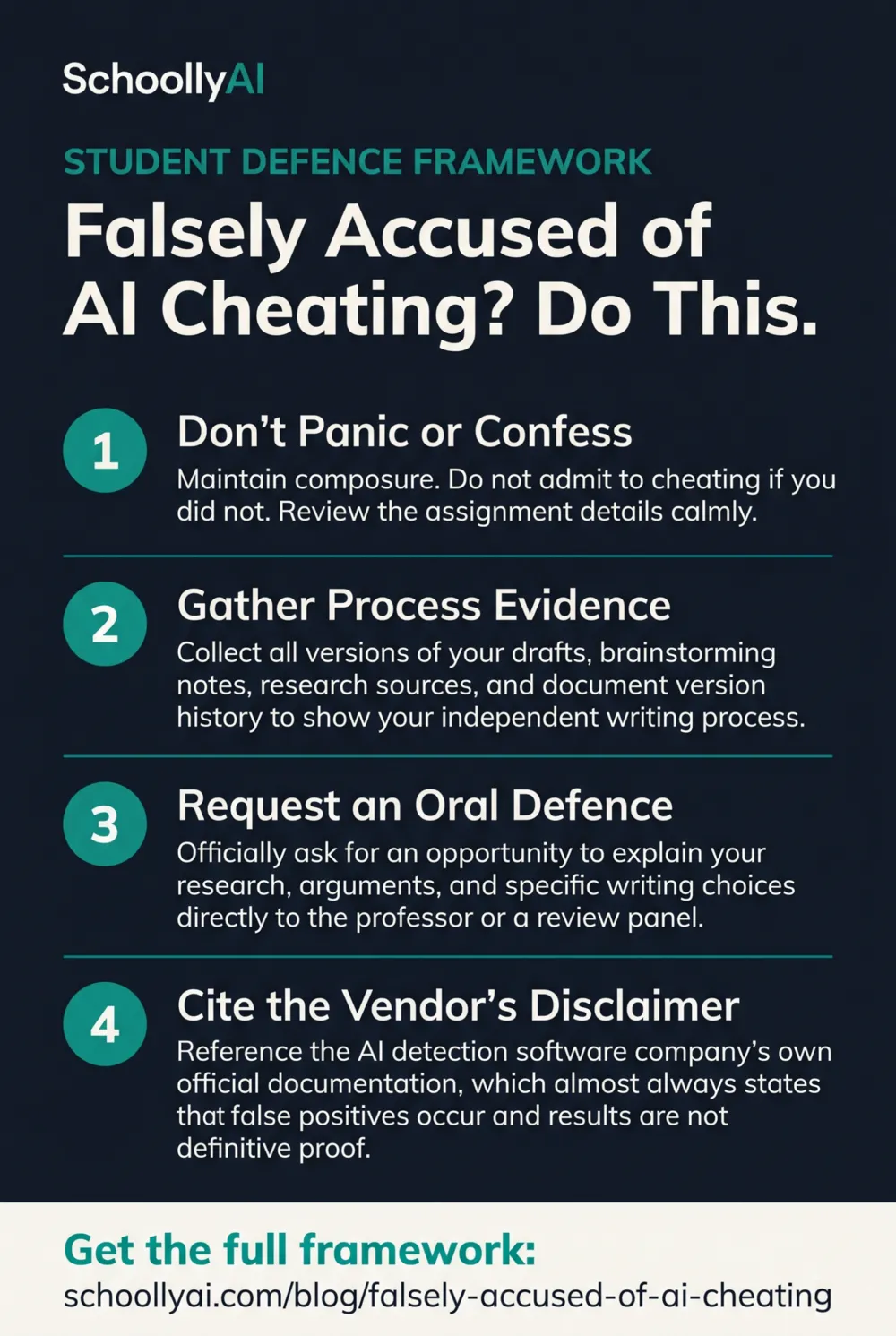

Falsely Accused of AI Cheating? Do This

If you have been falsely accused of AI cheating, the worst thing you can do is respond emotionally or confess to anything. The second worst thing is to wait. AI detection scores are probabilistic estimates, not evidence of guilt, and the vendors who build these tools say so in their own documentation. This is your step-by-step framework for building a defence that works. See also the companion post on the broader problem: AI Detectors Are Failing Honest Students.

- AI detection scores are probabilistic estimates, not proof of cheating. Turnitin's own documentation says scores should not be the sole basis for disciplinary action.

- Do not confess to using Grammarly or any editing tool as a way of acknowledging something minor. Administrators often treat this as a blanket admission of AI use.

- Gather process evidence immediately: Google Docs version history, timestamped drafts, handwritten notes, and browser search histories.

- Request an oral defence. A student who genuinely wrote their work can answer questions about it. An AI cannot attend that conversation.

- Bring the vendor's own disclaimer to your appeal meeting, printed and highlighted.

A methodical, evidence-first response is more effective than an emotional one. This framework works because it answers the question a detector cannot. Detectors estimate statistical patterns in finished text. Process evidence documents the work that produced that text.

The Burden of Proof Has Quietly Shifted

Historically, academic institutions had to prove a student cheated. That burden has quietly reversed in the three years since AI detection tools became standard. Algorithmic scores now function as de facto accusations, and the student is expected to prove a negative: that they did not use AI.

This is not just unfair in the philosophical sense. It is scientifically backwards. Independent research has consistently found false positive rates of 10% to 20% on authentic human writing. At those rates, a school that runs these tools on the work of 1,000 students could generate between 100 and 200 false accusations per year, according to Structural Learning's 2026 evidence overview. Not accusations of suspicion. Formal academic misconduct charges.
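The 100-to-200 range is straightforward multiplication. The sketch below assumes that one genuine, human-written submission per student is scanned each year and that every flag becomes an accusation. Both are simplifying assumptions for illustration, and the function name is hypothetical.

```python
def expected_false_flags(honest_submissions: int, false_positive_rate: float) -> float:
    """Expected number of genuine submissions wrongly flagged as AI-written.

    Simplifying assumption: every submission has the same chance of being
    wrongly flagged (the false positive rate).
    """
    return honest_submissions * false_positive_rate


# Assumption: 1,000 students, one scanned human-written submission each per year.
students = 1_000
for rate in (0.10, 0.20):  # the 10% to 20% range cited in the text
    flags = expected_false_flags(students, rate)
    print(f"{rate:.0%} false positive rate -> about {flags:.0f} false flags per year")
```

Because the expected count scales linearly, a school that scans several assignments per student each year would see proportionally more wrongful flags.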

One particularly troubling pattern has emerged in university disciplinary hearings. Some institutions are hiring technical "experts" to retroactively engineer ChatGPT prompts designed to generate essays that resemble the accused student's work, then using the similarity as after-the-fact evidence of guilt. This fundamentally misunderstands how generative AI works. An LLM can be coerced into replicating almost any text if prompted specifically enough. It proves nothing about the original author.

The situation is alarming enough that legal scholars are beginning to take notice. At the University of Florida, AI-specific honor code violations went from zero in 2023 to 66 reported cases in Spring 2025 alone, according to public records reported by The Independent Florida Alligator in April 2026. Not all of those cases involved genuine cheating.

Step 1: Do Not Panic and Do Not Confess to Anything Minor

The impulse when accused is to soften the confrontation by acknowledging something small. "I used Grammarly to clean up my grammar." "I ran it through spell-check." "I asked ChatGPT to explain one concept and then wrote about it myself." These are all reasonable, commonly accepted practices. They are also how a lot of students walk into formal misconduct charges.

Administrators who are unfamiliar with how AI tools work, or who are operating under pressure to take the detection score seriously, frequently treat any admission of any AI-adjacent tool use as a blanket confession to AI-generated writing. Unless your institution's policy explicitly permits Grammarly, do not volunteer that you used it. If asked directly, give a direct and honest answer. But do not lead with it as a concession.

Stay calm in every written and verbal communication. Accusatory emails will hurt your case. Professional, factual responses that request documentation and a formal hearing will help it.

Step 2: Gather Process Evidence Immediately

A linguistic argument will not clear you. Your writing style is not evidence of authorship. What clears a false AI accusation is documented proof of cognitive effort over time, and that means process evidence.

Start here. Open your Google Doc and go to File, then Version History, then See Version History. You will see every edit, every revision, every paragraph that was added or deleted, all timestamped. Export this history or screenshot it thoroughly. This is your strongest single piece of evidence, because it shows a human drafting process over hours or days. Text pasted in from an AI tool shows up as large blocks appearing all at once, not as gradual revision.

If you have physical notes, photograph them with a timestamp. Photograph your handwritten outline. Look back through your browser history and screenshot the research sessions that correspond to the dates you were working on the paper. Check your notes app, your email drafts, anything with a timestamp that shows you engaging with the material before the paper existed.

The Process Evidence Audit

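Work through this checklist before you send a single email:

- Google Docs version history, screenshotted with the timestamps visible

- Successive rough drafts, with their file dates

- Handwritten notes and outlines, photographed

- Annotated bibliographies or research notes

- Browser history showing research sessions on the dates you worked on the paper

- Notes app entries, email drafts, and anything else timestamped that predates the finished paper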

The goal is to document a cognitive process that happened over time. AI generates text in seconds. A student who worked on a paper over several days leaves a different evidence trail. Your job is to make that trail visible.
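If you copy the revision timestamps from your version history into a list, a short script can summarise how long and how often you worked. This is a hypothetical helper, not a Google Docs feature. The timestamps are invented for illustration, and the 60-minute gap used to separate working sessions is an arbitrary assumption you can change.

```python
from datetime import datetime


def summarise_trail(timestamps: list[str], session_gap_minutes: int = 60) -> dict:
    """Summarise hand-copied revision timestamps into days, sessions and span.

    Hypothetical helper. Timestamps are ISO 8601 strings such as
    "2026-03-02T19:15". A gap longer than session_gap_minutes between
    consecutive edits is treated as the start of a new working session.
    """
    if not timestamps:
        raise ValueError("need at least one timestamp")
    times = sorted(datetime.fromisoformat(t) for t in timestamps)
    sessions = 1
    for earlier, later in zip(times, times[1:]):
        if (later - earlier).total_seconds() > session_gap_minutes * 60:
            sessions += 1
    return {
        "first_edit": times[0],
        "last_edit": times[-1],
        "working_days": len({t.date() for t in times}),
        "sessions": sessions,
        "span_hours": (times[-1] - times[0]).total_seconds() / 3600,
    }


# Hypothetical timestamps copied by hand from a version history.
trail = [
    "2026-03-02T19:15",
    "2026-03-02T19:40",
    "2026-03-02T21:05",
    "2026-03-04T16:00",
    "2026-03-05T10:30",
]
summary = summarise_trail(trail)
print(
    f"{summary['sessions']} sessions over {summary['working_days']} days, "
    f"spanning {summary['span_hours']:.1f} hours"
)
```

The screenshots remain the evidence. A summary like this only makes the timeline easier for a reviewer to follow.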

Step 3: Request an Oral Defence

An oral defence is the most effective tool available to a falsely accused student, and it is underused because most students don't know they can request one.

Send a formal email to your instructor or academic integrity office. Keep it simple: you wrote the work yourself, you are prepared to demonstrate this, and you are formally requesting a meeting to explain your writing process and defend your arguments. Do not be combative. Be factual and direct.

In that meeting, be ready to walk through the structure of your argument from memory, explain why you made specific vocabulary choices, and discuss what was most difficult about the assignment. If you wrote the paper, you can answer these questions. If you did not, you cannot. That asymmetry is what makes an oral defence more reliable than any detection score.

Expect them to point to a complex sentence and ask you to explain it in simpler language. Expect to be asked which sources shaped your thinking. If they ask you to write a continuation paragraph on the spot, write it. The live comparison between your in-session writing and your submitted work is powerful evidence.

For the teacher side of this interaction, see Student AI Denial vs. Evidence: A Teacher's Framework, which covers exactly what a good teacher should be asking in this conversation.

Step 4: Cite the Vendor's Own Disclaimer

This is the move most students and parents don't know to make, and it is often the most effective one in a formal appeal.

Turnitin's official documentation states explicitly that the AI detection tool "should not be used as the sole basis for adverse actions against a student" because it may misidentify both human-written and AI-generated text. Print that page. Highlight that sentence. Bring it to your meeting.

You are not attacking the technology. You are citing the company's own published limitations and pointing out that the school's process has not followed the vendor's stated guidelines. This shifts the conversation from "did you cheat" to "has the school followed its own process correctly." That is much stronger ground to stand on.

The same disclaimer logic applies to GPTZero, Copyleaks, and other tools. Every major AI detection platform publishes caveats about false positives. Find out which tool was used in your case, locate its published limitations, and bring that documentation.

What Not to Do

Do not run your paper through an "AI humanizer" tool to reduce the detection score after the fact. This will almost certainly make your situation worse. The tool changes the text, which means the version you submit as "original" no longer matches your drafts and version history. It also introduces new phrasing you didn't write, which can be identified in a close reading.

Do not threaten legal action in your first communication. Even when the accusation is genuinely wrong and you are understandably furious, leading with legal threats puts the institution into defensive mode and makes resolution harder. Escalate to legal advice if the process fails, but start with the formal appeal process.

Do not accept a resolution that includes any admission of guilt, even a minor one softened into wording like "the matter is resolved," without understanding exactly what you are signing. Admissions can stay on your academic record and follow you into graduate school or professional licensing.

The Numbers Behind False Accusations in 2026

Understanding why this is happening at scale helps students and parents respond without assuming bad faith on the teacher's part. Most teachers using AI detection tools are not trying to harm their students. They are working with a technology that is genuinely unreliable, in an institutional system that has not caught up to the research.

| Finding | Statistic | Source |
|---|---|---|
| Institutions using formal AI detection in disciplinary workflows | 60%+ | Thesify.ai, 2026 |
| TOEFL essays flagged as AI-written by at least one major detector | 97.8% (89 of 91) | Stanford HAI, 2024 |
| False positive rate on authentic human writing | 10% to 20% | Weber-Wulff et al., 2024 |
| Probability that a student with 100 assignments is wrongly flagged at least once, at a 1% false positive rate | 63% | Reddit r/academia statistical analysis, 2024 |
| Detection accuracy on AI-generated text after manual editing | Drops to 60% to 80% | Thesify.ai, 2026 |

These numbers matter in your appeal because they establish that a false positive is not a remote theoretical possibility. It is a documented, statistically probable event that occurs across every school using these tools. Your case is not unusual.
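The 63% figure in the table follows from a standard probability formula: the chance of at least one wrong flag is one minus the chance of none. The sketch below assumes each assignment is an independent check with the same 1% false positive rate. That is an idealisation, since flags on the same writer's work are unlikely to be fully independent. The function name is hypothetical.

```python
def prob_flagged_at_least_once(assignments: int, false_positive_rate: float) -> float:
    """P(at least one false flag) = 1 - (1 - rate) ** assignments.

    Assumes every assignment is an independent check with the same
    false positive rate.
    """
    return 1 - (1 - false_positive_rate) ** assignments


p = prob_flagged_at_least_once(100, 0.01)
print(f"{p:.1%}")  # 63.4%
```

Under these assumptions, even a detector far more accurate than the 10% to 20% rates reported above would be more likely than not to wrongly flag an honest student at some point across 100 assignments.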

Frequently Asked Questions

What should I do if I am falsely accused of AI cheating?

Do not panic and do not confess to minor AI use. Immediately gather process evidence: Google Docs version history, timestamped drafts, handwritten notes, and browser search histories. Then request an oral defence where you explain your writing process directly to the instructor.

Can a school expel a student based on a Turnitin AI score alone?

Turnitin's own documentation states the tool should not be used as the sole basis for adverse actions against a student. Using a detection score alone to expel a student ignores the vendor's explicit warning about false positives. Students should cite this disclaimer directly in their appeal.

What counts as process evidence?

Process evidence includes Google Docs version history exports showing all edits and timestamps, successive rough drafts, handwritten notes or outlines, annotated bibliographies, and browser histories showing research activity. The goal is to document cognitive effort over time, which AI-generated text cannot produce.

Should I admit to using Grammarly?

It depends on your institution's policy. Do not volunteer that you used Grammarly or any editing tool unless directly asked. Some administrators treat any AI-assisted tool use as a blanket admission of guilt, even when the actual writing and thinking were entirely your own.

How do I request an oral defence?

Email your instructor or academic integrity office directly. State that you wrote the work yourself, that you are prepared to demonstrate this in person, and formally request an opportunity to explain your writing process and defend your arguments verbally. Keep the tone professional, not confrontational.

Sources

- Turnitin. Using the AI Writing Report. Guide. 2025/2026. guides.turnitin.com

- Structural Learning. AI and Academic Integrity. 2026. structural-learning.com

- Zou, James, et al. GPT Detectors Are Biased Against Non-Native English Writers. Stanford University HAI. 2024. hai.stanford.edu

- Thesify.ai. How Professors Detect AI Writing: 2026 Guide. 2026. thesify.ai

- The Independent Florida Alligator. AI in classrooms: Reports of academic misconduct surge. April 2026. alligator.org

- Cambridge University Press. Academic Integrity in the Age of AI. 2026. cambridge.org

Defending Academic Integrity in 2026

The AI Literacy mini-course covers what AI detectors actually measure, how to design assessments that don't rely on them, and how to build a classroom culture where honest work is protected. Free. No email required.
