How Schools Are Quietly Ditching AI Detection Tools

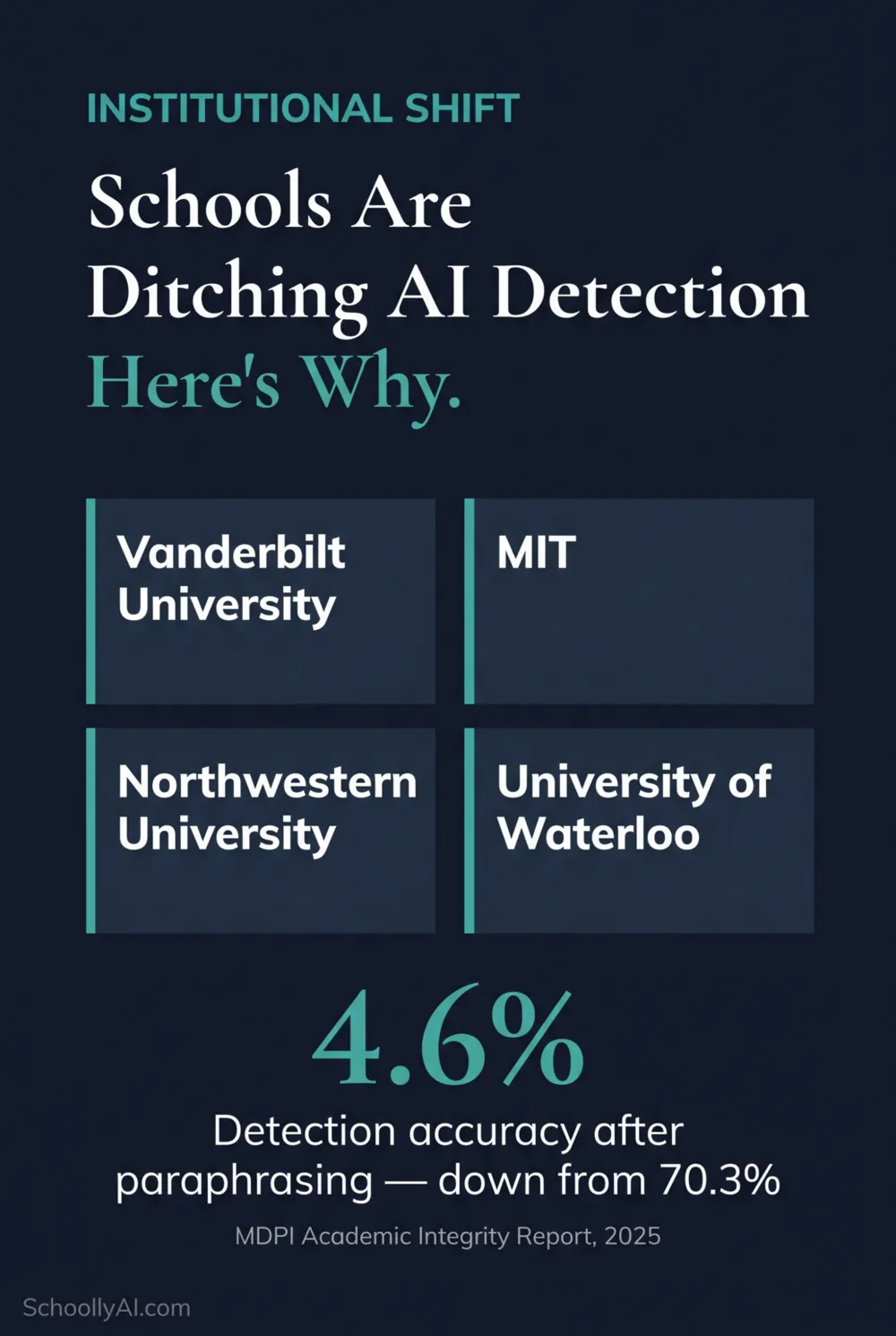

Vanderbilt University ran the math. At a 1% false positive rate applied to 75,000 annual paper submissions, its detection tool would be expected to wrongly flag about 750 papers per year, each one a potential academic misconduct charge against an honest student. That single calculation drove a formal policy decision to disable AI detection entirely. MIT, Northwestern, and the University of Waterloo followed. Students who have been wrongly flagged at schools that haven't made that call yet need to move fast and assemble process evidence such as outlines and drafts. For K-12 school leaders, the question is what these institutional decisions mean for the schools that haven't done the calculation yet.

- Vanderbilt, MIT, Northwestern, and the University of Waterloo have all formally disabled AI detection functionality.

- Detection accuracy dropped from 70.3% to 4.6% after text was processed through a paraphrasing model, according to a 2025 MDPI report. The tools are easily bypassed by students who know how.

- 71% of teachers report significant added administrative burden trying to determine whether student work is authentic, according to the Center for Democracy and Technology, 2025.

- 86% of educational organizations now use generative AI tools, the highest adoption rate of any global industry, according to Coursera's 2026 report. Policing output is not a sustainable strategy at that saturation level.

- The K-12 sector has not kept pace with higher education's shift. Most district IT departments still have detection enabled by default, placing the burden of interpretation on individual teachers.

The institutional decision to disable AI detection is increasingly driven by internal calculations about false positive rates and legal exposure, not by a policy position on AI use itself.

The Vanderbilt Calculation: Why Math Ended the Detection Era

The cleanest argument against AI detection in academic integrity processes is not philosophical. It is arithmetic.

Vanderbilt administrators applied a conservative 1% false positive rate to their annual submission volume of 75,000 papers. The result: roughly 750 honest students wrongly flagged, and exposed to formal misconduct charges, every year. Not a worst case. Not a theoretical edge. An expected outcome, baked into the math of using the tool at all.
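As a rough sketch of that arithmetic in Python: the 75,000 submissions and the 1% rate come from the figures above, while the function name `expected_false_flags` and the simplifying assumption that every submission is honest human work are illustrative, not Vanderbilt's published method.

```python
def expected_false_flags(submissions: int, false_positive_rate: float) -> float:
    """Expected number of human-written papers wrongly flagged as AI-generated.

    Assumes (for illustration only) that every submission is human-written,
    so every flag the detector raises is a false positive.
    """
    if submissions < 0:
        raise ValueError("submissions must be non-negative")
    if not 0.0 <= false_positive_rate <= 1.0:
        raise ValueError("false_positive_rate must be between 0 and 1")
    return submissions * false_positive_rate


# Figures cited in the article: 75,000 papers per year, 1% false positive rate.
annual_false_flags = expected_false_flags(75_000, 0.01)
print(round(annual_false_flags))  # 750
```

A school can substitute its own submission volume and the false positive rate its vendor reports to run the same check.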

Their published policy statement, released in 2024, was direct: "Based on this, we do not believe that AI detection software is an effective tool that should be used. Moving forward, with Turnitin's AI detection tool disabled, instructors should communicate with their students early about this. Many students are open to discussion about using AI, what is allowable or not."

That statement is worth keeping. It is directly citable in any school policy review that needs institutional precedent for disabling detection. It says what many administrators already know but haven't said out loud.

Detection tools produce false positives at these rates because of two statistical properties they measure: perplexity (how predictable the word choices are) and burstiness (how much sentence length and structure vary). Formal, rubric-compliant student writing shares both properties with AI output. The U.S. Constitution fails detection tests for the same reason.

Which Institutions Have Moved and When

The institutional shift is not a rumor. It is documented and accelerating.

| Institution | Action | Date |
|---|---|---|
| Vanderbilt University | Formally disabled Turnitin AI detection; published policy statement | 2024 |
| Massachusetts Institute of Technology | Disabled AI detection features; shifted to process-based assessment | 2024/2025 |
| Northwestern University | Discontinued use of AI detection in disciplinary workflows | 2025 |
| University of Waterloo | Formally discontinued all Turnitin detection functionality | September 2025 |

These are not small institutions hedging on a pilot program. These are research universities with the legal and administrative infrastructure to absorb the decision and the reputational weight to make it stick as precedent. When MIT concludes that AI detection is not fit for disciplinary use, that conclusion matters to every institution below it in the hierarchy that is still relying on the same tools.

The University of Waterloo's decision in September 2025 is the most recent and the most formal. Their Academic Vice-President's office published the policy change with explicit instructions for instructors: disable detection, communicate expectations to students directly, and rely on pedagogical conversation rather than software dashboards.

Why Now: What Finally Broke the Dam

Three things converged to produce this shift, and they arrived roughly simultaneously.

The first is the accuracy collapse under real conditions. A 2025 MDPI academic integrity report found that detection accuracy dropped from 70.3% to just 4.6% when text was processed through a paraphrasing model. That is not a marginal decline. It means the tools work adequately against a student who doesn't know they exist and fail almost completely against a student who knows about a free paraphrasing app. The students being caught are disproportionately the ones who aren't cheating or who aren't sophisticated enough to bypass the tool.
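A minimal sketch of why paraphrasing shifts the burden onto honest students. The 70.3% and 4.6% figures and the 1% false positive rate come from the article; treating "accuracy" as the share of AI-written papers that get flagged, the 75,000-paper pool, and the 10% share of AI-written papers are hypothetical assumptions chosen only to make the arithmetic concrete.

```python
def flag_breakdown(total, ai_share, detection_rate, false_positive_rate):
    """Split a detector's flags into correct and wrongful ones.

    Returns (true_flags, false_flags, wrong_share), where wrong_share is the
    fraction of all flagged papers that were actually human-written.
    """
    ai_papers = total * ai_share
    human_papers = total - ai_papers
    true_flags = ai_papers * detection_rate            # AI papers caught
    false_flags = human_papers * false_positive_rate   # honest papers flagged
    flagged = true_flags + false_flags
    wrong_share = false_flags / flagged if flagged else 0.0
    return true_flags, false_flags, wrong_share


TOTAL = 75_000    # hypothetical pool, borrowed from the Vanderbilt volume
AI_SHARE = 0.10   # hypothetical: 10% of papers are AI-written
FPR = 0.01        # 1% false positive rate, as in the Vanderbilt calculation

for label, rate in [("unparaphrased", 0.703), ("paraphrased", 0.046)]:
    caught, wrongful, wrong_share = flag_breakdown(TOTAL, AI_SHARE, rate, FPR)
    print(f"{label}: {caught:.0f} AI papers caught, "
          f"{wrongful:.0f} honest papers flagged, "
          f"{wrong_share:.0%} of flags wrongful")
```

Under these assumptions the number of honest papers flagged stays constant while the number of AI papers caught collapses, so wrongful flags go from a small minority of all flags to roughly two-thirds of them. Different assumed shares change the exact percentages but not the direction.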

The second is legal and ethical exposure. The documented bias against ESL and neurodivergent students is increasingly being viewed through the lens of anti-discrimination law, not just pedagogy. Institutions with legal counsel are looking at systematic false positive rates against specific student populations and recognizing that the liability exposure is real. The Northern Illinois University Center for Innovative Teaching and Learning flagged this directly in 2024: deploying tools that produce algorithmically biased results against protected student populations creates potential violations of student privacy law and anti-discrimination mandates.

The third is the administrative burden. The Center for Democracy and Technology found in 2025 that 71% of teachers reported significant added workload trying to determine whether student work was authentic. That is time pulled directly from instruction. When detection tools generate false positives at scale, the appeals process consumes staff hours at a rate that schools cannot sustain. Several institutions concluded that the cost of running the detection system exceeded its benefit.

| Finding | Statistic | Source |
|---|---|---|
| Detection accuracy after text processed through paraphrasing tool | 4.6% (down from 70.3%) | MDPI Academic Integrity Report, 2025 |
| Teachers reporting significant added admin burden from detection | 71% | Center for Democracy and Technology, 2025 |
| Educational organizations using generative AI tools | 86% | Coursera AI in Higher Education, 2026 |
| K-12 students using AI for homework despite strict bans | 41% report classmates doing so | Gallup / Walton Family Foundation, 2026 |
| Students receiving clear guidance on their school's AI policy | Only 22% | Center for Democracy and Technology, 2025 |

That last figure deserves attention. Only 22% of students say they've received clear guidance on what their school's AI policy actually permits. Schools are running detection against a student population that largely doesn't know the rules. That combination produces exactly the adversarial, litigious dynamic the institutions above are trying to exit.

The K-12 Gap: Why This Hasn't Reached Most Schools Yet

The policy shift at elite universities has not translated to K-12. The operational reason is simple: in most districts, detection tools are enabled by default in the Learning Management System, and no administrator has made an active decision to turn them off. The default persists not because anyone decided detection was a good idea. It persists because no one has made the decision to change it.

That leaves individual classroom teachers holding the full weight of a broken system. Teachers in K-12 forums describe exactly the dynamic the research predicts: pressure from administration to use the detection tool, simultaneous advice to tread carefully when accusing students, aggressive parent pushback when a flag is issued, and no training on what the percentage score actually means or how to respond to it appropriately.

Jill Kowalchuk, Manager of AI Literacy at the Alberta Machine Intelligence Institute, put the core issue plainly in 2025: "We can't think about plagiarism in the ways that we traditionally did in education, because AI presents new challenges. I think it is fundamentally shifting the way that we think about assessment. It's less of a question of 'How do we catch these kids cheating?' and instead, 'How do we better create or craft assessment strategies to actually support student learning?'"

That question is the right one. But it requires a decision at the administrative level, not just the classroom level. A teacher who has disabled detection in their own gradebook workflow but whose school still uses it institutionally is still exposed to the same false positive escalation when a parent or a colleague uses the score to initiate a formal complaint.

What to Do Instead: The Practical Transition for K-12

The research recommendation is consistent across all tier-one sources: shift from policing output to authenticating process. These are the practical moves that replace detection in a K-12 context.

Require process documentation as part of the grade. Outlines, annotated bibliographies, and draft histories submitted alongside the final paper make the writing process visible. If a student's process documentation is absent or inconsistent with their final paper, the grade reflects the missing process, not an unproven accusation of AI use. This sidesteps the detection debate entirely.

Use supervised in-class writing as a baseline. One early supervised writing task per semester, kept on file, gives you a reference point for every student's authentic voice and ability level. When out-of-class work diverges dramatically from that baseline in style, vocabulary, and structural sophistication, that divergence is human evidence, not algorithmic inference.

Design assignments that AI cannot complete convincingly. Hyper-local assignments requiring specific classroom references, community knowledge, or personal experience sit outside an LLM's training data. An assignment asking a student to connect a global policy issue to something specific that happened in the last week of class cannot be completed by AI because the AI wasn't in your class. That design choice reduces the incentive to use AI dishonestly without requiring any enforcement mechanism.

Update the policy language and communicate it clearly. Only 22% of students have received clear guidance on what their school's AI policy permits. A policy that students don't understand is a policy that produces accidental violations and successful appeals. Plain language statements of what is permitted, what requires citation, and what is prohibited by assignment type are the foundation of any enforceable approach.

The institutions that have disabled detection share a common transition: from software-based enforcement toward process documentation, baseline writing, and policy clarity.

The Expert Consensus

The research position across independent scholars, international bodies, and institutional policy offices has converged. The Vanderbilt policy statement is the most direct: detection software is not an effective tool and should not be used. The University of Waterloo reached the same conclusion through the same analysis.

The educational technology analysis from Techdirt in 2026 named the structural problem with using detection as a quality standard: "If algorithms start deciding what 'human writing' looks like, will education reward deeper thinking, or simply writing that appears human to a machine?" That is the actual consequence of building assessment around detection scores. Students learn to optimize for algorithmic clearance, not for intellectual quality.

CDT, RAND, OECD, and UNESCO have all documented why punitive, detection-first approaches damage the teacher-student relationship without reducing AI use. Schools that want to move away from detection need a policy structure grounded in Postplagiarism principles rather than surveillance.

Frequently Asked Questions

Why are universities disabling AI detection tools?

The primary reasons are false positive rates that produce wrongful misconduct charges at scale, documented bias against ESL and neurodivergent students, legal and privacy liability, and the finding that detection accuracy drops from 70.3% to 4.6% once text is processed through a paraphrasing tool. Institutions including Vanderbilt, MIT, and the University of Waterloo concluded that the harm from false positives exceeded any benefit from detection.

Which universities have disabled AI detection?

Vanderbilt University, MIT, Northwestern University, and the University of Waterloo have all formally disabled AI detection functionality. The University of Waterloo discontinued all Turnitin detection features as of September 2025. These institutions cite false positive rates, ESL bias, and procedural justice concerns as the reasons for the decision.

What should K-12 schools do instead of relying on AI detection?

K-12 schools should shift to process-based assessment: requiring outlines, drafts, and annotated bibliographies alongside final submissions. Assignments should incorporate hyper-local content, specific classroom references, or personal experience that AI cannot generate. Supervised in-class writing baselines provide comparison evidence far more reliable than a detection score.

Are AI detectors reliable enough to use in disciplinary decisions?

No. Independent research from 2025 found that AI detection accuracy dropped from 70.3% to 4.6% after text was processed through a paraphrasing model. Turnitin's own documentation states the tool should not be used as the sole basis for adverse actions. The technology is not fit for punitive disciplinary action.

Why does Vanderbilt's policy statement matter for other schools?

Vanderbilt's published policy statement provides institutional cover for other schools to make the same move. Their stated rationale, that detection software cannot scientifically prove AI authorship and that instructors should instead use pedagogical conversation, is directly citable in any school's policy review. It normalizes the decision and removes the assumption that disabling detection means endorsing cheating.

Sources

- Vanderbilt University. Guidance on AI Detection Tools. Office of the Provost. 2024. vanderbilt.edu

- University of Waterloo. AI Detection Policy Update. Academic VP Office. September 2025. uwaterloo.ca

- MDPI. Evaluating the Effectiveness and Ethical Implications of AI Detection Tools in Higher Education. 2025. mdpi.com

- Center for Democracy and Technology. AI in Schools Report. 2025. cdt.org

- Coursera. AI in Higher Education Report. 2026. coursera.org

- Gallup / Walton Family Foundation. K-12 AI Use Survey. 2026. waltonk12.org

- Northern Illinois University CITL. AI Detectors: An Ethical Minefield. December 2024. citl.news.niu.edu

- Kowalchuk, Jill. Quoted in Taproot Edmonton. Upper Bound focuses on AI literacy. May 2025. edmonton.taproot.news

- Structural Learning. AI and Academic Integrity. 2026. structural-learning.com
