AI Didn't Cheat. Your Student Did.

74% of higher education faculty report that students are actively using AI to write their assignments (College Board, February 2026). Only 40% of institutions have a formal policy in place to address it (LRDC, January 2026). The detection software does not work. The policy gap is real. This is the framework that does not require either.

- AI detectors measure statistical patterns in writing. A well-edited human essay can be flagged as machine-generated. A humanised machine essay can score 0% AI. The score proves nothing in either direction.

- The oral defence is the most reliable verification tool available in 2026. A student who wrote the work can explain it. A student who did not cannot.

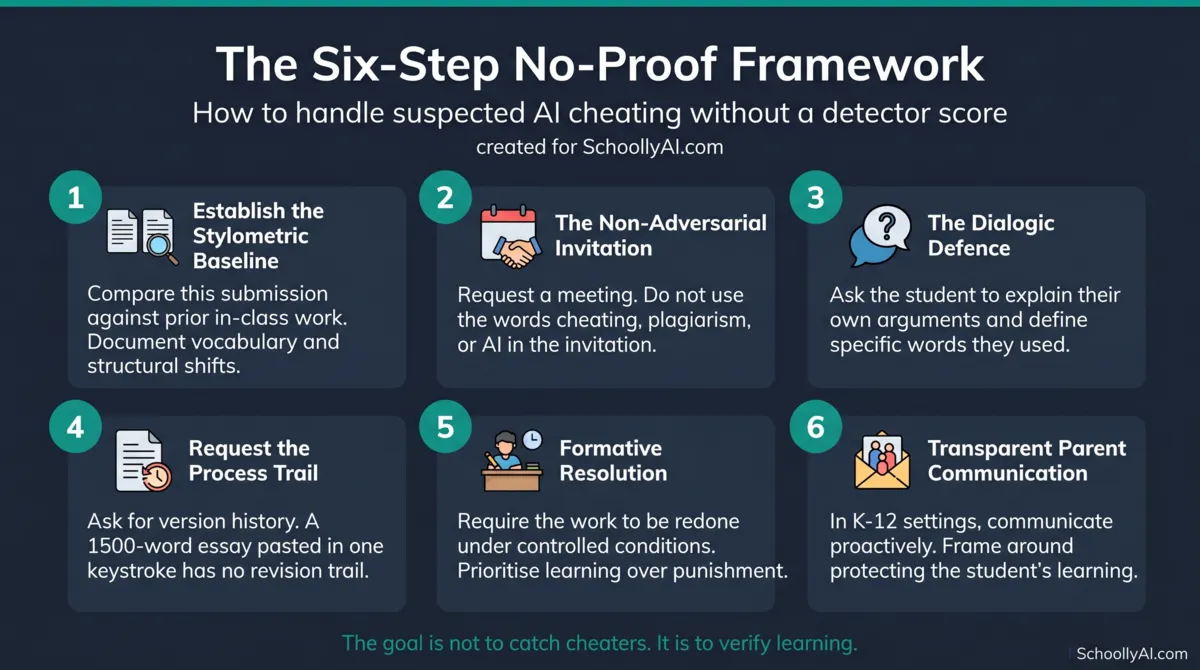

- The six-step no-proof framework moves from stylometric baseline to dialogic defence to formative resolution without requiring algorithmic evidence.

- Assignment design is the long-term fix. Tasks that require hyper-local context, a documented process trail, or an oral component are difficult to complete through AI bypass.

Why Detectors Are Broken

The institutional reflex when AI text generation arrived in classrooms was to deploy detection software. Run the submission through the tool. Get a percentage. Act on the number. That reflex has now been publicly and definitively discredited.

AI detectors work by measuring two properties of text: perplexity and burstiness. Perplexity measures how predictable the word choices are. Burstiness measures the variation in sentence length and complexity. Machine-generated text tends toward low perplexity and low burstiness because it is statistically optimised to produce smooth, predictable output. Human writing tends to be more variable.
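To make the two measures concrete, here is a minimal Python sketch. It is a toy illustration, not how any commercial detector is implemented: burstiness is approximated as the coefficient of variation of sentence length, and perplexity is computed under a simple unigram word model with add-one smoothing, where real tools would use a large language model. The function names and sample sentences are hypothetical.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def tokenize(text):
    """Lowercase the text and return its words."""
    return re.findall(r"[a-z']+", text.lower())


def sentence_lengths(text):
    """Split on ., ! or ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Coefficient of variation of sentence length (higher means more varied)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def unigram_perplexity(text, reference):
    """Perplexity of `text` under a unigram model fitted on `reference`.

    Add-one smoothing keeps unseen words from getting zero probability.
    Lower values mean the word choices are more predictable.
    """
    ref_counts = Counter(tokenize(reference))
    words = tokenize(text)
    if not words:
        return 0.0
    vocab_size = len(set(ref_counts) | set(words))
    total = sum(ref_counts.values())
    log_prob = sum(
        math.log((ref_counts[w] + 1) / (total + vocab_size)) for w in words
    )
    return math.exp(-log_prob / len(words))


uniform = "The cat sat down. The dog ran off. The bird flew away."
varied = "Stop. The dog ran all the way across the wide green field before anyone noticed."
print(burstiness(uniform), burstiness(varied))
```

Three sentences of identical length produce a burstiness of zero, while the one-word sentence followed by a long one scores much higher. Nothing in either measure looks at who wrote the text, only at how regular it is.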

The problem is that well-edited human writing behaves the same way. A student who drafts, revises, and polishes an essay removes inconsistencies and produces text with low perplexity and low burstiness. Internal audits published by academic laboratories in late 2025 documented false-positive rates above 30 percent for professional, human-authored nonfiction writing (Medium, 2026). The detectors were not identifying machine output. They were flagging editorial polish.
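A short worked calculation shows what a false-positive rate of that size would mean in practice. The sketch below is illustrative only. The 30 percent figure is the one quoted above for professional nonfiction and may not transfer directly to student work, and the class size, prevalence, and sensitivity values are hypothetical inputs chosen for the example.

```python
def expected_false_flags(honest_students, false_positive_rate):
    """Expected number of honest students a detector would wrongly flag."""
    return honest_students * false_positive_rate


def flag_precision(prevalence, sensitivity, false_positive_rate):
    """Share of flagged submissions that really are machine-written.

    prevalence: fraction of all submissions that are machine-written
    sensitivity: fraction of machine-written submissions the tool flags
    """
    true_flags = prevalence * sensitivity
    false_flags = (1 - prevalence) * false_positive_rate
    if true_flags + false_flags == 0:
        return 0.0
    return true_flags / (true_flags + false_flags)


# Hypothetical class of 30 students who all wrote their own work.
print(expected_false_flags(honest_students=30, false_positive_rate=0.30))

# Hypothetical: 20% of submissions are machine-written and the tool catches 90%.
print(round(flag_precision(prevalence=0.20, sensitivity=0.90,
                           false_positive_rate=0.30), 2))
```

Under these assumptions, a class of 30 honest writers yields an expected nine false flags, and fewer than half of all flagged submissions are actually machine-written. A flag on its own is closer to a coin toss than to proof.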

The bias problem is worse. Detection algorithms flag writing by EAL (English as an Additional Language) students as machine-generated in 61 to 70 percent of cases, because EAL students write in more formalised grammatical structures with less idiosyncratic vocabulary (r/ChatGPT, 2026). Using these tools in a diverse classroom is not a neutral act. It systematically disadvantages the students who follow the rules of English most carefully.

Students who want to cheat have already solved the detection problem. Feeding AI-generated text through a humaniser tool, which injects deliberate syntactical irregularity, consistently achieves a 0% detection score across Turnitin, ZeroGPT, Copyleaks, and GPTZero (TimelyGrader, 2026). A motivated student with fifteen minutes and a free tool is invisible to the software. UC San Diego Extended Studies formally deactivated its Turnitin detection module in April 2025, stating that academic integrity must now rely on clear guidelines, assignment design, and more hands-on grading practices rather than software surveillance (UC San Diego Extended Studies, 2025).

A 0% AI score is not evidence of human authorship. An 80% AI score is not evidence of misconduct. Both numbers are unreliable. For the full mechanical breakdown of why detectors fail, see AI Detectors and False Positives: What Teachers Need to Know.

What AI Cheating Actually Looks Like

Before the framework, a precise definition of AI cheating matters, because the debate around it is muddier than it needs to be.

AI did not make a decision. A student did. The tool did not weigh the academic integrity policy, decide to bypass the learning objective, and submit fraudulent work. A person did that. Starting from that framing keeps responsibility where it belongs and avoids the category error of treating this as a technology problem rather than a behaviour problem.

The boundary between legitimate and illegitimate AI use comes down to one question: does the tool extend the student's thinking, or does it replace it?

| Legitimate use | Illegitimate use |
|---|---|
| Using AI to brainstorm or generate an outline, then writing the actual prose independently | Submitting a fully AI-generated essay, with or without humaniser processing |
| Using AI as a Socratic tutor to clarify a concept before attempting the assignment | Using AI to summarise required reading, bypassing the reading and analysis entirely |
| Using AI for post-draft grammar and structural checks | Using visual AI tools during an exam to scan questions and generate answers |
| Using AI to process a dataset when interpretation is the learning objective | Failing to disclose AI use when disclosure is required by the assignment |

The confusion in educator forums comes from treating this as a binary. It is not. It is a spectrum, and the AIAS framework below gives it a structure that teachers can attach directly to assignment instructions.

One specific pattern worth naming: the humanisation loop. To avoid triggering false-positive flags on their legitimate human work, some students are now deliberately making their writing look more irregular. As one student described it: "Students are now forced to intentionally write worse, dumbing down their own logical, human prose, just to satisfy a machine's preference for messiness." (r/ChatGPT, 2026) This is a direct consequence of building a system on broken detection tools.

The AI Assessment Scale

The AI Assessment Scale (AIAS), updated in August 2024 by Leon Furze, Mike Perkins, Jasper Roe, and Jason MacVaugh, gives teachers a practical structure for defining what AI use is permitted on any given assignment (American Psychological Association, 2026). Rather than a blanket policy applied across all work, the AIAS links the permitted level of AI use to the specific learning objective of each task.

| Level | Name | What it means for students | When to use it |
|---|---|---|---|
| 1 | No AI | No AI tools at any stage. Assessment security is fully achievable here. | In-class handwritten exams, oral assessments, supervised lab reports. |
| 2 | AI Planning | AI may be used for brainstorming, outlining, and early research only. Human composition is required for all final output. | Major essays where drafting and argumentation are the learning objectives. |
| 3 | AI Collaboration | AI may be used for feedback and revision. Students must critically evaluate and manually refine the output. | Writing development tasks, iterative drafting assignments. |
| 4 | Full AI | No restrictions on AI generation. The learning objective focuses on curation, critical analysis, and prompt engineering. | Assignments evaluating AI literacy or comparing model outputs. |
| 5 | AI Exploration | Students and AI work together to find new approaches to the learning objective. | Project-based learning, design challenges, experimental units. |

The value of the AIAS is not the levels themselves. It is the act of attaching a level to every assignment and communicating it clearly to students before submission. When a student knows that a specific task is Level 1, the question of whether using AI constitutes misconduct has already been answered.

This removes the most common student defence: "I didn't know it wasn't allowed." It does not eliminate cheating. It eliminates ambiguity, which is what most institutional policy gaps run on.
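For teachers who keep assignment lists in a spreadsheet or learning management system, attaching a level can be as simple as one extra column. The Python sketch below shows one possible way to model it. The activity labels and the `MINIMUM_LEVEL` mapping are hypothetical, and treating the levels as strictly cumulative is a simplification made for this example rather than a claim about how the AIAS authors define them.

```python
from dataclasses import dataclass
from enum import IntEnum


class AIASLevel(IntEnum):
    NO_AI = 1
    AI_PLANNING = 2
    AI_COLLABORATION = 3
    FULL_AI = 4
    AI_EXPLORATION = 5


# Hypothetical activity labels mapped to the lowest level that permits them.
MINIMUM_LEVEL = {
    "brainstorming": AIASLevel.AI_PLANNING,
    "outlining": AIASLevel.AI_PLANNING,
    "feedback_on_draft": AIASLevel.AI_COLLABORATION,
    "generating_final_text": AIASLevel.FULL_AI,
}


@dataclass(frozen=True)
class Assignment:
    title: str
    level: AIASLevel

    def permits(self, activity: str) -> bool:
        """True if this assignment's level is at or above the activity's minimum."""
        return self.level >= MINIMUM_LEVEL[activity]


essay = Assignment("Term essay", AIASLevel.AI_PLANNING)
print(essay.permits("outlining"))
print(essay.permits("generating_final_text"))
```

The point of writing it down this way is the same as the point of the scale: the answer to "was this allowed?" is fixed before the work is submitted, not argued about afterwards.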

The Six-Step No-Proof Framework

A submission is on your desk. The vocabulary is too advanced for this student's baseline. The structure is formulaic. The argument is correct but oddly generic. The detector says 0%. You are professionally certain. You have no digital proof. Here is what to do.

Step 1: Establish the Stylometric Baseline

Before any contact with the student, build your evidence file. Compare the suspected submission directly against the student's previous in-class, handwritten, or low-stakes work where AI use was not possible.

You are looking for unexplained shifts in sentence complexity, punctuation habits, and vocabulary. A sudden appearance of words like "juxtaposition," "delve," or "tapestry" in a student who has never used them before is documentable. A uniform paragraph structure that bears no resemblance to the student's usual rhythm is documentable. The evidence is not a percentage score. The evidence is the contrast against the student's own historical performance.

Document these discrepancies in writing before the meeting. This protects you institutionally and keeps the conversation grounded in observable facts rather than suspicion. For a full vocabulary guide to AI writing patterns, see Words AI Uses Too Much: A Teacher's Blacklist.
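If the student's earlier work is available as typed text, a few lines of Python can help organise the comparison. This is a rough sketch under stated assumptions: the three features and the eight-letter cutoff for "advanced" vocabulary are arbitrary choices made for illustration, and the function names are hypothetical. The output is a set of notes for the evidence file, not a verdict.

```python
import re
from statistics import mean


def tokenize(text):
    """Lowercase the text and return its words."""
    return re.findall(r"[a-z']+", text.lower())


def profile(text):
    """Basic style features for one piece of writing."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = tokenize(text)
    if not sentences or not words:
        return {"avg_sentence_length": 0.0, "type_token_ratio": 0.0,
                "commas_per_sentence": 0.0}
    return {
        # Average number of words per sentence.
        "avg_sentence_length": mean(len(s.split()) for s in sentences),
        # Distinct words divided by total words (vocabulary variety).
        "type_token_ratio": len(set(words)) / len(words),
        # A crude stand-in for punctuation habits.
        "commas_per_sentence": text.count(",") / len(sentences),
    }


def new_vocabulary(baseline_texts, submission, min_length=8):
    """Long words in the submission that appear in none of the baseline samples."""
    known = set()
    for sample in baseline_texts:
        known.update(tokenize(sample))
    return sorted(
        {w for w in tokenize(submission) if len(w) >= min_length and w not in known}
    )


baseline = ["I like the book. It was good and fun."]
submission = "The juxtaposition of themes forms a rich tapestry."
print(profile(baseline[0]))
print(profile(submission))
print(new_vocabulary(baseline, submission))
```

A list of never-before-seen long words and a jump in average sentence length are exactly the kind of observable contrast described above. They still need a human reading, since a student's vocabulary can grow legitimately between assignments.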

Step 2: The Non-Adversarial Invitation

Invite the student to a private meeting. Do not use the words cheating, plagiarism, or AI in the initial message. The goal is to lower defensive barriers, not trigger them.

A recommended approach from the University at Albany: "Your recent submission represents a shift from your previous work. I would like to meet to discuss your writing process and how your ideas developed for this assignment." (University at Albany, 2026)

This language is accurate. You genuinely want to understand the writing process. That is the entire point of the next step.

Step 3: The Dialogic Defence

During the in-person meeting, ask the student to explain the specific arguments in their submitted work. Not the topic generally. The specific claims on the page, in their own words.

Questions that work: "Can you walk me through how you connected these two ideas in paragraph three?" "You used this term here. What does it mean in this context and why did you choose it?" "What was the most difficult part of building this argument, and where did you find the source you cited?"

A student who wrote the work, even with legitimate AI scaffolding at the planning stage, can discuss it. They know the intellectual journey. A student who outsourced the writing entirely will struggle quickly and visibly to explain the meaning of the sentences on the page. As one educator noted: "This approach is slower than pasting text into a detector. It is infinitely more accurate." (Structural Learning, 2026)

For a full script of dialogic defence questions, see How to Confront a Student About AI Cheating.

Step 4: Requesting the Process Trail

If the dialogic defence leaves serious doubt, ask for the process trail. Request access to the Google Docs version history, or ask for early handwritten brainstorm notes and drafts.

Genuine human writing is messy. It involves deletions, restructuring, pauses, and revisions visible in the document history. A 1,500-word essay that arrives in the document as a single paste action, with no revision history, tells you what you need to know without requiring a detector score (Norvalid, 2026).
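The same check can be expressed as simple arithmetic on the word count at each saved version. The sketch below assumes the word counts have been read off the version history by hand and typed into a list. It does not call any Google Docs API, and the 80 percent threshold is a hypothetical cutoff chosen for illustration.

```python
def largest_jump_share(word_counts):
    """Fraction of the final word count added in the single biggest revision.

    word_counts: total words in the document at each saved version, oldest first.
    """
    if not word_counts or word_counts[-1] == 0:
        return 0.0
    previous = 0
    biggest = 0
    for count in word_counts:
        biggest = max(biggest, count - previous)
        previous = count
    return biggest / word_counts[-1]


def looks_pasted(word_counts, threshold=0.8):
    """True if one revision accounts for at least `threshold` of the final text."""
    return largest_jump_share(word_counts) >= threshold


# Hypothetical histories: one essay built up over several sessions, one pasted in.
drafted = [0, 300, 700, 650, 1100, 1500]
pasted = [0, 1500]
print(looks_pasted(drafted), looks_pasted(pasted))
```

A student who drafted in another editor and pasted the result would trip the same check, which is why the process trail comes after the conversation in Step 3 and not before it.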

Step 5: Formative Resolution Over Punitive Escalation

If the evidence from steps one through four is clear, pursue the outcome that serves the learning objective rather than the one that satisfies an institutional punishment process.

A recommended framing: "Based on our conversation and the absence of a drafting process, this work does not demonstrate your understanding of the material. Because my goal is for you to learn this, I cannot accept this submission for credit. I am going to ask you to complete the assignment again, by hand, during office hours." (University at Albany, 2026)

This enforces a high standard. It addresses the misconduct directly. It ensures the learning objective is met. It avoids triggering a disciplinary process that depends on evidence you do not have and software that does not work.

Step 6: Transparent Parent and Guardian Communication

In K-12 settings, parent communication is not optional at this stage. In 2026, over 70% of parents express concern about AI in schools, but 50% are unaware whether their child's teachers are using AI tools at all (SchoolAI, 2026).

Frame the communication around the student's learning development, not rule violations. "I want to make sure your child is building the thinking skills that will carry them through exams and into post-secondary" opens a better conversation than "I believe your child cheated." For templates and specific scripts, see AI Academic Integrity: What to Say to Parents.

Assignment Design That Makes AI Less Useful Than Doing the Work

The six-step framework handles the situation after a suspicious submission arrives. The better investment is in assignment design that makes the situation less likely to arise in the first place.

The key finding from scholars Tricia Bertram Gallant and David Rettinger in their 2025 work The Opposite of Cheating is that the opposite of cheating is not compliance with rules. It is learning. Assessments should be designed so that bypassing the learning process is inherently difficult, detectable, or less efficient than doing the work (UC San Diego, 2026).

Hyper-local and experiential context. AI tools are trained on vast amounts of generalised global data. They cannot access what happened in your classroom last Tuesday, what the local newspaper reported this week, or what a specific student observed during a field trip. Assignments that require students to connect course concepts to a specific local event, an in-class discussion detail, or a personal lived experience are difficult to complete through AI because the model has no access to that information.

Process grading over product grading. Grade the evolution of the work, not only the final submission. Require students to submit brainstorm notes, early drafts, peer feedback responses, and revision documentation. A student who fabricated the final product has no authentic process trail. The absence of that trail is evidence without needing a detector.

The oral component. Attach an oral component to any high-stakes written assignment. It does not need to be a formal viva voce. A five-minute in-class explanation, a brief recorded video reflection, or a follow-up discussion question in the next class all serve the same purpose. If the student cannot speak to what they submitted, the written work carries less weight.

Critiquing the machine. Assign students to generate an AI response to a prompt and then identify its errors, fill its gaps, and extend its argument with their own reasoning. This converts the tool from a bypass mechanism into an object of critical analysis and directly teaches AI literacy. For specific templates, see How to Design AI-Resistant Assignments in 2026.

Tricia Bertram Gallant and David Rettinger on redefining cheating as a lack of learning and practical assessment redesign strategies. Source: Eric Mazur, 2026.

What Honest Students Are Experiencing

This conversation almost always focuses on students who are gaming the system. The students who are not deserve some attention.

From May to December of 2025, the percentage of high school students using AI for homework rose from 49% to 60% (Education Week, March 2026). The students who are not using it are doing more work, often for the same or worse grades, in an environment where they perceive the misconduct as going unaddressed. One student put it plainly: "Conscientious students know that some unknown number of students are using AI undetected and driving up expectations, and their grades, down. The most honest ones will detest you for your negligence." (r/Professors, 2026)

There is a separate problem for students who write well. Detection software flags polished, well-structured writing as machine-generated at false-positive rates above 30 percent. A student who has worked hard to produce clean, logical prose can be accused of cheating because their writing looks too good. This is an institutional failure with real consequences for real students.

The oral defence, properly used, protects these students as much as it catches dishonest ones. A student who produced excellent work independently can speak to it confidently and in detail. That conversation is its own verification.

Institutional approaches to academic integrity beyond detection software, grounded in the Five Pillars of Integrity. Source: LibGuides Exhibition, 2026.

A technical walkthrough of how AI-generated text is manually humanised to bypass detection software. Useful context for stylometric analysis. Source: Dr. Amina Kriukow, 2026.

FAQ

Use the six-step framework: establish a stylometric baseline from previous work, issue a non-adversarial meeting invitation without accusatory language, conduct a dialogic defence asking the student to explain specific arguments in their own words, request a process trail such as Google Docs version history, pursue formative resolution by requiring a redo under controlled conditions, and communicate with parents proactively in K-12 settings.

AI detectors measure perplexity and burstiness. Well-edited, polished human writing produces scores that detectors associate with machine generation. False-positive rates above 30 percent for professional human-authored nonfiction were documented in 2025. Detection algorithms flag EAL student writing as AI-generated in 61 to 70 percent of cases. See AI Detectors and False Positives for the full breakdown.

The AIAS is a five-level framework for defining permitted AI use on any given assignment. Level 1 prohibits all AI. Level 2 permits AI for planning only. Level 3 permits AI collaboration with human revision. Level 4 permits full AI generation. Level 5 is exploratory co-creation. Attaching a level to each assignment removes ambiguity about what constitutes misconduct on that task.

An oral defence asks the student to explain specific arguments from their submitted work in their own words. A student who wrote the work can discuss it. A student who outsourced it cannot explain the reasoning behind their own sentences. This is slower than a detector and more accurate. It protects honest students and gives teachers defensible evidence without broken software.

Hyper-local context requirements, documented process trails, and oral components all make AI bypass harder. AI models have no access to what happened in your classroom, in your community this week, or in a student's personal experience. Process documentation proves authorship through the creation trail without requiring a detector score. See AI-Resistant Assignment Design for specific templates.

Do not use the words cheating, plagiarism, or AI in the initial invitation. A recommended approach: "Your recent submission represents a shift from your previous work. I would like to meet to discuss your writing process and how your ideas developed for this assignment." This positions the meeting as a pedagogical conversation rather than an accusation.

Yes. Document the stylometric discrepancies between the submission and the student's baseline, the student's inability to explain their own arguments during an oral defence, and the absence of a drafting process trail. These form a defensible evidence file. Requiring the student to redo the assignment under controlled conditions is stronger than relying on a detector score.

Sources

- College Board. Faculty Express Near-Universal Concern That Student AI Use Undermines Original Writing and Critical Thinking. February 2026. collegeboard.org

- LRDC. Weekly AI in Higher Education Report. January 30, 2026. lrdc.pitt.edu

- Covey, K. Why AI Detectors Mislabel Human Writing: New Data for 2026. Medium. medium.com

- TimelyGrader. How I Fooled the Top AI Detectors Within 15 Minutes. 2026. timelygrader.ai

- UC San Diego Extended Studies. AI Detection Deactivation: Instructor Action Required and Next Steps. 2025. ucsd.edu

- American Psychological Association. Teaching Academic Integrity in the Era of AI. 2026. apa.org

- Gallant, T.B. and Rettinger, D. How to Teach in the Age of AI. UC San Diego Today, 2026. ucsd.edu

- University at Albany. Respond to Suspicions of Student Cheating with AI. 2026. albany.edu

- Norvalid. Authorship Authentication: A Reliable Way to Ensure Student Writing. 2026. norvalid.com

- RAND Corporation. More Students Use AI for Homework, and More Believe It Harms Critical Thinking. March 2026. rand.org

- Education Week. Students Are Worried That AI Will Hurt Their Critical Thinking Skills. March 2026. edweek.org

- SchoolAI. Parent Letter AI Policy: How to Communicate Classroom AI Use to Families. 2026. schoolai.com

- Structural Learning. AI and Academic Integrity: A Teacher's Guide. 2026. structural-learning.com

- University of Pittsburgh. Encouraging Academic Integrity. 2026. teaching.pitt.edu

- FutureEd. Legislative Tracker: 2026 State AI in Education Bills. 2026. future-ed.org
