AI Detector False Positives: What Teachers Need to Know

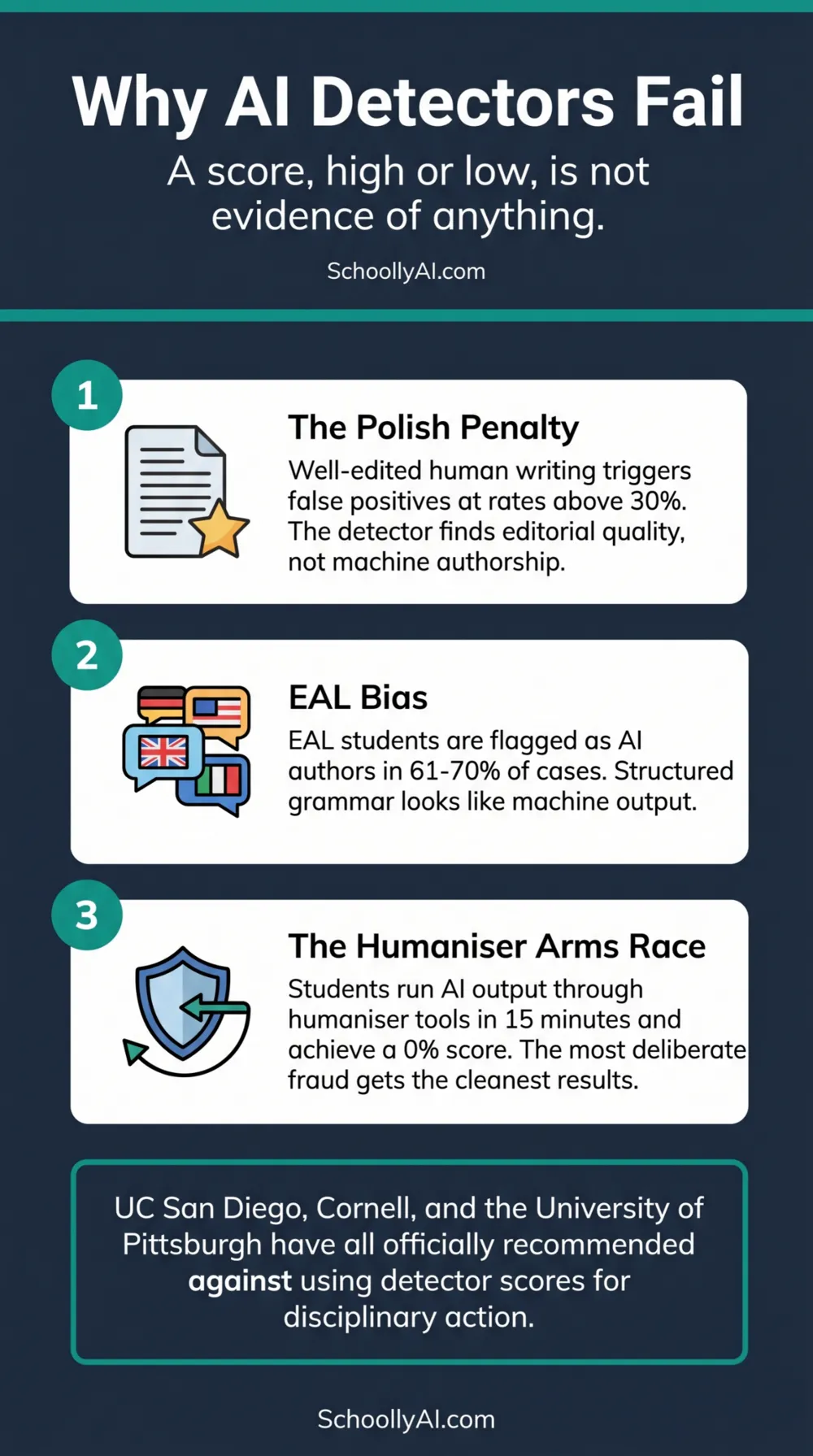

Internal audits published in late 2025 showed AI detector false-positive rates above 30% for professional, human-authored writing (Medium, 2026). Meanwhile, students who run genuine AI output through a humaniser tool consistently achieve a 0% AI score. The detectors are failing in both directions at the same time.

- AI detectors measure perplexity and burstiness. Both metrics have broken down as predictors of machine authorship.

- Well-edited human writing triggers false positives because it looks statistically like machine output after revision.

- Students writing English as an Additional Language (EAL) are flagged as AI authors at rates between 61% and 70%. Using detectors for discipline functionally penalises them for writing correct English.

- AI humaniser tools bypass every major detector within minutes. The students who are actively cheating are the least likely to be caught.

How Detectors Work

AI detection tools operate by measuring two statistical properties of text. The first is perplexity: how predictable each word choice is given what preceded it in the sentence. The second is burstiness: how much sentence length and structural complexity varies across a passage.
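As a rough illustration of the two metrics, the sketch below computes burstiness as the coefficient of variation of sentence length, and perplexity under a toy unigram model. The function names, the naive sentence splitter, and the unigram model are all assumptions made for this example. Real detectors are assumed to use much larger language models that condition on the preceding words, and their exact formulas are not described here.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def split_sentences(text):
    """Naive splitter: break after ., ! or ? when followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def tokenise(text):
    """Lowercase word tokens; punctuation is discarded."""
    return re.findall(r"[a-z']+", text.lower())


def burstiness(text):
    """Coefficient of variation of sentence length in words (higher = more varied)."""
    lengths = [len(s.split()) for s in split_sentences(text)]
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def unigram_perplexity(text, reference):
    """Perplexity of `text` under an add-one-smoothed unigram model of `reference`.

    Illustrative stand-in only: a unigram model ignores word order, whereas
    the perplexity described above depends on the preceding words.
    """
    ref_counts = Counter(tokenise(reference))
    tokens = tokenise(text)
    if not tokens:
        raise ValueError("text contains no word tokens")
    vocab_size = len(set(ref_counts) | set(tokens))
    total = sum(ref_counts.values())
    log_prob = sum(
        math.log((ref_counts[t] + 1) / (total + vocab_size)) for t in tokens
    )
    return math.exp(-log_prob / len(tokens))
```

Under these definitions, a passage of equal-length sentences has a burstiness of zero, and text built from words the reference model has seen often has lower perplexity than text built from words it has never seen.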

The logic behind these metrics was reasonable when they were developed. Large language models, optimised to produce smooth and statistically probable text, tend to score low on both. Human writing, with its unexpected word choices and irregular sentence rhythms, tends to score higher. Detectors were trained on this distinction.

The problem is that the distinction has eroded in every direction at once.

Three Ways Detectors Fail

The polish penalty

When students revise and edit their work before submission, they iron out the irregularities that detectors expect from human writers. A logically structured, carefully edited essay reads statistically like machine output because it has been cleaned up to read well. The detector is not finding AI. It is finding editorial quality.

Internal audits from several academic laboratories in late 2025 demonstrated false-positive rates above 30% for professional, human-authored nonfiction writing (Medium, 2026). Teachers who rely on these tools are penalising students for writing well.

One student described the resulting paradox on a community forum: students are now being forced to intentionally degrade their own logical, human prose to satisfy a machine's statistical preference for messiness. "We are effectively punishing clarity," they wrote (r/ChatGPT, 2026).

Discrimination against EAL students

Students writing in English as an Additional Language rely on more formalised grammatical structures and display less idiosyncratic vocabulary than native speakers. Their writing consistently triggers the low-perplexity patterns that detectors associate with machine generation.

Research indicates detection algorithms flag EAL student writing as machine-generated in 61% to 70% of cases (r/ChatGPT, 2026). Any school using detectors for disciplinary action is, in practice, running a system that disproportionately accuses international and EAL students of fraud for adhering to the rules of English grammar. The legal exposure alone should give administrators pause.

The humaniser arms race

AI humaniser tools take machine-generated text and restructure it to inject the burstiness and vocabulary variation that detectors look for. These tools analyse the original text for detection patterns and apply natural language processing to rewrite sentences, deliberately introducing the irregularities that signal human authorship to the algorithm.

A 2026 student experiment demonstrated that a GPT-generated essay processed through a humaniser tool bypassed Undetectable AI, ZeroGPT, Copyleaks, and GPTZero within fifteen minutes, achieving a 0% AI score across all four platforms (TimelyGrader, 2026). The most deliberate instances of academic fraud are producing the cleanest detection results. The students who are actively working to cheat are the least likely to be caught.

What the Humaniser Arms Race Means for Your Classroom

The practical consequence of the humaniser arms race is that detector scores are now uninformative in both directions. A high AI probability score could reflect genuine machine generation, or it could reflect a student who writes in formal, structured prose. A 0% AI score could reflect genuine human authorship, or it could reflect a student who ran machine-generated text through a humaniser tool for fifteen minutes before submitting.
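The reasoning can be made concrete with Bayes' rule. In the sketch below, the 30% false-positive rate is the figure cited in this article. The share of AI-written submissions (`prior_ai`) and the detector's hit rate on AI text (`sensitivity`) are illustrative assumptions, not measured values, and the function name is hypothetical.

```python
def posterior_ai_given_flag(prior_ai, sensitivity, false_positive_rate):
    """Bayes' rule: probability a text is AI-written given that it was flagged."""
    flagged_ai = sensitivity * prior_ai
    flagged_human = false_positive_rate * (1.0 - prior_ai)
    if flagged_ai + flagged_human == 0:
        raise ValueError("the detector never flags anything under these inputs")
    return flagged_ai / (flagged_ai + flagged_human)


# 0.30 is the false-positive rate cited in the article; the prior and the
# sensitivity are assumptions chosen only to illustrate the arithmetic.
print(round(posterior_ai_given_flag(prior_ai=0.10, sensitivity=0.80,
                                    false_positive_rate=0.30), 3))  # prints 0.229
```

Under these assumed inputs, fewer than one in four flagged essays would actually be machine-written. If humanised text pushes the sensitivity down until it equals the false-positive rate, the result equals the prior, meaning the flag adds no information at all.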

The institutions that moved earliest on this have been clear. UC San Diego Extended Studies deactivated the Turnitin detection module in April 2025. Cornell University and the University of Pittsburgh have both explicitly recommended against using detector scores as the basis for disciplinary action (Cornell University, 2026).

The risk of proceeding with discipline based on a detector score is not abstract. Students have challenged academic integrity findings, produced their own counter-reports from alternative detectors showing 0% AI, and escalated complaints that have cost institutions far more time and credibility than the original incident would have if handled differently.

A detector score is not evidence. Treating it as evidence is a liability.

What to Use Instead

The three tools that actually work do not require software subscriptions and do not discriminate against any group of students.

Stylometric comparison. Compare the suspected submission directly against prior handwritten or in-class work from the same student. Look for shifts in vocabulary complexity, sentence structure, and punctuation habits. The evidence is the contrast between this submission and the student's own documented history. That contrast is something an administrator can review, a parent can examine, and a disciplinary process can evaluate. A detector score is not.
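For teachers who want to put numbers beside their own reading, a minimal sketch of such a comparison follows. The feature set (sentence length, word length, vocabulary variety, comma and semicolon use) and the function names are assumptions chosen for illustration, not an established stylometric standard. The output is meant to support a human comparison of the two texts, not to replace it with another score.

```python
import re
from statistics import mean


def style_profile(text):
    """Return a few simple style measurements for one piece of writing."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text needs at least one sentence and one word")
    return {
        "mean_sentence_words": len(words) / len(sentences),
        "mean_word_chars": mean(len(w) for w in words),
        "type_token_ratio": len({w.lower() for w in words}) / len(words),
        "commas_per_sentence": text.count(",") / len(sentences),
        "semicolons_per_sentence": text.count(";") / len(sentences),
    }


def compare_profiles(baseline_text, submission_text):
    """Map each feature to (baseline value, submission value, difference)."""
    base = style_profile(baseline_text)
    sub = style_profile(submission_text)
    return {name: (base[name], sub[name], sub[name] - base[name]) for name in base}
```

Type-token ratio falls as texts get longer, so the comparison is fairest between samples of similar length, and in-class writing done under time pressure will naturally differ somewhat from polished homework.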

The oral defence. Ask the student to explain specific arguments from their submission in their own words. Ask them to define specific words they used. Ask them where they found a particular source. A student who wrote the work can engage with these questions. A student who did not will struggle within the first two exchanges. This approach is slower than a detector scan. It is more accurate and produces no false positives.

The process trail. Request the Google Docs version history or Microsoft Word track changes for the submitted document. Genuine human writing has a revision trail: deletions, restructuring, progressive composition across multiple sessions. A 1500-word essay that appeared in a document in a single paste event with no subsequent editing has no process trail. That absence is reviewable evidence that any administrator can examine without relying on any algorithmic output.
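The same check can be sketched in code once the version history has been transcribed by hand. The `Snapshot` record, the 30-minute session gap, and the function name below are hypothetical. Nothing here calls a Google Docs or Word API, and a falling word count is used only as a rough proxy for deletions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Snapshot:
    """One saved version: when it was saved and how many words it held."""
    saved_at: datetime
    word_count: int


def summarise_trail(snapshots, session_gap=timedelta(minutes=30)):
    """Summarise a revision history into a few reviewable numbers."""
    if not snapshots:
        raise ValueError("no snapshots supplied")
    ordered = sorted(snapshots, key=lambda s: s.saved_at)
    final_words = ordered[-1].word_count
    sessions = 1
    shrinking_versions = 0
    # Words already present in the first version count as the first jump.
    largest_jump = ordered[0].word_count
    for prev, cur in zip(ordered, ordered[1:]):
        if cur.saved_at - prev.saved_at > session_gap:
            sessions += 1
        delta = cur.word_count - prev.word_count
        largest_jump = max(largest_jump, delta)
        if delta < 0:
            shrinking_versions += 1
    return {
        "versions": len(ordered),
        "sessions": sessions,
        "shrinking_versions": shrinking_versions,
        "largest_jump_share": largest_jump / final_words if final_words else 0.0,
    }
```

A single version holding the whole essay gives a `largest_jump_share` of 1.0 with one session and no shrinking versions. A drafted essay typically shows several sessions and a smaller share. The summary is a prompt for a conversation with the student, since a paste can also come from a draft written elsewhere.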

For the full six-step framework for handling suspected AI submissions without detector evidence, see "AI Didn't Cheat. Your Student Did."

FAQ

Why do AI detectors flag human writing as AI-generated?

AI detectors measure perplexity and burstiness. Well-edited human writing scores low on both metrics because revision removes the irregularities detectors associate with human authorship. False-positive rates above 30% have been documented for professional human-authored writing. EAL students are flagged at rates between 61% and 70%.

Can students bypass AI detectors?

Yes, routinely. AI humaniser tools restructure machine-generated text to inject the sentence variation detectors look for. A 2026 experiment showed that a GPT-generated essay processed through a humaniser achieved a 0% AI score across four major detection platforms within fifteen minutes.

Should teachers use AI detector scores as evidence in disciplinary cases?

No. Cornell and the University of Pittsburgh have both explicitly recommended against it because of high false-positive rates and susceptibility to bypass, and UC San Diego Extended Studies deactivated the Turnitin detection module in April 2025. A detector score, high or low, is not reliable evidence of anything.

What should teachers use instead of AI detectors?

Stylometric comparison against prior student work, an oral defence asking the student to explain their own arguments, and the document version history showing the drafting process. These three tools are more accurate than any detector and do not discriminate against EAL students or students who write formally.

Sources

- Covey, K. Why AI Detectors Mislabel Human Writing: New Data for 2026. Medium, 2026. medium.com

- TimelyGrader. How I Fooled the Top AI Detectors Within 15 Minutes. 2026. timelygrader.ai

- Cornell University. AI and Academic Integrity. 2026. cornell.edu

- UC San Diego. AI Detection Deactivation: Instructor Action Required. 2025. ucsd.edu

- r/ChatGPT. Turnitin is acting like a Principal who punishes you for a bad essay. 2026. reddit.com