How to Design AI-Proof Assignments in 2026

The take-home essay was always a proxy for learning. AI did not break it. It exposed that it was already broken. The fix is not better surveillance. It is assignments where doing the work is easier than bypassing it.

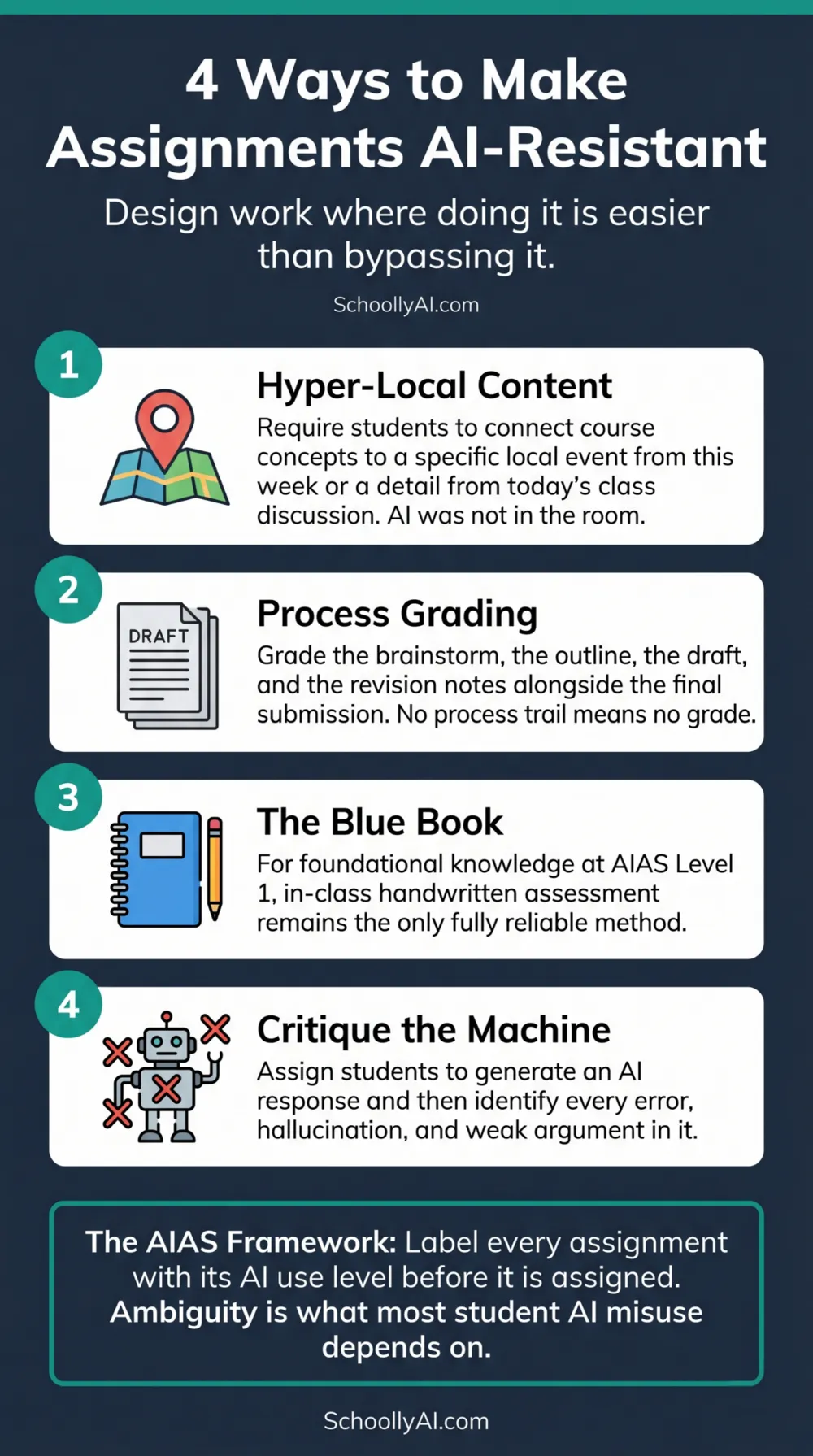

- AI-resistant assignments require content that language models genuinely cannot produce: local, experiential, and time-specific information.

- Labelling every assignment with an AIAS level removes the ambiguity that most student AI misuse depends on.

- Process grading evaluates the evolution of work over time. When the process is the evidence, there is no final product to fake.

- Assigning students to critique AI output is harder to fake than submitting AI output uncritically.

The Core Principle

The take-home essay became vulnerable to AI because it was already vulnerable to pay-for-essay services, older siblings, and copy-paste plagiarism. What changed was the friction. Every workaround that used to require time, money, or effort is now free and instantaneous. The wager that most students would do the work themselves because the alternatives were inconvenient no longer holds.

The response is not to rebuild walls around the old format. It is to design assessments where the act of doing the work genuinely produces something the shortcut cannot.

Scholars Tricia Bertram Gallant and David Rettinger frame this clearly in their 2025 book on academic integrity in the age of AI: the opposite of cheating is not compliance. It is learning (UC San Diego, 2026). If an assignment is designed so that bypassing the work produces the same result as doing it, the assignment has a structural problem. The solution is structural, not disciplinary.

Label Every Assignment with an AIAS Level

The AI Assessment Scale (AIAS) provides a five-level system for communicating exactly what AI use is permitted on any specific task. The most important function of the AIAS is not its taxonomy. It is what that taxonomy eliminates: ambiguity.

Most student AI misuse does not start with deliberate fraud. It starts with a student who is not sure what the rules are, who uses a tool that makes the task easier, and who rationalises that what they did was within the spirit of the assignment. When a teacher labels an assignment as AIAS Level 2 (AI for planning only, human composition required) in the syllabus and reviews that definition with the class, the rationalisation disappears. A student who submits fully machine-generated work at Level 2 has violated a clear, documented, communicated standard. That is a different and far easier conversation.

Label every major assessment before it is assigned. State in writing what each level permits. Review it with the class out loud. For the full AIAS table with classroom applications, see "AI Didn't Cheat. Your Student Did."
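For teachers who keep assignment sheets in a spreadsheet or a learning management system, the labelling step can be sketched in a few lines of Python. This is a minimal illustration, not part of the AIAS itself: the level names follow the five levels as this article summarises them, and the `Assignment` class and `label` helper are hypothetical names introduced only for this example.

```python
from dataclasses import dataclass
from enum import IntEnum


class AIASLevel(IntEnum):
    """The five AIAS levels, named as this article summarises them."""
    NO_AI = 1
    PLANNING_ONLY = 2
    COLLABORATION_WITH_REVIEW = 3
    FULL_AI = 4
    EXPLORATION = 5


# Short student-facing wording per level (illustrative phrasing, adapt as needed).
PERMITTED_USE = {
    AIASLevel.NO_AI: "no AI",
    AIASLevel.PLANNING_ONLY: "AI for planning only, human composition required",
    AIASLevel.COLLABORATION_WITH_REVIEW: "collaboration with review",
    AIASLevel.FULL_AI: "full AI",
    AIASLevel.EXPLORATION: "exploration",
}


@dataclass
class Assignment:
    """Hypothetical record for one assessment and its declared AIAS level."""
    title: str
    level: AIASLevel


def label(assignment: Assignment) -> str:
    """Return the one-line label to print on the assignment sheet."""
    level = assignment.level
    return f"{assignment.title} (AIAS Level {level.value}: {PERMITTED_USE[level]})"


print(label(Assignment("Novel themes essay", AIASLevel.PLANNING_ONLY)))
```

Because `Assignment` requires a level, no assessment can be created without one, which mirrors the point of this section: the ambiguity is removed before the task is handed out.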

Four Assignment Strategies That Work

1. Hyper-local and experiential content

Large language models are trained on generalised, historical, internet-scale data. They are poor at synthesising content that is highly localised, immediately current, or rooted in a specific person's lived experience.

Assignments that require students to connect course concepts to a specific event in their community from the past week, a tangent from a particular class discussion that is not publicly available, or their own personal experience with the material are difficult to outsource. The student's genuine presence in the class is a prerequisite for the work. The AI was not in the room.

Examples: Analyse the election results in your municipality through the lens of this week's economics unit. Describe a moment from today's lab that connects to the concept we discussed on Tuesday. Argue for or against a position using only examples you have personally observed.

2. Process grading

Rather than grading only the final polished submission, grade the evolution of the work: the initial brainstorm, the handwritten outline, the first rough draft with peer feedback notes, the revision record showing what changed and why. Require each stage to be submitted alongside the final product.

When the documented process is part of the grade, there is no final product to fake. A student who pasted in a machine-generated essay has no brainstorm, no outline, no draft history, and no revision notes. The absence of the process trail is visible without any detector scan. Harvard's academic integrity guidance for AI specifically recommends this approach as a front-line strategy (Harvard, 2026).
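The check described above, whether a process trail exists at all, can be sketched as a short script. This is a rough sketch under stated assumptions: the stage names mirror the four stages listed in this section, while the `Submission` class, the helper functions, and the flat five points per stage are hypothetical choices rather than a recommended rubric.

```python
from dataclasses import dataclass, field

# Stages named in this section; rename them to match your own course.
REQUIRED_STAGES = ("brainstorm", "outline", "rough_draft", "revision_record")


@dataclass
class Submission:
    """Hypothetical record of which process stages a student handed in."""
    student: str
    stages_submitted: set[str] = field(default_factory=set)


def missing_stages(submission: Submission) -> list[str]:
    """List the required stages that were not handed in, in course order."""
    return [s for s in REQUIRED_STAGES if s not in submission.stages_submitted]


def process_score(submission: Submission, points_per_stage: int = 5) -> int:
    """Award a flat (assumed) number of points for each documented stage."""
    documented = len(REQUIRED_STAGES) - len(missing_stages(submission))
    return documented * points_per_stage


essay_only = Submission("Student A")  # final essay, no process trail
full_trail = Submission("Student B", set(REQUIRED_STAGES))

print(missing_stages(essay_only))
print(process_score(full_trail))
```

A script like this only confirms that each stage was submitted. Judging whether the brainstorm, outline, and drafts actually show the work evolving still requires reading them.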

3. The return of the blue book

For foundational knowledge assessment at AIAS Level 1, in-class handwritten examinations remain the most reliable tool. This is not a retreat from technology. It is matching the assessment method to the learning objective. A Level 1 assessment is measuring whether the student has internalised knowledge. The only way to confirm that is to ask them to produce it without assistance.

The resurgence of blue book exams and in-class writing sessions in 2025 and 2026 reflects practitioner reality: "You can no longer assign essays to be completed out of class and grade them the way you used to. The solution is proctored assessments: in-person presentations, oral exams, whatever." (r/Professors, 2026)

4. Critiquing the machine

Instead of prohibiting AI on an assignment, require students to generate a machine response to a complex prompt and then critically analyse, correct, and expand on the output. The student must identify where the AI hallucinated, where its argument is weak, where its reasoning is statistically probable but factually wrong.

This is an AIAS Level 4 task. It is harder to fake than a straightforward essay because it requires the student to demonstrate genuine understanding of the material to critique the AI output. A student who does not understand the topic cannot identify what the AI got wrong. The cognitive work is unavoidable.

This approach has a secondary benefit: it teaches students exactly what AI is and is not capable of, which is a more durable lesson than simply being told not to use it.

Examples by Subject Area

| Subject | Standard vulnerable assignment | AI-resistant version |
|---|---|---|
| English | Write a 500-word essay on the themes of the novel | Write a 500-word response that connects one theme to something you observed in your community this week. Submit your outline and first draft alongside the final version. |
| History | Analyse the causes of World War One | Generate an AI analysis of the causes of World War One, then write a 400-word critique identifying two specific claims the AI got wrong or oversimplified, with sources. |
| Science | Write a lab report on the experiment | Write a lab report that includes your specific observations from this session, including one thing that did not go as expected and what you think caused it. Handwritten notes from the session must be submitted. |
| Social Studies | Describe the impacts of immigration on Canada | Interview one person in your community about their experience with immigration and connect it to two specific concepts from this unit. Include your interview notes. |
| Mathematics | Complete the problem set | Complete the problem set in class in your own handwriting. For one problem, explain your reasoning in words, including where you got stuck and how you worked through it. |

The pattern across every subject is the same: anchor the work in something the student physically experienced, require evidence of the process, or ask the student to evaluate the AI output rather than produce it. Any one of these three approaches makes the assignment harder to outsource than to complete honestly.

For the full framework for handling a submission you suspect was generated by AI even with strong assignment design in place, see "AI Didn't Cheat. Your Student Did." For the specific oral defence questions to use when a submission does not match a student's history, see "How to Confront a Student About AI Cheating."

FAQ

What makes an assignment AI-resistant?

An assignment becomes AI-resistant when it requires content that language models cannot generate: hyper-local details from a specific class discussion, a personal lived experience, real-time observations, or evidence of a drafting process over time. AI is trained on generalised internet data. It cannot produce information that exists only in a specific classroom, community, or student's life.

What is the AI Assessment Scale (AIAS)?

The AI Assessment Scale (AIAS) is a five-level system for defining acceptable AI use per assignment: Level 1 (no AI), Level 2 (planning only), Level 3 (collaboration with review), Level 4 (full AI), Level 5 (exploration). Labelling each assignment with its AIAS level removes the ambiguity that most student AI misuse relies on.

What is process grading?

Process grading evaluates the evolution of work over time: brainstorms, outlines, drafts, and revision records. When the documented process is part of the grade, there is no final product to fake. A student either did the work over multiple sessions or they did not, and the documentation shows which without any detector scan.

Can AI complete a hyper-local or experiential assignment?

No. Language models are trained on generalised internet data. They cannot generate content that requires physical presence in a specific classroom, knowledge of a local event from the past week, or integration of details from a specific in-class discussion. These elements are genuinely outside what the model can fabricate plausibly.

Sources

- Gallant, T. B. & Rettinger, D. The Opposite of Cheating: Teaching for Integrity in the Age of AI. 2025. ucsd.edu

- Harvard University. Academic Integrity and Teaching With(out) AI. 2026. harvard.edu

- r/Professors. Students are cheating with AI because you haven't updated your assignments. 2026. reddit.com

- American Psychological Association. Teaching Academic Integrity in the Era of AI. 2026. apa.org