How Does AI Work? A Teacher's Guide

By the 2024-2025 school year, 54% of students and 53% of teachers were actively using AI for school-related tasks, and almost none of them had received any formal training on how the technology actually works (RAND Corporation, 2025). AI is not thinking. It is predicting the next word using statistics and geometry. Once you understand that single fact, everything else clicks into place: why it hallucinates, why student essays written by AI feel slightly off, why detectors fail.

- AI tools like ChatGPT and Gemini are not thinking machines. They are statistical prediction engines trained on text.

- AI generates answers by calculating the most probable next word, not by understanding the question or verifying facts.

- Fluency is not reliability. AI can produce grammatically perfect, confident-sounding text that is completely fabricated.

- Every AI tool currently in schools is Narrow AI. General AI, the sentient kind parents worry about, does not exist yet.

The Strawberry Test: What AI Reveals About Itself

Ask a large language model how many times the letter "r" appears in the word "strawberry." It will confidently tell you two.

Then ask it to spell the word. It will give you S-T-R-A-W-B-E-R-R-Y without hesitation, three r's plain as day. Ask it again to count the r's in the word it just spelled. It will still tell you two. Then it will write you a polished, articulate paragraph explaining exactly where those two r's are located.

For any teacher who has ever graded a paper that felt wrong but read perfectly, this is the most useful thing you can know about AI.

The machine is not confused. It is not glitching. It is doing exactly what it was designed to do. It predicts the most statistically probable sequence of words, and "two" follows "how many r's in strawberry" more often in its training data than "three" does. It has no internal alarm that fires when its answer contradicts its own spelling. There is no self-awareness to catch the error.

This is not a bug that will be fixed in the next update. It is the architecture. Understanding why takes about ten minutes, and it changes how you see every AI interaction in your classroom from that point forward.

What AI Actually Is and Is Not

The tools your students are using, ChatGPT, Claude, Gemini, Perplexity, belong to a category called Large Language Models (LLMs). They are not databases. They are not search engines. They are not thinking machines.

An LLM is a mathematical prediction engine built on statistics and probability. It was trained by processing trillions of words from the internet, digitised books, academic papers, and public forums. Through that exposure, it built an enormous map of which words tend to appear near which other words. That map is what it draws on when it generates a response. It does not look anything up.

The technology industry uses language like "the model understands," "the AI reads," or "it knows the answer." None of that is accurate. The model does not understand anything. It calculates what word is most likely to come next given everything that came before it. Those are very different things, and the gap between them is where all the problems in your classroom originate.

How Does AI Work? The Four-Step Breakdown

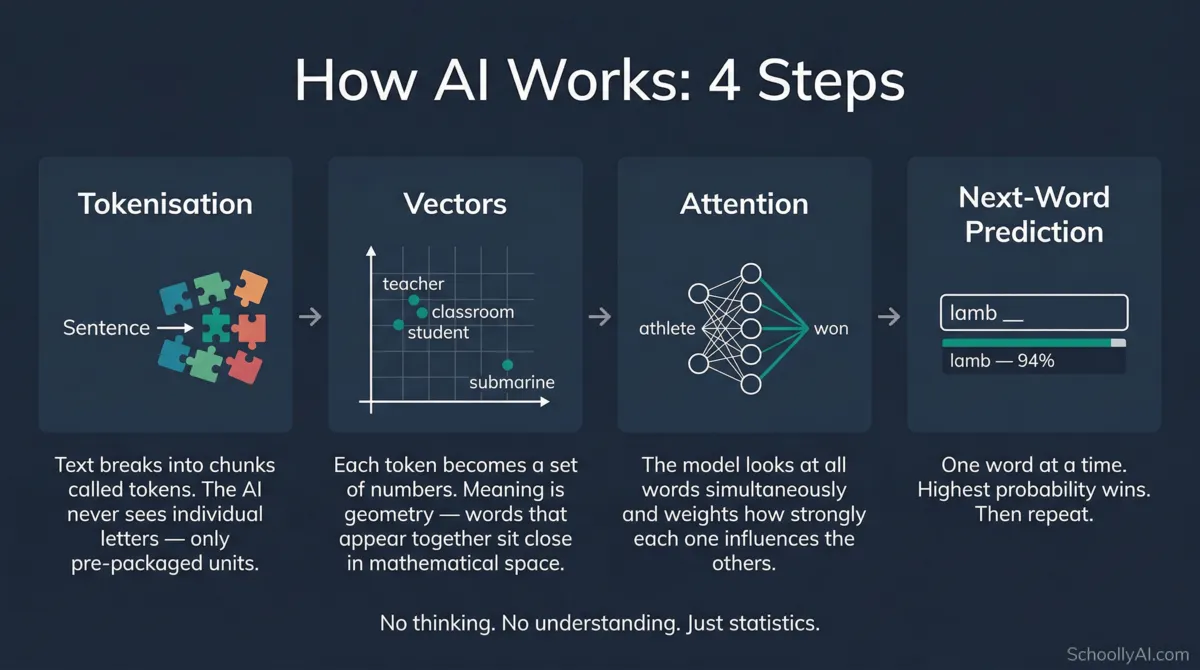

When a student types a prompt into an AI tool, four things happen in sequence. No coding knowledge required to follow this.

Step 1: Tokenisation

The AI cannot read English. Computers only process numbers. So the first thing the system does is break the input text into small units called tokens. A token is not necessarily a full word. It can be a syllable, a prefix, or a punctuation mark. The word "unbelievable" might become three tokens: "un," "believ," and "able."

This is exactly why the AI failed the strawberry test. It never sees the letters in a word. It processes pre-packaged chunks. The letter "r" inside the chunk "strawberry" is invisible to it.
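
To make the chunking concrete, here is a minimal sketch of a tokeniser. The vocabulary and the way it splits "strawberry" are invented for illustration; real tokenisers learn their chunks from data and will split words differently. The point is only that the model ends up with ID numbers, not letters.

```python
# Hypothetical toy vocabulary (an assumption for this sketch). Real
# tokenisers learn tens of thousands of chunks and split words differently.
VOCAB = {"un": 0, "believ": 1, "able": 2, "straw": 3, "berry": 4}


def tokenize(word: str) -> list[str]:
    """Split a word into known chunks, always taking the longest match."""
    tokens = []
    i = 0
    while i < len(word):
        # Try the longest remaining slice first, then shrink it.
        for j in range(len(word), i, -1):
            if word[i:j] in VOCAB:
                tokens.append(word[i:j])
                i = j
                break
        else:
            raise ValueError(f"no token covers {word[i:]!r}")
    return tokens


def encode(word: str) -> list[int]:
    """Return what the model actually receives: a list of ID numbers."""
    return [VOCAB[token] for token in tokenize(word)]


print(tokenize("unbelievable"))  # ['un', 'believ', 'able']
print(encode("strawberry"))      # [3, 4]
```

In this sketch, "strawberry" reaches the model as the two numbers 3 and 4. Nothing in those numbers says how many r's the original word contained.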

Step 2: Vectors

Each token gets converted into a long list of numbers called a vector. During training, the model learned that the vector for "teacher" sits mathematically close to "classroom," "student," and "pedagogy," and very far from "submarine." The model does not know what a teacher is. It knows the mathematical coordinates of the word relative to every other word it has ever processed.

Meaning, in this system, is literally geometry.
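
A rough way to picture "close" and "far" is cosine similarity, a standard measure of whether two vectors point in the same direction. The three-number vectors below are made up by hand for this sketch; real models learn vectors with hundreds or thousands of numbers.

```python
import math

# Hand-made vectors for illustration only (an assumption, not real model data).
VECTORS = {
    "teacher":   [0.9, 0.8, 0.1],
    "classroom": [0.8, 0.9, 0.2],
    "submarine": [0.1, 0.0, 0.9],
}


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Return a value near 1 for similar directions and near 0 for unrelated ones."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


near = cosine_similarity(VECTORS["teacher"], VECTORS["classroom"])
far = cosine_similarity(VECTORS["teacher"], VECTORS["submarine"])

print(f"teacher vs classroom: {near:.2f}")  # close to 1
print(f"teacher vs submarine: {far:.2f}")   # much closer to 0
```

The calculation never consults a definition of "teacher." It only compares lists of numbers.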

Step 3: The Attention Mechanism

The numerical data passes through a neural network that uses something called the Attention Mechanism. This allows the model to look at all the words in a prompt simultaneously rather than reading left to right. It assigns a mathematical weight to how strongly each word influences every other word in the sentence.

In the sentence "The athlete who trained daily won the race," the mechanism links "won" back to "athlete" rather than the closer word "race." This is how the model keeps track of what a long essay is actually about from paragraph to paragraph.
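
The weighting can be sketched with the standard scaled dot-product formula: score each word against the word being processed, then turn the scores into weights that add up to 1. The two-number vectors here are invented so that "athlete" scores highest; in a real model they are learned during training.

```python
import math

# Hand-picked vectors for illustration (an assumption, not learned values).
KEYS = {
    "athlete": [1.0, 0.9],
    "trained": [0.3, 0.2],
    "daily":   [0.1, 0.0],
    "race":    [0.5, 0.1],
}
QUERY_WON = [1.0, 1.0]  # hypothetical vector for the word "won"


def softmax(scores: list[float]) -> list[float]:
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query: list[float], keys: dict[str, list[float]]) -> dict[str, float]:
    """Score every word against the query, then normalise the scores."""
    scale = math.sqrt(len(query))
    words = list(keys)
    scores = [sum(q * k for q, k in zip(query, keys[w])) / scale for w in words]
    return dict(zip(words, softmax(scores)))


weights = attention_weights(QUERY_WON, KEYS)
for word, weight in weights.items():
    print(f"{word:8s} {weight:.2f}")
```

With these made-up numbers, "athlete" receives the largest share of the weight for "won," even though "race" sits closer to it in the sentence.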

Step 4: Next-Word Prediction

Everything above leads to one goal: predict the next token. The model assigns a probability to every token in its vocabulary. In the simplest setup it picks the highest-probability token, outputs it, then runs the entire calculation again to predict the token after that. Then again. Then again. (In practice, most tools add a little randomness when choosing among the top candidates, which is why the same prompt can produce different answers.)

Every essay, every lesson plan, every lab report a student submits that was written by AI was produced this way: one statistically probable word at a time, with no understanding of what any of it means.
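
The loop itself is simple enough to sketch in a few lines. The probability table below is invented and only looks at the previous word; a real model recalculates its probabilities from the entire prompt at every step. The repeat-until-done structure is the part worth noticing.

```python
# Hypothetical probabilities, written by hand for this sketch. A real model
# computes these numbers fresh at each step from everything written so far.
NEXT_WORD_PROBS = {
    "the":     {"student": 0.6, "essay": 0.3, "submarine": 0.1},
    "student": {"wrote": 0.7, "read": 0.3},
    "wrote":   {"an": 0.8, "the": 0.2},
    "an":      {"essay": 0.9, "apple": 0.1},
    "essay":   {"<end>": 1.0},
}


def generate(start: str, max_words: int = 10) -> str:
    """Build a sentence by repeatedly choosing the most probable next word."""
    words = [start]
    while len(words) < max_words:
        options = NEXT_WORD_PROBS.get(words[-1])
        if not options:
            break  # no known continuation for this word
        best = max(options, key=options.get)
        if best == "<end>":
            break  # the table says the sentence is finished
        words.append(best)
    return " ".join(words)


print(generate("the"))  # the student wrote an essay
```

At no point does the loop check whether a student really wrote an essay. It only follows the highest number at each step.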

Video: how large language models generate text. Source: The Theory Of Code.

Why AI Sounds Confident When It's Wrong

The most disorienting thing about AI for teachers is not that it gets things wrong. It is that it gets things wrong while sounding completely certain.

A March 2026 analysis from the LSE Business Review stated plainly that a system can generate a grammatically flawless essay on a subject it does not comprehend (LSE Business Review, March 2026). The polish, the complete sentence structure, the authoritative tone: these are cognitively powerful signals that humans are wired to read as evidence of understanding. They are not.

When a student asks the AI to write a research paper on a niche historical event with sparse training data, the model does not know it does not know. It has no metacognition, no ability to reflect on its own knowledge gaps. So it fulfills its mathematical objective: it predicts words that sound like a historical essay. It strings together plausible names, dates, and academic phrasing. The result is polished, confident, and sometimes entirely fictitious.

For more on why this happens mechanically, see Why Does AI Hallucinate Facts?

The danger is not that the AI lies. It is that it cannot tell the difference between a fact and a plausible-sounding fabrication, and it presents both with identical confidence.

Video: what AI means for how students learn and build knowledge. Source: Veritasium.

What Happens in the Classroom When Students Use AI

When a student pastes an assignment prompt into a chatbot, the AI tokenises the prompt, maps the vectors, runs the attention mechanism, and produces statistically probable text. It takes seconds. The resulting essay is frequently grammatically correct, narratively coherent, and utterly devoid of genuine understanding.

Over 60% of students in a 2026 RAND Corporation report expressed concern about using AI for homework, with a growing number aware of what researchers are calling "cognitive stunting" (RAND, March 2026). When students outsource the cognitive work of writing, the parsing, the synthesis, the search for the right word, they bypass the neurological process that builds the skill in the first place.

One teacher on r/Teachers framed it plainly: "We don't give students calculators to learn arithmetic because it undermines the learning process and learning outcomes. This is the same for AI tutoring."

The writing that comes back lacks what experienced teachers recognise immediately: the rough texture of authentic human thought. A student who wrote their own essay made choices, hit dead ends, tried a word and deleted it. AI has no dead ends. It has only the most probable path through the sentence, which produces text that is smooth in a way real thinking never quite is.

For a deeper look at the cognitive research, see AI Cognitive Stunting: What the Research Says.

What Happens When Teachers Use AI

Teachers are subject to the same mechanics as students when they use these tools. The AI produces the most statistically average, consensus output for any given prompt. For lesson plans, that means safe, generic, and uninspired. For parent emails, it means polished but impersonal. For rubrics, it means serviceable but disconnected from the specific dynamics of your class.

A more serious concern surfaced repeatedly in teacher communities through 2025 and 2026: the risk that offloading professional judgment to AI makes the profession appear more replaceable. As one teacher wrote, "Schools will give us higher workloads to compensate for alleged AI benefits... we're contributing to our devaluing by bringing AI into the classroom."

This is not an argument against ever using AI tools. It is an argument for understanding what you are handing over when you do.

Narrow AI vs. General AI: What's Actually in Your School

Much of the parent anxiety about AI in schools comes from confusing what currently exists with what belongs to science fiction.

Every AI tool in schools right now, ChatGPT, Claude, Gemini, Grammarly, adaptive reading platforms, automated grading software, is classified as Narrow AI. It is trained to perform specific tasks within defined parameters. A chatbot cannot drive a car. A traffic algorithm cannot write a sonnet.

| AI Type | What It Is | Current Status | Classroom Example |
|---|---|---|---|
| Narrow AI (ANI) | Trained for specific tasks using pattern recognition. Cannot generalise beyond its programming. | Exists now. Powers every commercial AI tool available. | ChatGPT writing an essay; adaptive math software; grammar checkers. |
| General AI (AGI) | Hypothetical system with human-like reasoning across all domains. Autonomous learning, genuine creativity. | Does not exist. Remains theoretical and speculative. | A sentient tutor that genuinely understands a student's emotional state and adapts accordingly. |

The sentient, all-knowing AI that parents worry about is not in your school. The narrow, statistically-driven prediction engine is. These require different responses, different policies, and different conversations with students and parents.

When technology executives suggest AGI is imminent, they are describing a target, not a delivery date. Scaling up next-word prediction does not automatically produce consciousness or general reasoning. The architecture does not support it.

Video: real LLM capabilities vs hype in 2026. Source: Digital Disruption.

The Tells: How to Spot AI Writing Without a Detector

AI detection software had a difficult 2025 and is having a worse 2026. False positive rates are high enough to have destroyed teacher-student relationships in documented cases, with original student work flagged as machine-generated and accusations that could not be walked back.

The more reliable approach is manual. AI writing has specific stylistic patterns that come from its statistical incentives:

Low burstiness. Human writing mixes short punchy sentences with longer complex ones. AI writing produces a uniform, monotonous rhythm that reads as robotic once you know what you are looking for.

Signature vocabulary. LLMs are trained heavily on formal, academic, and corporate text. They aggressively overuse specific words. If a student's essay contains "delve," "myriad," "testament," "pivotal," "tapestry," or "realm," that is a signal worth noting. See the full teacher's blacklist at Words AI Uses Too Much.

Generic transitions. AI defaults to sweeping context-setting phrases like "In the constantly evolving world of..." or "In today's fast-paced digital landscape..."

No texture. Human writing is specific. It contains personal anecdotes, unusual word choices, genuine vulnerability. AI writing is the synthesised average of existing content. It has no lived experience to draw on.
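
Two of these tells, sentence rhythm and signature vocabulary, can be put into rough numbers. The sketch below is an illustration of what "burstiness" means, not a detector: the function names, the sample texts, and the choice of standard deviation as the measure are all assumptions made here, and a low score proves nothing about who wrote a piece.

```python
import re
import statistics

# Words named in this guide as overused by LLMs.
FLAGGED_WORDS = {"delve", "myriad", "testament", "pivotal", "tapestry", "realm"}


def sentence_lengths(text: str) -> list[int]:
    """Count the words in each sentence, splitting on ., ! and ?."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Spread of sentence lengths: higher means a more varied rhythm."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths)


def flagged_word_count(text: str) -> int:
    """Count how many words in the text appear on the flagged list."""
    words = re.findall(r"[a-z']+", text.lower())
    return sum(1 for word in words if word in FLAGGED_WORDS)


# Invented sample texts for the sketch.
varied = ("I froze. The whole gym went quiet while I tried to remember "
          "the first line of my speech. Nothing.")
uniform = ("Sports are a pivotal part of school life. Teams are a testament "
           "to hard work. Students delve into a myriad of skills.")

print(burstiness(varied) > burstiness(uniform))  # True
print(flagged_word_count(uniform))               # 4
```

Numbers like these are at most a prompt for a conversation with the student. Formal or uniform human writing can score the same way.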

The most effective verification in 2026 is an oral defence. Ask the student to explain their word choices and defend their thesis out loud. If they cannot explain what "delineate" means despite using it perfectly in their essay, the source of the text becomes clear.

Common Misconceptions Teachers and Parents Carry

"AI is infallible — my student trusted it, so it must be right."

The Strawberry test answers this. The machine produces plausible text, not verified text. A student who submits an essay citing a book that does not exist is not lying. They did not check. The AI did not signal any doubt because it has no access to doubt.

"AI detectors are reliable evidence of cheating."

They are not. Detection tools analyse writing patterns like sentence length variation and word predictability. Human writers, especially neurodivergent students, ESL students, or those writing formal academic prose, often produce patterns the software flags as machine-generated. Treating detector output as proof is a policy error with serious consequences for students.

"Using AI builds real thinking skills."

Using a tool builds familiarity with that tool. It does not build the underlying cognitive skills the tool replaces. Knowing how to prompt an AI is a different skill from knowing how to write, reason, or research.

"AI-generated educational materials are good enough."

Teachers in 2025 and 2026 reported widespread frustration with district-mandated AI-generated materials: sloppy analogies, stock images that did not match content, reading levels wildly mismatched to the target age group. Students notice. One student summed it up: "I'm not gonna put in effort if they just used AI."

Expert Perspectives on AI in Schools

"As many hype up the possibilities for AI to transform education, we cannot let the negative impact on students get lost in the shuffle. Our research shows AI use in schools comes with real risks, like large-scale data breaches, tech-fueled sexual harassment and bullying, and treating students unfairly."

Elizabeth Laird, Director of Equity in Civic Technology, Center for Democracy and Technology. Education Week, October 2025

"Education has always been, and will remain, a deeply human endeavor. AI offers an opportunity to elevate our practice, not to replace our expertise."

Timothy Neville, Instructional Technology Specialist, Farmington Public Schools. UConn Today, July 2025

"The classroom is taking on an almost sacred dimension for me now, where people are gathering together to be young and human together, and grow up together and learn to argue in a very complicated country together."

Daniela Di Giacomo, Associate Professor, University of Kentucky. Stanford AI+Education Summit, 2026

FAQ: How Does AI Work for Teachers

How do AI tools like ChatGPT work?

AI tools like ChatGPT work by breaking text into fragments called tokens, converting them into numbers, and then calculating the most statistically probable next word in a sequence. The model does not understand the question. It predicts what a good answer looks like based on patterns in its training data.

Why does AI make things up?

AI has no ability to check whether what it is saying is true. It is optimised to produce plausible-sounding text, not accurate text. When its training data on a topic is sparse, it fills the gap with words that sound like they belong. See Why Does AI Hallucinate Facts? for the full breakdown.

Is the AI in schools sentient or self-aware?

No. Every AI tool currently available to students, ChatGPT, Claude, Gemini, and similar, is Narrow AI. It performs specific tasks using statistical pattern matching. It has no self-awareness, no goals, no emotions, and no understanding of the text it produces. General AI does not exist yet.

Why do AI detectors flag genuine student writing?

Detection tools work by analysing writing patterns like sentence length variation and predictable vocabulary. Students who write in formal, uniform prose, including many neurodivergent students and English language learners, can trigger these tools unintentionally.

How can I tell whether a student used AI?

Ask them to explain it. An oral defence, asking the student to define words they used and defend their argument verbally, is the most reliable method available in 2026. For specific vocabulary patterns to watch for, see Words AI Uses Too Much: A Teacher's Blacklist.

Does using AI harm student learning?

The research says yes, if students use it to bypass rather than support their thinking. A 2026 RAND Corporation report found a growing number of students recognise AI use is harming their critical thinking skills. See AI Cognitive Stunting: What the Research Says.

What is the difference between Narrow AI and General AI?

Narrow AI performs specific tasks: generating text, recognising images, translating languages. Every AI tool in schools today is narrow AI. General AI would reason across all domains like a human. General AI does not exist in 2026 and remains theoretical.

Sources

- RAND Corporation. AI Use in Schools Is Quickly Increasing but Guidance Lags Behind. 2025. rand.org

- RAND Corporation. More Students Use AI for Homework, and More Believe It Harms Critical Thinking. March 17, 2026. rand.org

- Poynton, T. When AI sounds right but is wrong: What Strawberry reveals. LSE Business Review, March 23, 2026. lse.ac.uk

- Psychology Today. Can AI Really Think? October 2025. psychologytoday.com

- Center for Engaged Learning. AI Hallucinations Matter for More Than Academic Integrity. 2025. centerforengagedlearning.org

- Education Week. Rising Use of AI in Schools Comes With Big Downsides for Students. October 2025. edweek.org

- UConn Today. AI in K-12 Education: Partners in Progress, Not Replacements. July 2025. uconn.edu

- Stanford Report. Five myths about AI and education. March 2026. stanford.edu

- NareshIT. How Large Language Models Work: Simple Guide 2026. nareshit.com

- PMC/NIH. AI-Generated Slop in Online Biomedical Science Educational Videos. 2025. pmc.ncbi.nlm.nih.gov
