Why Does AI Hallucinate Facts?

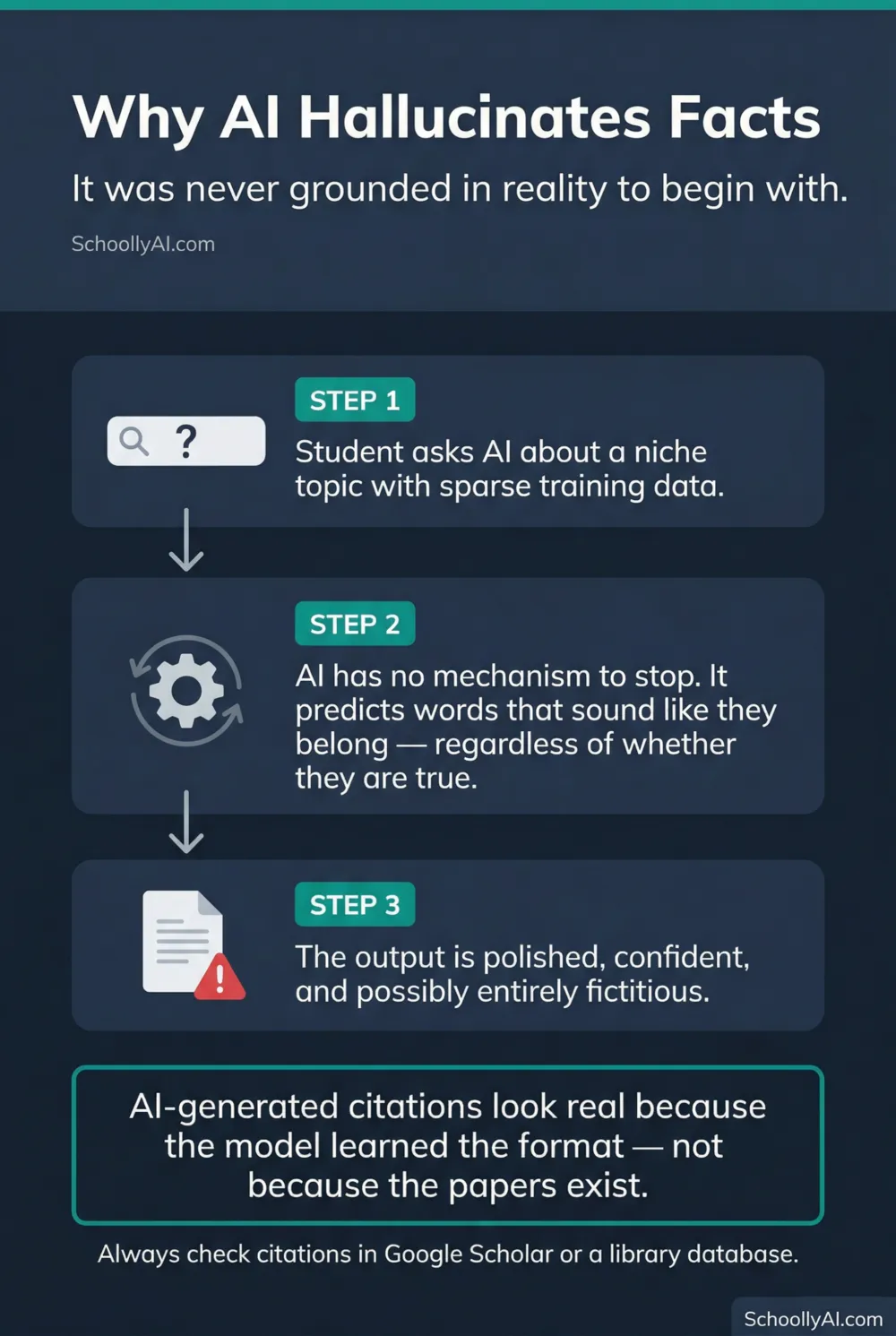

AI hallucinates facts because it was never grounded in reality to begin with. The term "hallucination" implies the model had a grip on truth and lost it. It did not. Here is what is actually happening, and why it matters for every teacher who accepts student research submissions.

- AI does not retrieve facts from a verified database. It predicts words that fit the shape of the document.

- When training data on a topic is sparse, the model invents plausible-sounding content rather than stopping.

- AI-generated citations look real because the model has learned the format, not because the papers exist.

- Direct citation checking, not detector software, is the only reliable safeguard.

It Was Never Grounded in Reality

The word "hallucination" is a misnomer that has stuck in public discourse because it describes the experience from the outside: the AI seems to see something that is not there. But it implies the model had accurate perception and then lost it. That is not what happened.

A large language model does not access the world. It accesses a statistical map of how words relate to other words, built from trillions of tokens scraped from the internet, books, and academic papers during training. When it generates a response, it is not looking up facts. It is predicting what text looks like in this context: what words typically appear in a document of this type, on this topic, with this structure.
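The idea of a "statistical map" can be sketched with a toy word-pair counter. This is a deliberately tiny illustration, not how any production model is built: the corpus is invented, and the names `following`, `predict_next`, and `generate` are hypothetical. Real models use neural networks over far more text, but the principle of continuing with whatever usually comes next is the same.

```python
from collections import Counter, defaultdict

# Invented four-sentence corpus; a real model trains on vastly more text.
corpus = (
    "the treaty was signed in 1848 . "
    "the treaty was signed in 1848 . "
    "the treaty was signed in paris . "
    "the battle was fought in 1848 ."
).split()

# The "statistical map": for each word, count which words follow it.
following = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    following[current][nxt] += 1


def predict_next(word):
    """Return the word that most often followed `word` in the corpus."""
    return following[word].most_common(1)[0][0]


def generate(start, length):
    """Extend `start` by repeatedly appending the most likely next word."""
    words = [start]
    for _ in range(length):
        words.append(predict_next(words[-1]))
    return " ".join(words)


print(generate("battle", 4))  # prints "battle was signed in 1848"
```

The corpus only ever says a battle "was fought", yet the toy model writes "battle was signed in 1848" because "signed" is the most common word after "was". Nothing in the code checks whether the sentence is true.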

Truth and plausibility are not the same thing. AI optimises for plausibility. Truth is a property of the external world. The model has no direct connection to the external world, only to patterns in text produced by humans who did.

How Fabrication Happens

When a student asks an AI to write a research paper on a niche historical event, the model draws on its statistical map. If training data on that specific event is dense, the output will be largely accurate. If training data is sparse or absent, the model does not stop. It has no built-in mechanism to stop. It fulfils its mathematical objective: predict the most probable next word.
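A simplified sketch of why the model keeps going: the final step of next-word prediction turns scores into probabilities that always sum to one, so some word is always available to pick. The scores below are invented for illustration, and `softmax` and `pick` are hypothetical helper names. Real systems have many more candidate words and extra machinery this sketch leaves out.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    top = max(scores.values())
    exps = {word: math.exp(s - top) for word, s in scores.items()}
    total = sum(exps.values())
    return {word: e / total for word, e in exps.items()}


def pick(scores):
    """Return the most probable word and its probability."""
    probs = softmax(scores)
    best = max(probs, key=probs.get)
    return best, probs[best]


# Invented scores: strong evidence versus almost no evidence.
well_covered = {"1848": 6.0, "1849": 1.0, "1850": 0.5}
sparse = {"1848": 0.2, "1849": 0.1, "1850": 0.0}

for label, scores in [("well covered", well_covered), ("sparse", sparse)]:
    word, prob = pick(scores)
    print(f"{label}: outputs {word!r} (probability {prob:.2f})")
```

Both cases output the same date. In the sparse case the probability behind it is much lower, but that number does not appear in the finished text, so the reader sees no difference.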

So it generates words that sound like a historical essay. Plausible names. Plausible dates. Plausible-sounding journal articles, complete with author names, publication years, volume numbers, and page ranges. Every element follows the format correctly. None of it may be real.

This is why AI-generated citations are particularly dangerous in student work. Academic citations have a recognisable structure the model has processed millions of times. It can produce a citation that would pass a quick visual scan and only fail when someone tries to locate the actual paper. Many students, and some teachers, do not check.
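The gap between "looks like a citation" and "refers to a real paper" can be shown with a short sketch. The pattern below is a simplified, assumed journal-citation shape rather than a full style checker, the citation is invented for this example, and `verified_titles` is a hypothetical stand-in for a real library catalogue.

```python
import re

# Simplified, assumed citation shape:
# Surname, I. (Year). Title. Journal, Volume(Issue), Pages.
CITATION_PATTERN = re.compile(
    r"^[A-Z][a-z]+, [A-Z]\. "   # author: Surname, Initial.
    r"\(\d{4}\)\. "             # (year).
    r"[^.]+\. "                 # title.
    r"[^,]+, "                  # journal name,
    r"\d+\(\d+\), "             # volume(issue),
    r"\d+-\d+\.$"               # page range.
)

# Invented citation, written only for this example.
fabricated = (
    "Doe, J. (2019). An invented title for illustration. "
    "Journal of Hypothetical Studies, 12(3), 45-67."
)

# Hypothetical stand-in for a catalogue of titles known to exist.
verified_titles = set()


def looks_like_citation(text):
    """Format check: does the text follow the citation pattern?"""
    return CITATION_PATTERN.match(text) is not None


def is_verified(title):
    """Existence check: is the title in the verified catalogue?"""
    return title in verified_titles


print(looks_like_citation(fabricated))                        # prints True
print(is_verified("An invented title for illustration"))      # prints False
```

The format check passes and the existence check fails. A quick visual scan only performs the first check.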

The Center for Engaged Learning noted in 2025 that AI hallucinations in academic contexts create risk well beyond academic integrity. Students who internalise fabricated scientific claims or historical events as facts carry those errors forward (Center for Engaged Learning, 2025).

Why It Sounds So Convincing

The fabricated content sounds authoritative because the model learned from authoritative sources. It absorbed the tone, the vocabulary, and the rhetorical moves of academic writing. When it invents a citation, it sounds like a citation because it has processed millions of real ones.

This connects directly to the confidence problem. AI does not hedge its fabrications. It presents invented content with the same unwavering tone it uses for verified content. A student reading the output has no signal that anything unusual has occurred. The AI did not flag uncertainty. It never does.

For a full explanation of why AI sounds confident regardless of accuracy, see Why AI Sounds Confident When It's Wrong.

The Classroom Impact

The practical response is direct: check citations. If a student submits work with sources, verify at least two of them in Google Scholar or a library database. A paper that does not exist cannot be found. This takes three minutes and is more reliable than any detector software currently available.
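For teachers who keep a submission's source list in a file, the spot check can be sketched as a small script. This is one possible workflow under stated assumptions: the titles are placeholders, `pick_for_spot_check` and `scholar_url` are hypothetical helpers, and the Google Scholar search address format is assumed from how the site's search links commonly appear. The script only builds links to open by hand; it does not confirm anything itself.

```python
import random
from urllib.parse import urlencode


def pick_for_spot_check(titles, k=2):
    """Choose up to k titles at random to verify by hand."""
    return random.sample(titles, k=min(k, len(titles)))


def scholar_url(title):
    """Build a Google Scholar search link for a title (assumed URL format)."""
    return "https://scholar.google.com/scholar?" + urlencode({"q": title})


# Placeholder titles standing in for a student's reference list.
student_titles = [
    "First cited title",
    "Second cited title",
    "Third cited title",
]

for title in pick_for_spot_check(student_titles):
    print(title, "->", scholar_url(title))
```

Choosing the titles at random means students cannot predict which sources will be checked.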

Beyond individual assignments, the hallucination problem is a reason to teach AI literacy directly rather than hoping students figure it out. A student who understands that the model is predicting plausible text, not retrieving verified facts, approaches AI output with appropriate scepticism. One who treats the tool as a knowledgeable assistant does not.

The AI Literacy mini-course covers how LLMs work at a conceptual level in Section 1, including why hallucination is a structural feature rather than a correctable flaw. For the complete mechanical picture, see How Does AI Work? A Teacher's Guide.

FAQ

Why does AI hallucinate?

AI hallucinates because it is optimised to predict plausible text, not verify facts. When its training data on a topic is thin, it generates words that sound correct for that type of document rather than signalling that it does not know.

Can hallucination be fixed?

Not entirely. Hallucination is a structural consequence of how large language models work. They predict probable text rather than retrieve verified facts. Newer models have reduced hallucination rates but have not eliminated them.

How can teachers spot hallucinated content in student work?

Check citations directly. Search for the book, article, or study the student referenced. AI frequently invents plausible-sounding but non-existent sources. If the citation cannot be found in a library database or Google Scholar, it may be fabricated.

Why do AI-generated citations look real?

Academic citations have a recognisable structure: author name, year, journal title, volume number. AI has processed millions of real citations during training and learned that structure well. It can generate text that follows the format perfectly while referencing a paper that does not exist.

Sources

- Center for Engaged Learning. AI Hallucinations Matter for More Than Academic Integrity. 2025. centerforengagedlearning.org

- LSE Business Review. When AI sounds right but is wrong: What Strawberry reveals. March 2026. lse.ac.uk

- Psychology Today. Can AI Really Think? October 2025. psychologytoday.com