Why AI Sounds Confident When It's Wrong

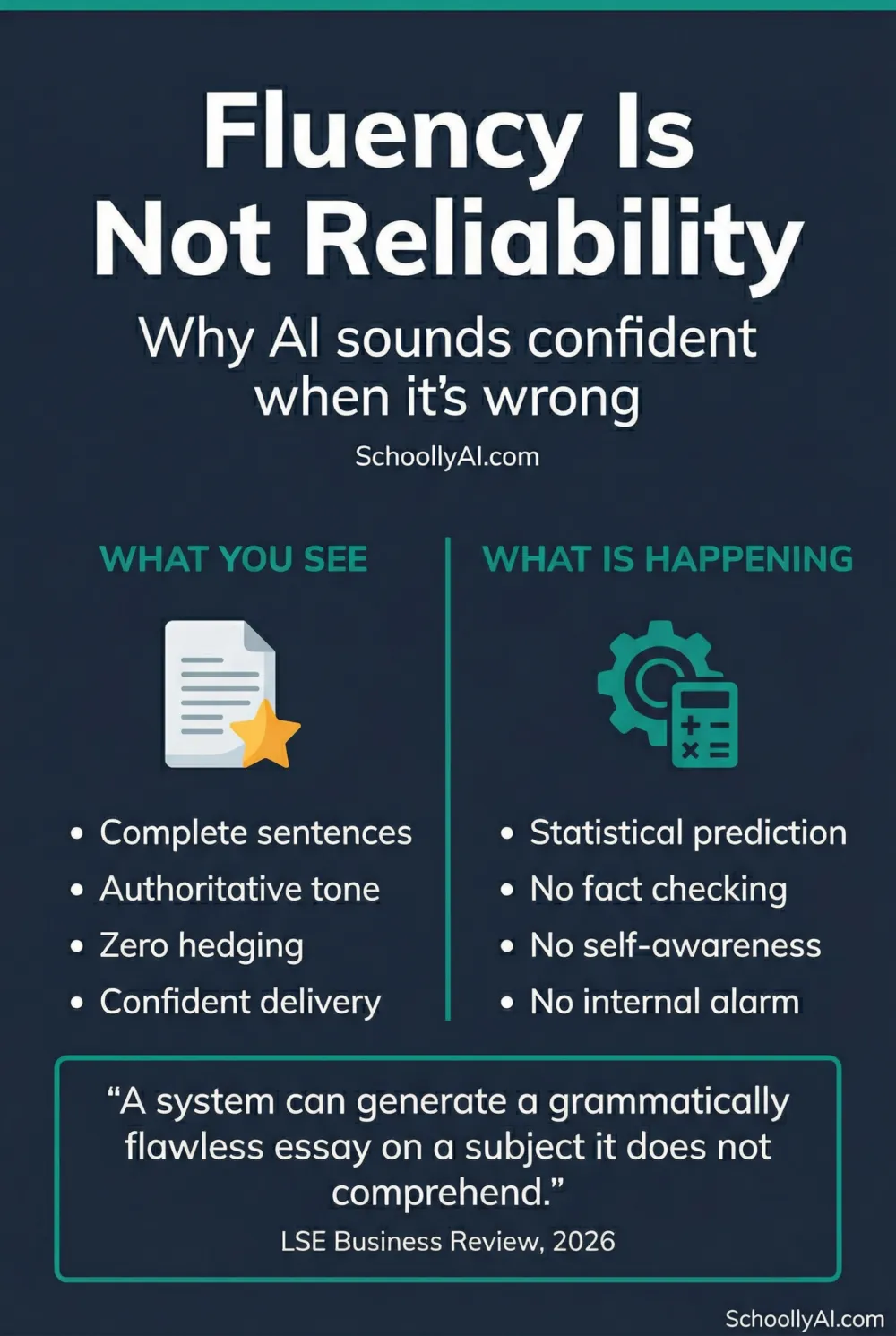

AI sounds confident when it's wrong because fluency is its primary job, not accuracy. Understanding why helps teachers stop being fooled by polished student submissions that contain fabricated facts stated with absolute certainty.

- AI is optimised for plausible text, not accurate text. These are different objectives.

- The model has no internal alarm that fires when its output contradicts reality.

- Confident, coherent language is an instinctive trust signal for human readers. AI produces it regardless of accuracy.

- The fix is not a detector. It is asking students to verify and defend their sources in person.

Fluency Is Not Reliability

The most disorienting thing about AI for teachers is not that it gets facts wrong. Every source gets facts wrong sometimes. The problem is that AI gets facts wrong while sounding completely certain, with the same polished, authoritative tone it uses when it is correct.

A 2026 analysis in the LSE Business Review put it precisely: a system can generate a grammatically flawless essay on a subject it does not comprehend (Poynton, LSE Business Review, March 2026). The polish is not evidence of understanding. It is a statistical output: the model producing the most probable sequence of words for that type of document.

Human readers are wired to read fluency as competence. Complete sentences, varied vocabulary, authoritative tone: these are signals we have learned to associate with expertise in human speakers. That shortcut fails with AI, which produces those signals regardless of whether the underlying content is accurate.

The Strawberry Problem

Ask a large language model how many times the letter "r" appears in "strawberry," and many models have confidently answered two. Ask it to spell the word and it writes S-T-R-A-W-B-E-R-R-Y, three r's in plain view. Ask the counting question again: still two. Then it writes you a coherent paragraph explaining where those two r's are.

This is not a bug. It is the architecture. The model processes text in chunks called tokens, often whole words or fragments of words, rather than individual characters. It does not see the letters inside a word, only the tokens that stand for it. So when it answers the counting question, it is not counting. It is predicting what the most statistically probable answer to that question looks like.
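To make the token idea concrete, here is a toy sketch in Python. The vocabulary and ID numbers are invented for illustration (real tokenizers learn far larger vocabularies from data and may split "strawberry" differently), but it shows the basic point: what reaches the model is a short list of numbers, not a sequence of letters.

```python
# Toy tokenizer. TOY_VOCAB is hypothetical: real tokenizers learn tens of
# thousands of pieces from data and may split words differently.
TOY_VOCAB = {"str": 101, "aw": 102, "berry": 103,
             "s": 1, "t": 2, "r": 3, "a": 4, "w": 5, "b": 6, "e": 7, "y": 8}

def tokenize(word: str) -> list[int]:
    """Greedy longest-match tokenization against TOY_VOCAB."""
    ids = []
    i = 0
    while i < len(word):
        # Try the longest vocabulary piece that matches at position i.
        for end in range(len(word), i, -1):
            piece = word[i:end]
            if piece in TOY_VOCAB:
                ids.append(TOY_VOCAB[piece])
                i = end
                break
        else:
            raise ValueError(f"no token for {word[i]!r}")
    return ids

ids = tokenize("strawberry")
print(ids)  # three opaque numbers; the letters themselves are not in the list
print("strawberry".count("r"))  # counting is trivial when you can see characters
```

Counting the r's is easy once you have the characters; the toy token list simply does not contain them, which is why the model ends up predicting an answer instead of counting.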

The confidence it displays is not based on having checked. It is based on having generated a response that sounds like a correct answer. Those are entirely different things, and the gap between them is exactly where student essays with fabricated citations live.

For the full mechanical explanation of why this happens, see How Does AI Work? A Teacher's Guide.

Why There Is No Internal Alarm

When a human student realises mid-sentence that their claim is unsupported, something fires: doubt, hesitation, the urge to hedge or check. AI has no equivalent. It has no metacognition, no capacity to reflect on what it knows versus what it is guessing.

When training data on a specific topic is sparse or absent, the model does not stop and signal uncertainty. It fills the gap with words that sound like they belong in that type of document. A historical essay prompt gets historical-sounding words, plausible names, plausible dates, plausible citations. The plausibility is real. The content may not be.
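A second toy sketch shows why nothing in the output signals doubt. The probabilities below are invented, and the sketch assumes the simplest decoding rule, always picking the most probable next word (greedy decoding). Real systems often sample instead, but either way the finished sentence carries no record of how close the race was.

```python
# Hypothetical next-word probabilities after the words "Signed in".
# All numbers are invented for illustration.
well_covered = {"1850": 0.92, "1851": 0.05, "1849": 0.03}
sparse_data = {"1743": 0.21, "1747": 0.20, "1751": 0.20, "1739": 0.20, "1745": 0.19}

def greedy_next_word(probs: dict[str, float]) -> str:
    """Return the single most probable word (greedy decoding)."""
    return max(probs, key=probs.get)

for probs in (well_covered, sparse_data):
    word = greedy_next_word(probs)
    top = probs[word]
    # The sentence a reader sees looks equally certain in both cases;
    # the winning probability (0.92 vs 0.21) never appears in the text itself.
    print(f"Signed in {word}.  (hidden top probability: {top:.2f})")
```

In the sparse case the model is effectively choosing among five near-equal guesses, yet the sentence it emits reads exactly as firmly as the well-covered one.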

As one observer put it directly: "If you tell an LLM 'don't say it if you don't know it,' the command is meaningless because the LLM doesn't know anything." It calculates what a person who does know something would probably say. The distinction matters enormously for anyone evaluating student work.

This is also why AI hallucinations are more dangerous than ordinary factual errors. A human source that gets something wrong usually signals its uncertainty somewhere: hedged language, a missing citation, an acknowledged gap. AI signals nothing. See Why Does AI Hallucinate Facts? for the full breakdown.

What This Means in Your Classroom

The practical implication is straightforward: fluent student writing is not evidence of correct student thinking. It never was, but AI has raised the stakes considerably. A student who outsourced their essay to a language model may have submitted something that simultaneously reads better than their previous work and contains more errors.

The response is not a better detector, since detection software has its own serious problems with false positives. The response is an oral defence. Ask the student to explain their argument, define words they used, and locate the sources they cited. AI cannot attend that conversation. A student who genuinely wrote the essay can. The difference is usually immediate and obvious.

This connects directly to a broader principle: teach students what AI actually is before expecting them to use it responsibly. If they understand that the model is optimising for plausibility rather than truth, they are far less likely to trust a confident-sounding fabrication uncritically. The AI Literacy mini-course covers this in Section 1.

FAQ

Why does AI sound confident when it's wrong?

AI is optimised to produce fluent, plausible-sounding text, not accurate text. It has no internal mechanism to verify facts before stating them. Confidence is a statistical output, not a signal of correctness.

Does AI know when it doesn't know something?

No. AI has no metacognition, no ability to reflect on its own knowledge gaps. When training data on a topic is sparse, it fills the gap with statistically plausible words rather than signalling uncertainty.

Why do people trust confident-sounding AI text?

Human brains are wired to associate confident, coherent language with genuine expertise. This is a biological shortcut that works well with human speakers but becomes a liability with AI, which produces fluent text regardless of whether the content is accurate.

What should I do when AI-generated errors show up in student work?

Treat them as a teaching opportunity about source verification. Ask the student to locate and confirm the specific claim independently. The oral defence, asking students to explain and defend their work verbally, remains the most reliable verification method.

Sources

- Poynton, T. When AI sounds right but is wrong: What Strawberry reveals. LSE Business Review, March 23, 2026. lse.ac.uk

- Psychology Today. Can AI Really Think? October 2025. psychologytoday.com

- RAND Corporation. More Students Use AI for Homework, and More Believe It Harms Critical Thinking. March 2026. rand.org