AI Cognitive Stunting: What the Research Says

Over 60% of students in a 2026 RAND Corporation report expressed concern that using AI was harming their own critical thinking skills (RAND, March 2026). That figure deserves more attention than it has received. Here is what the research actually says about AI and cognitive development, without the panic and without the dismissal.

- Cognitive stunting occurs when students use AI to bypass the productive struggle that builds skills, not when they use it after those skills are built.

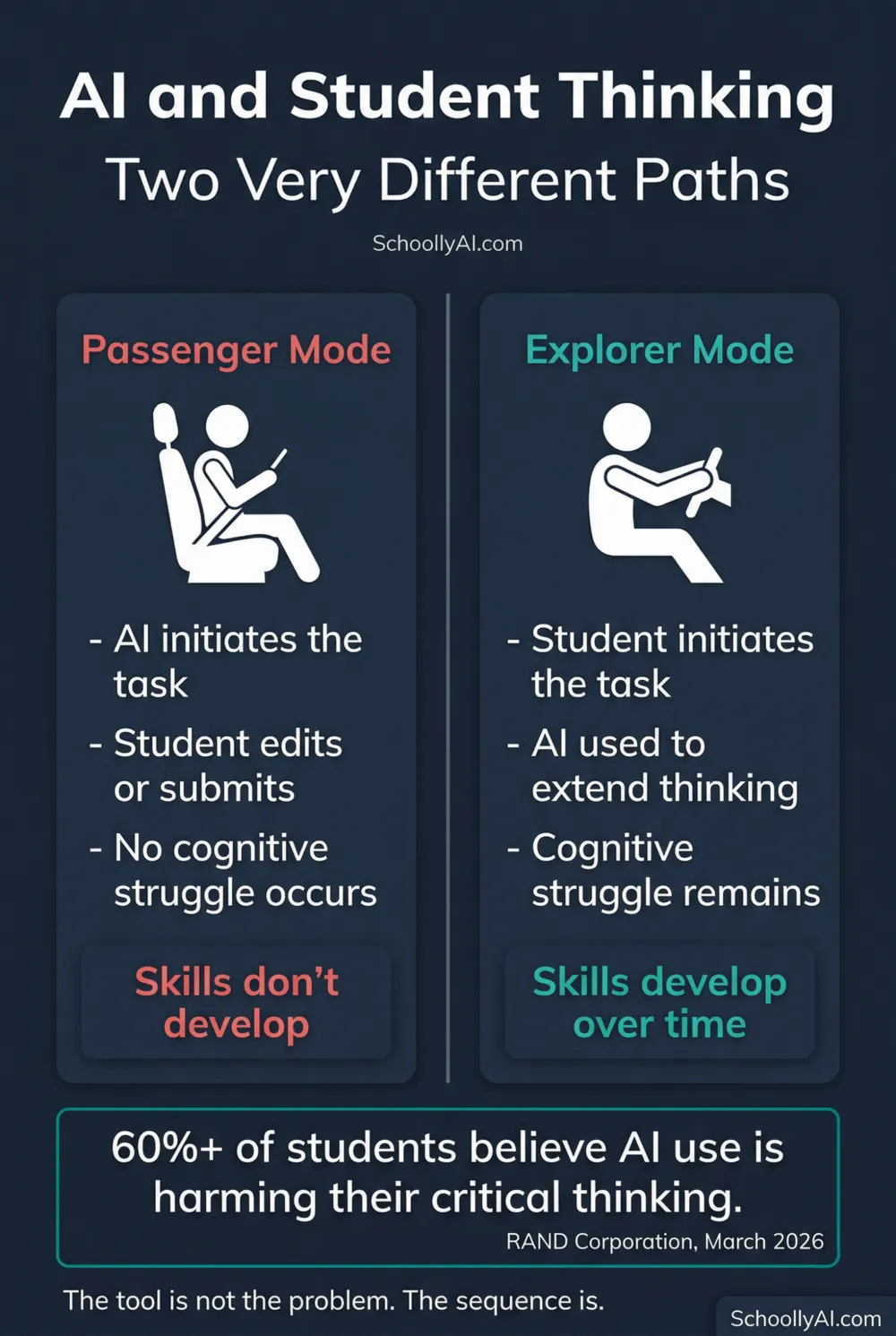

- Researchers describe a "passenger mode" pattern: students present but mentally disengaged, outsourcing initiation and synthesis to the machine.

- The calculator analogy holds: we do not give students calculators while they are learning arithmetic, because doing so undermines the learning process.

- The sequence matters more than the tool. AI used after skills are developed is different from AI used instead of developing them.

What Cognitive Stunting Means

Cognitive stunting is not a clinical diagnosis. It is a term researchers and educators have adopted to describe a specific pattern: students using AI to complete tasks that would normally require them to build a cognitive skill, and therefore never building that skill.

The mechanism is straightforward. When a student writes an essay, the pedagogical value is not in the finished document. It is in the friction of producing it: parsing the sources, forming an argument, finding the right structure, searching for the word that fits. That friction is where the learning happens neurologically. When a student outsources it to AI, they produce a document without doing any of the work that makes the document worth assigning.

This is not a new problem. The calculator debate in mathematics classrooms covers similar ground. We do not give students calculators during the phase when they are learning arithmetic because the computational struggle builds the numerical intuition that later makes calculators useful. AI in writing, research, and analysis raises the same question at a much larger scale.

One teacher on r/Teachers put it plainly: "We don't give students calculators to learn arithmetic because it undermines the learning process and learning outcomes. This is the same for AI tutoring."

What the Research Shows

The RAND Corporation has tracked AI use in schools across two major studies. Their 2025 report found AI adoption accelerating with minimal guidance to support it (RAND, 2025). Their March 2026 follow-up went further: a growing proportion of students reported believing that their AI use was actively harming their ability to think critically and independently (RAND, March 2026).

That second finding is worth sitting with. These are not researchers or parents expressing concern. These are students describing their own experience of their own cognition declining. They are noticing that they cannot initiate tasks the way they used to. They are noticing that they reach for the tool before they have tried.

Education Week reported in October 2025 that the rising use of AI in schools was coming with measurable downsides, not just in academic integrity terms but in student engagement and demonstrated skill development (Education Week, October 2025). Teachers across multiple studies reported that student writing was becoming more uniform, less personal, and less capable of the kind of original argument that good assignments are designed to produce.

Passenger Mode Learning

Researchers studying how students interact with AI tools have described two patterns of use. Explorer mode describes students who use AI to extend their thinking: to test an idea, find a gap in their argument, or discover a source they might not have found otherwise. These students are driving. The AI is a tool they pick up and put down.

Passenger mode describes something different. The student is present but not engaged. They paste the prompt, receive the output, make minor edits if any, and submit. The machine initiated the problem-solving. The machine synthesised the material. The student rode along. Only a small fraction of students in surveyed populations reported consistent explorer mode use. The majority described patterns closer to passenger mode.

The distinction matters because explorer mode use does not appear to produce the same concerns about skill development. The productive struggle is still happening, with the AI augmenting it rather than replacing it. Passenger mode use removes the struggle entirely, and the struggle is the point.

This also connects to why AI-generated text feels slightly off to experienced teachers. It is not wrong in any obvious way. It is smooth in a way authentic student work never quite is, because authentic student work contains the evidence of struggle. See "Words AI Uses Too Much: A Teacher's Blacklist" for the vocabulary patterns that mark AI output specifically.

What Teachers Can Do

The most direct response is assignment design. Tasks that require specific personal experience, local knowledge, or real-time observation are harder to outsource to AI. Asking a student to connect the material to something that happened in their community this week, or to argue against their own stated position, or to interview someone and integrate what they said: these are harder to delegate to a prediction engine.

The oral defence remains the most reliable verification tool currently available. If a student cannot explain their argument in person, the source of the written version is apparent. Building oral defence into regular assessment practice, not just as a response to suspected cheating, but as a normal part of demonstrating learning, reduces the incentive to outsource in the first place.

Teaching students what AI actually does is also worth the time. A student who understands that the model is producing statistically probable text, not thinking, not understanding, not checking, is better positioned to decide when using it serves them and when it does not. The AI Literacy mini-course covers this directly in Section 1, and "How Does AI Work? A Teacher's Guide" is a good starting point for the conversation in class.

The goal is not to ban AI. That is both impractical and probably counterproductive. The goal is to ensure that students develop the foundational skills that make any tool, including AI, genuinely useful to them. You cannot use a tool effectively to extend capabilities you have not built.

FAQ

What is cognitive stunting?

Cognitive stunting refers to the failure to develop foundational thinking skills when students use AI to complete tasks before building those skills themselves. When AI does the cognitive work (synthesising, organising, drafting), the student bypasses the neurological process that builds the underlying ability.

Is AI harming students' critical thinking?

Research increasingly says yes, when AI is used to replace rather than support thinking. A 2026 RAND Corporation report found that a growing number of students themselves believe AI use is harming their critical thinking skills.

What is passenger mode?

Passenger mode describes students who are physically present but mentally disengaged, relying on AI to initiate problem-solving and synthesise information on their behalf. They are in the vehicle but not driving. Researchers contrast this with explorer mode, where students use AI to deepen genuine curiosity.

Is AI use always harmful to learning?

Not always. The harm comes from using AI to bypass the productive struggle of learning, not from using it as a tool after skills are developed. Students who can already write, reason, and research can use AI as a productivity tool. Students who use it before developing those skills may not develop them at all.

Sources

- RAND Corporation. AI Use in Schools Is Quickly Increasing but Guidance Lags Behind. 2025. rand.org

- RAND Corporation. More Students Use AI for Homework, and More Believe It Harms Critical Thinking. March 2026. rand.org

- Education Week. Rising Use of AI in Schools Comes With Big Downsides for Students. October 2025. edweek.org