What Schools Are Getting Wrong About AI Policy

47% of parents report their child's school has provided no information about an AI policy. 57% have never been asked for input (Education Week, March 2026). Meanwhile, students in districts with strict AI bans are deliberately introducing typos into their own writing to avoid false-positive flags from detection software (r/Professors, 2026). The policy response to AI in schools has, in many places, made things measurably worse. Here is what is going wrong and what the districts getting it right are doing differently.

- Blanket AI bans are unenforceable, push use underground, and disproportionately harm students in under-resourced schools.

- AI detectors are not reliable enough for discipline. For TOEFL essays written by Chinese students, the false-positive rate is 61.3%. The tools discriminate by design.

- The districts getting this right replaced prohibition with sequenced AI literacy, teacher-led policy, and process-oriented assessment.

- Policy drafted without teacher input consistently fails in practice. Teachers are experiencing AI as a wicked problem. Administrators keep trying to solve it like a tame one.

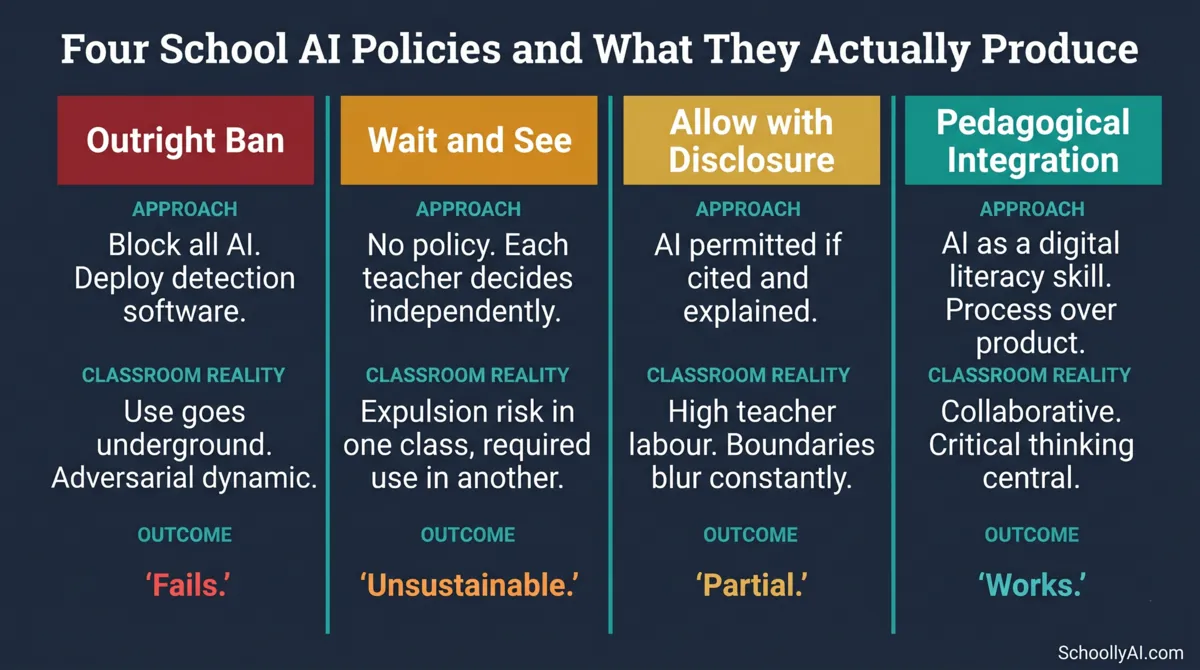

The Four Policy Approaches and What They Produce

Not all schools are handling this the same way. The range of approaches in 2025 and 2026 falls into four distinct categories, and the outcomes differ substantially.

| Policy approach | Classroom reality | Outcome |
|---|---|---|
| Outright ban | Pushes use underground. Creates an adversarial dynamic between students and teachers. | Fails. Easily bypassed. Disproportionately harms EAL and marginalised students. |
| Wait and see | No formal policy. A student may face expulsion in one class and be required to use AI in another. | Unsustainable. Leaves schools legally vulnerable and teachers entirely unsupported. |
| Allow with disclosure | AI permitted for brainstorming if students cite tools and explain their use. | Moderate success. Boundaries blur when AI rewrites student syntax without prompting. |
| Pedagogical integration | AI taught as a digital literacy skill. Assessments redesigned for process over product. | Most effective. Prepares students for the workforce while preserving cognitive development. |

The first two categories describe most schools. The fourth describes the ones producing the best outcomes. The gap between where most schools are and where the evidence points is the subject of this post.

The Diagnostic Error

The fundamental problem with school AI policy is not that administrators are unintelligent or indifferent. It is that they are consistently misdiagnosing the problem.

Research published by Taylor & Francis describes this as confusing a "tame problem" with a "wicked problem" (Taylor & Francis, 2025). A tame problem has a definable solution: write a rule, enforce it, monitor compliance. Administrators frequently frame AI in schools as a tame problem, which is why their first instinct is detection software, usage bans, and disciplinary procedures.

Teachers experience AI as a wicked problem. Wicked problems are defined by permanent uncertainty, shifting variables, and the need for continuous professional judgment rather than one-time policy fixes. The specific AI tool available to students changes every three months. Its capabilities change faster than curriculum cycles. Whether any particular use of AI in any particular assignment context constitutes academic misconduct depends on factors that cannot be captured in a district-wide policy document written by someone who has not been in a classroom recently.

Tame solutions applied to wicked problems produce the worst of both worlds: rules that look solid on paper and are functionally impossible to execute in a room of thirty diverse learners. I have been on the receiving end of these mandates. The gap between what the policy says and what is actually possible in a real classroom is not small.

A further structural problem is who writes these policies. District guidelines are frequently authored by consultants, technology vendors, or administrators who have not managed a classroom in over a decade (Medium, 2026). The resulting documents focus on risk management and institutional liability. They do not focus on instructional design because the people writing them are not instructional designers. For more on this specific problem, see Why Teachers Are Being Left Out of AI Policy Decisions.

The Calculator Analogy Is Wrong

When challenged on bans or restrictive policies, administrators frequently reach for the calculator analogy. AI, they argue, is like the calculator was in the 1970s: feared initially, eventually integrated, now unremarkable. The implication is that concerns about AI in schools reflect the same technophobia, and that integration is inevitable and harmless.

Educational researchers at the University of Western Australia debunked this analogy in August 2025 (University of Western Australia, August 2025). A calculator operates on bounded logic. Given a mathematical input, it produces one verifiable correct output. It does not hallucinate alternative answers. It does not invent plausible-sounding but incorrect results. It does not synthesise information from biased sources and present it with false confidence.

Generative AI is a statistical probability engine. It produces semantically plausible approximations. Those approximations regularly contain hallucinations, embedded bias, and synthesised misinformation stated with complete confidence. Treating AI output as equivalent to calculator output (factual, verifiable, reliable) encourages students to accept AI responses as truth rather than as probabilistic drafts requiring critical scrutiny. This is not a minor distinction. It is the entire difference between a tool that extends human capability and one that replaces human judgment with a very confident guess.

The calculator analogy is not just wrong. It is actively harmful when it informs policy, because it removes the urgency of teaching students to evaluate AI output critically.
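The contrast between bounded logic and a probability engine can be made concrete with a toy sketch. The prompt, the candidate words, and their probabilities below are invented purely for illustration and do not come from any real model; `calculator_add` and `toy_generate` are hypothetical names. The point is only that one function returns the same verifiable answer every time, while the other samples a plausible-sounding answer that is sometimes wrong.

```python
import random


def calculator_add(a: float, b: float) -> float:
    """Bounded logic: the same input always yields the same verifiable output."""
    return a + b


# Invented next-word probabilities for the prompt "The capital of Australia is".
# These numbers are assumptions for illustration, not measurements of a real model.
NEXT_WORD_PROBS = {"Canberra": 0.70, "Sydney": 0.25, "Melbourne": 0.05}


def toy_generate(rng: random.Random) -> str:
    """Sample one continuation in proportion to its assumed probability."""
    words = list(NEXT_WORD_PROBS)
    weights = list(NEXT_WORD_PROBS.values())
    return rng.choices(words, weights=weights, k=1)[0]


if __name__ == "__main__":
    # The calculator repeats itself exactly.
    print([calculator_add(2, 2) for _ in range(5)])
    # The toy generator can return a different, confidently stated answer each run.
    rng = random.Random()
    print([toy_generate(rng) for _ in range(5)])
```

Under these assumed weights, roughly three in ten sampled answers are wrong, and nothing in the output marks them as such. That is the scrutiny gap the calculator analogy hides.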

What Bans Actually Produce

The most striking finding from 2025 and 2026 is not that AI bans fail to stop AI use. That was predictable. It is what bans produce as a side effect: a perverse incentive structure that penalises the students who are working hardest.

When institutions rely on AI detection software to enforce prohibition policies, they create a system in which smooth, polished, grammatically correct writing raises suspicion. Detection tools flag well-edited human writing as machine-generated because their algorithms associate editorial quality with AI output. The practical consequence: high-achieving students who care about writing quality are now intentionally introducing typos, awkward phrasing, and grammatical errors into their wholly original work to avoid false-positive flags (r/Professors, 2026).

As one university professor described it: "The false positive problem is genuinely damaging since flagging a student writing about her own cancer diagnosis is hard to defend. The fact that students are now deliberately introducing typos just to pass as human says everything about how broken the system is."

The policy designed to protect academic integrity is producing a classroom where diligent students are penalised for writing well, while students who run AI output through humaniser tools score 0% on detection software and face no consequences at all. This is not a minor implementation problem. It is a fundamental failure of the entire approach.

A large-scale field experiment in high school mathematics made the cognitive cost explicit. Students with standard AI access performed better on daily practice assignments, but their exam scores dropped by 17% compared to students with no AI access (Packback, April 2025). The technology improved the product without building the underlying skill. When the scaffold was removed, the deficit was visible.

For the full analysis of what happens when detection software becomes the primary tool of AI policy, see The Real Consequences of School AI Bans.

A detailed webinar on the phases required to implement a collaborative, pedagogically sound district AI policy. Source: EdTechTeacher, 2026.

Who Detectors Actually Catch

The detection software problem is not just about false positives in the abstract. The data on who gets flagged most frequently makes this a civil rights issue.

A study reported by Thesify (2026) found a mean false-positive rate of 61.3% for TOEFL essays written by Chinese students, compared to 5.1% for essays written by native-born US students. ESL and EAL students rely on more formalised, structured grammatical patterns and a more limited vocabulary range because those are the patterns they were explicitly taught during language acquisition. Detection tools read that writing as machine-generated because it lacks the idiosyncratic vocabulary and irregular rhythm that detectors associate with native-speaker prose.

The baseline false-positive rate for the general student population is approximately 15% (r/PromptEngineering, 2026). Nearly one in six honest students risks a false accusation in any district using these tools for discipline. For international students, that rate is more than one in two.
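To see what these rates mean at classroom scale, here is a minimal arithmetic sketch. It takes the three false-positive rates quoted above and applies each to a hypothetical class of thirty students who all wrote their own work. The class size, the function names, and the assumption that flags are independent across students are illustrative choices, not findings from the cited sources.

```python
def expected_false_flags(class_size: int, false_positive_rate: float) -> float:
    """Expected number of honest students wrongly flagged on one assignment."""
    return class_size * false_positive_rate


def prob_at_least_one_false_flag(class_size: int, false_positive_rate: float) -> float:
    """Chance that at least one honest student is flagged.

    Assumes each student's result is independent of the others, which is a
    simplification made for this sketch.
    """
    return 1 - (1 - false_positive_rate) ** class_size


# Rates quoted in the text above; the labels are shorthand.
RATES = {
    "general student population (approx.)": 0.15,
    "TOEFL essays by Chinese students": 0.613,
    "essays by native-born US students": 0.051,
}

CLASS_SIZE = 30  # assumed class size, for illustration only

if __name__ == "__main__":
    for label, rate in RATES.items():
        flags = expected_false_flags(CLASS_SIZE, rate)
        p_any = prob_at_least_one_false_flag(CLASS_SIZE, rate)
        print(f"{label}: {flags:.1f} expected false flags, "
              f"P(at least one) = {p_any:.3f}")
```

At the 15% baseline, thirty honest students produce an expected 4.5 false flags on a single assignment (30 × 0.15), and the expectation repeats with every assignment that is scanned.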

In early 2026, an Executive MBA student filed a federal lawsuit against Yale University after the university suspended him based on a GPTZero detection result. The student, a non-native English speaker, argued the university used a biased algorithm to target him in violation of Title VI of the Civil Rights Act (Crowell & Moring, 2026). Whether or not this specific case succeeds, the legal theory it advances is sound: using a demonstrably biased tool to make disciplinary decisions about students is a form of algorithmic discrimination, and institutions that continue doing so are accumulating legal liability.

UC San Diego deactivated Turnitin's detection module in April 2025. Cornell University and the University of Pittsburgh have both explicitly recommended against using detector scores as the basis for disciplinary action. The institutional consensus is forming. The question is whether K-12 districts will follow before they face the same legal exposure.

For a full breakdown of how detectors fail mechanically, see AI Detector False Positives: What Teachers Need to Know.

The Equity Gap

AI bans do not affect all students equally. This is perhaps the most straightforward argument against blanket prohibition and it is almost entirely absent from the policy conversations happening in most districts.

Students in affluent districts whose families are technologically fluent will learn to use AI for sophisticated ideation, data synthesis, and research at home regardless of what the school policy says. Their parents can afford home internet, personal devices, and paid AI subscriptions. The school ban is an inconvenience at most.

Students in rural or high-poverty districts who rely entirely on school-provided technology will be blocked from accessing the tools their peers are using freely. When they enter the workforce, they will compete against graduates who have been using AI professionally since secondary school. The ban did not protect them. It widened the gap.

Data from the 2025-2026 academic year makes this explicit: nearly all low-poverty school districts will have trained their teachers on AI use by the end of the year, while only 60% of high-poverty districts will have done so (SchoolAI, 2026). The districts with the most resources are integrating. The districts with the fewest are banning. The equity gap in AI readiness is widening in the same direction as every other educational equity gap.

The workforce reality reinforces the urgency. Employers are not replacing humans with AI. They are replacing humans who do not know how to use AI with humans who do (r/AccusedOfUsingAI, 2026). A student who graduates without AI fluency from a school that banned the tools is not better prepared for the future. They are less prepared than a peer who spent the same years learning to use AI critically and responsibly. For the full analysis, see The AI Policy Equity Gap: Who Gets Left Behind.

What the Working Districts Are Doing

The districts producing the best outcomes have several things in common. They replaced prohibition with structured integration. They involved teachers in the policy drafting process. They redesigned assessments to evaluate process rather than just product. And they age-gated access rather than applying uniform rules across K-12.

Gwinnett County Public Schools, Georgia, implemented a K-12 AI learning progression in which Grade 1 students learn sequential logic by describing the steps to assemble a sandwich. By middle school they are using Python to program charts deciphering genetic codes. The district offers a rigorous three-course AI pathway at the high school level (Edutopia, 2026). The policy explicitly permits AI for research, foreign language development, and brainstorming, with the AI Assessment Scale (AIAS) framework built into assignment design.

Chicago Public Schools released its AI Guidebook in July 2024 with age-gated access by grade level. Elementary students use AI under strict supervision for character interviews. Middle school students run virtual experiments with oversight. High school students have independent access for essay feedback and synthesis (Playlab, 2026). By matching access to the developmental stage of the learner rather than applying a blanket rule, Chicago prevents early-childhood cognitive debt while building the research fluencies high school students need.

Boston Public Schools operates on a five-pillar framework: Academic Excellence, Cybersecurity, Ethics, Bias Acknowledgment, and Human Oversight. The district treats AI use as a case-by-case decision made by teachers based on specific learning goals rather than a blanket mandate. They maintain an approved tools list with a strict vendor vetting process and prioritise teacher autonomy over administrative control (Playlab, 2026).

Peninsula School District, Washington, uses the Human-AI-Human framework. Every use of AI in schools must start with human inquiry and end with human reflection and evaluation. The policy focuses on process documentation rather than final product surveillance, integrating Universal Design for Learning principles and requiring students to critically evaluate AI output rather than accept it (Washington OSPI, 2024).

The pattern across all four is the same: process over product, teacher agency over administrative surveillance, and AI literacy as a curriculum goal rather than an administrative threat. For detailed breakdowns of each approach and what made them work, see School AI Policy Examples That Actually Work.

What a Good School AI Policy Contains

Based on the research and the working district examples, a functional school AI policy needs to contain several things that most current policies do not.

Teacher input in the drafting process. Not token consultation. Actual collaborative drafting by people currently in classrooms. Policies written without this input consistently fail in practice because the people executing them had no say in their design.

AIAS-level assignment guidelines. Clear definitions of what AI use is permitted at each level of each assignment type, communicated to students before the assignment is given. Ambiguity is what most student AI misuse depends on. Remove it explicitly.

Age-appropriate access tiers. A policy that applies the same rules to Grade 2 and Grade 12 students has not been thought through. The developmental stage of the learner should determine access level.

A vendor vetting procedure. Any AI tool used in the classroom with student data must be evaluated against FERPA and COPPA requirements before deployment. The policy should specify this process and maintain an approved tools list.

An assessment redesign component. A policy that does not address assessment is incomplete. The take-home essay as currently structured is not viable in an AI world. The policy needs to acknowledge this and provide teachers with supported alternatives: process grading, oral defence, hyper-local assignments, and in-class writing.

Professional development that is actually delivered. Data shows 67% of schools report offering AI training to staff while 68% of teachers report they have never received any such training (SchoolAI, 2026). This is not a rounding error. It is a systemic failure. A policy that mandates AI integration without funding the training to support it is not a policy. It is a liability transfer.

A parent communication plan. 47% of parents have received no information about their school's AI policy. A policy that does not include a proactive parent communication strategy will encounter preventable conflicts when individual incidents arise.


FAQ

Why do blanket AI bans fail?

Blanket AI bans fail because they are unenforceable. AI is embedded into browsers, search engines, and basic productivity software. Students in districts with bans report the same or higher rates of AI use as students in districts with integration policies. Bans push use underground, create an adversarial dynamic between students and teachers, and disproportionately harm students in under-resourced schools who have no home access to the technology their peers in affluent districts are using freely.

Are AI detectors reliable enough to use for discipline?

No. False-positive rates above 30% have been documented for professional human writing. For TOEFL essays written by Chinese students, the false-positive rate is 61.3%, compared to 5.1% for native US students. UC San Diego deactivated Turnitin's detection module in April 2025. Cornell and the University of Pittsburgh have both explicitly recommended against using detector scores as the basis for disciplinary action. For the full breakdown, see AI Detector False Positives: What Teachers Need to Know.

What should a good school AI policy contain?

Teacher input in the drafting process, AIAS-level assignment guidelines, age-appropriate access tiers, a vendor vetting procedure for data privacy, an assessment redesign component, professional development that is actually delivered, and a parent communication plan. Policies drafted without teacher input consistently fail in practice.

Is generative AI just like the calculator?

No. A calculator operates on bounded logic to produce one verifiable output. Generative AI is a statistical probability engine that produces semantically plausible approximations that regularly contain hallucinations, bias, and synthesised misinformation. Treating AI output as equivalent to calculator output encourages students to accept AI responses as factual rather than as probabilistic drafts requiring critical scrutiny.

Which districts are getting AI policy right?

Gwinnett County, Georgia has a K-12 AI progression from foundational logic to a three-course high school AI pathway. Chicago Public Schools uses age-gated access by grade level. Boston Public Schools operates on a five-pillar framework with teacher-led case-by-case decisions. Peninsula School District in Washington uses the Human-AI-Human model requiring human reflection to close every AI interaction. Full breakdowns at School AI Policy Examples That Actually Work.

Why are teachers left out of AI policy decisions?

District AI policies are frequently drafted by consultants, technology vendors, or administrators who have not managed a classroom in over a decade. The structural barriers in education policy chronically exclude authentic teacher representation. The result is mandates that focus on risk management and institutional liability rather than instructional design. Data from 2025-2026: 67% of schools report offering AI training to staff while 68% of teachers report they have never received any such training. More on this at Why Teachers Are Being Left Out of AI Policy Decisions.

Sources

- r/Professors. Students Are Deliberately Writing Worse to Avoid AI Detection Flags. 2026. reddit.com

- Education Week. How Do Parents Want Schools to Handle AI? March 2026. edweek.org

- Taylor & Francis. The Wicked Problem of AI and Assessment. 2025. tandfonline.com

- Medium. Education Policy Has a Mic Problem. 2026. medium.com

- University of Western Australia. Generative AI Is Not a Calculator for Words. August 2025. uwa.edu.au

- Packback. Pulling Back the Curtains on Ethical and Pedagogical AI. April 2025. packback.co

- Thesify. How Professors Detect AI Writing. 2026. thesify.ai

- Crowell & Moring. Ivy League Lawsuit Centers on Alleged Impermissible Use of AI in Academia. 2026. crowell.com

- SchoolAI. Overcoming Teacher Resistance to AI in the Classroom. 2026. schoolai.com

- Edutopia. How Forward-Thinking Schools Are Shaping the Future of AI in Education. 2026. edutopia.org

- Playlab. District and School AI Policies. 2026. learn.playlab.ai

- Washington OSPI. A Practical Guide for Implementing AI in the Classroom. 2024. ospi.k12.wa.us

- Brookings Institution. Should Schools Ban or Integrate Generative AI? 2026. brookings.edu

- Education Week. 5 Best Practices for Crafting a School AI Policy. October 2025. edweek.org
