The AI Policy Equity Gap: Who Gets Left Behind

Nearly all low-poverty school districts will have trained their teachers on AI use by the end of the 2025-2026 academic year. Only 60% of high-poverty districts will have done so (SchoolAI, 2026). The districts with the most resources are integrating AI. The districts with the fewest are banning it. The students paying the price for that gap will not be the ones in affluent schools.

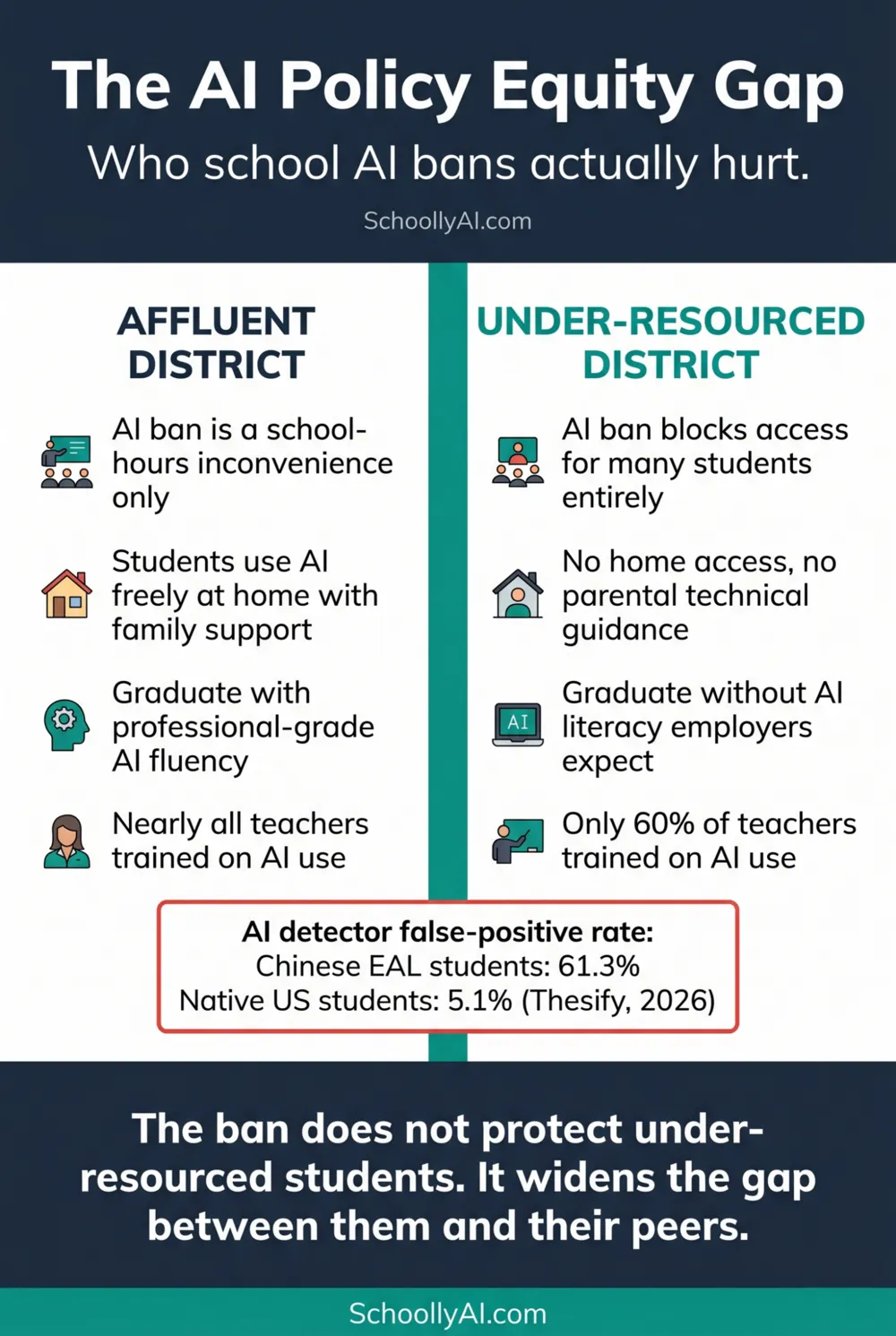

- Affluent students use AI freely at home regardless of school policy. Under-resourced students who rely on school-provided technology get blocked entirely.

- AI detection tools produce a 61.3% false-positive rate for Chinese EAL student essays and 5.1% for native US student essays. The tools discriminate by design.

- The workforce is not replacing humans with AI. It is replacing humans who cannot use AI with humans who can. A ban that denies AI literacy to under-resourced students is not protecting them.

- Equitable AI policy names who has access to what, provides devices and connectivity where students lack them, and treats AI literacy as a core curriculum goal rather than a privilege.

Two Realities in the Same Country

When a school district bans AI, the ban does not apply equally to all its students. It applies to the time those students spend in school buildings on school-provided devices. It does not follow them home.

Students in affluent households have parents who are professionally familiar with AI tools. They have personal devices, home internet connections, and in many cases direct exposure to AI in their parents' workplaces. When their school bans AI, they stop using it on school computers and continue using it everywhere else. By the time they graduate, they have several years of practical AI fluency that no school policy prevented.

Students in under-resourced households often rely on school-provided technology as their primary or only access point. When the school bans AI, those students are blocked entirely. They graduate with no meaningful exposure to the tools their employers will expect them to use from day one.

The equity gap this creates is not hypothetical. Data from the 2025-2026 academic year shows that nearly all low-poverty school districts will have trained their teachers on AI use by year end, while only 60% of high-poverty districts will have done so (SchoolAI, 2026). The districts with the resources to integrate are integrating. The districts without resources are defaulting to prohibition. The AI literacy gap is widening in the same direction as every other educational equity gap.

The Detection Disparity

The equity problem in school AI policy is not limited to access. It extends into the disciplinary systems schools use to enforce their AI rules.

AI detection tools produce a mean false-positive rate of 61.3% for TOEFL essays written by Chinese students, compared to 5.1% for essays written by native-born US students (Thesify, 2026). The disparity exists because detection algorithms flag low-perplexity writing, meaning text whose word choices are highly predictable, as machine-generated. ESL and EAL students tend to write in more formalised, structured patterns because those are the patterns they were explicitly taught during language acquisition. In effect, they are being penalised for following the rules of English they were taught.
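To make the mechanism concrete, here is a toy sketch of perplexity scoring. It is an illustration under stated assumptions, not how any commercial detector is implemented: real tools typically score text with a large language model, whereas this sketch swaps in a simple unigram word-frequency model with add-one smoothing. The reference corpus, the sample sentences, and the function names `train_unigram` and `perplexity` are all hypothetical.

```python
import math
from collections import Counter


def train_unigram(corpus_tokens):
    """Return a word-probability function with add-one smoothing (toy stand-in for a language model)."""
    counts = Counter(corpus_tokens)
    total = len(corpus_tokens)
    vocab_size = len(counts) + 1  # one extra slot reserves probability for unseen words

    def prob(token):
        return (counts[token] + 1) / (total + vocab_size)

    return prob


def perplexity(tokens, prob):
    """Exponentiated average negative log-probability: lower means more predictable text."""
    log_sum = sum(math.log(prob(t)) for t in tokens)
    return math.exp(-log_sum / len(tokens))


# Hypothetical reference text standing in for what the model has "seen".
reference = "the student wrote the essay and the teacher read the essay".split()
prob = train_unigram(reference)

# Hypothetical samples: one built from common, expected words, one from unexpected words.
formulaic = "the student wrote the essay".split()
idiosyncratic = "my cousin scribbled something wild".split()

formulaic_score = perplexity(formulaic, prob)
idiosyncratic_score = perplexity(idiosyncratic, prob)

print(f"formulaic perplexity:     {formulaic_score:.2f}")
print(f"idiosyncratic perplexity: {idiosyncratic_score:.2f}")
```

The sentence built from common, expected words scores lower. That is the direction in which a perplexity-based detector becomes more suspicious, which is why carefully conventional writing is at greater risk of being flagged.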

The practical consequence is that a school using detection software for disciplinary action has built a system that accuses international and EAL students of academic fraud at roughly twelve times the rate it accuses native speakers. That is not a minor calibration issue. It is a form of algorithmic discrimination that a Yale student turned into a federal civil rights lawsuit in early 2026 (Crowell & Moring, 2026).
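The "twelve times" figure follows directly from the two false-positive rates quoted above. A quick check, using only those two numbers (the variable names are illustrative):

```python
# False-positive rates cited in the text (Thesify, 2026).
chinese_toefl_fpr = 0.613  # TOEFL essays written by Chinese students
us_native_fpr = 0.051      # essays written by native-born US students

# How many times more often the first group is wrongly flagged.
disparity_ratio = chinese_toefl_fpr / us_native_fpr

print(f"Disparity ratio: {disparity_ratio:.1f}x")
```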

The baseline false-positive rate for the general student population is approximately 15% (r/PromptEngineering, 2026). Roughly one in seven honest students faces the risk of a false accusation in any district using these tools for discipline. For the international and EAL students who are already navigating the challenges of studying in a second language, the additional risk of algorithmic misidentification is not a theoretical concern. It is a concrete, documented, ongoing injustice.
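As a rough illustration of what a 15% baseline means in practice, the sketch below applies it to a hypothetical class of 30 students who all wrote their own work. The class size is an assumption chosen for illustration, and treating each essay as an independent 15% risk is a simplification, not a claim about how any specific tool behaves.

```python
baseline_fpr = 0.15  # approximate baseline false-positive rate cited in the text
class_size = 30      # hypothetical class in which every student wrote honestly

# Average number of honest students wrongly flagged per assignment.
expected_false_flags = baseline_fpr * class_size

# Chance that at least one honest student is flagged, assuming independent outcomes.
p_at_least_one = 1 - (1 - baseline_fpr) ** class_size

print(f"Expected false flags: {expected_false_flags:.1f}")
print(f"Chance of at least one false flag: {p_at_least_one:.1%}")
```

Under these assumptions, a false accusation somewhere in the class is close to certain on any given assignment.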

The Workforce Gap

The argument that AI bans protect students by preserving their foundational cognitive skills runs into an empirical wall: the skills being preserved are not the ones employers are prioritising.

The workforce is not replacing humans with AI. It is replacing humans who cannot use AI with humans who can (r/AccusedOfUsingAI, 2026). The ability to use AI tools critically and responsibly is not a luxury skill for a narrow range of technology jobs. It is increasingly a baseline expectation across a wide range of roles in healthcare, law, finance, education, engineering, and professional services.

A student who graduates from a school with a strict AI ban is not better prepared for that workforce than a peer who spent the same years learning to use AI critically. They are less prepared, and the gap compounds. The peer with AI fluency enters the workforce able to use tools their employer already relies on. The student who was banned exits school needing to learn on the job what they should have been learning in a supported classroom environment.

The White House 2026 National AI Legislative Framework specifically pushed for building an "AI-ready workforce" (Education Week, April 2025). The federal priority is clear: students need AI literacy. The students in schools with the bans are the ones most likely to be denied it, and those students are disproportionately the ones who already face the greatest educational disadvantages.

What Equitable AI Policy Does

Equitable AI policy starts by naming the access gap explicitly and treating its resolution as a precondition for any other policy element. A policy that defines what AI use is permitted without addressing whether all students have device and connectivity access to use it has not solved an equity problem. It has papered over one.

In North Dakota, career and technical education teachers used AI tools to streamline lesson planning, giving them back hours of administrative time that they shifted directly into face-to-face student engagement (Governing, November 2025). The equity benefit there is not direct student access to AI tools. It is the downstream effect of teachers having more time with the students who need them most. Equitable policy uses the technology in every way that produces better outcomes for the students with the least institutional support.

Equitable AI policy treats AI literacy as a curriculum goal with the same universality as reading literacy. Every student learns to read. Every student should learn to evaluate AI output critically, understand what AI can and cannot do, and use AI tools in ways that serve their own thinking rather than replace it. A policy that reserves AI fluency for students in affluent districts, or for students whose parents have professional AI experience, is not a neutral policy. It is a policy that transfers advantage.

For the full picture of what working districts have built and what they have in common, see School AI Policy Examples That Actually Work and the main policy analysis at What Schools Are Getting Wrong About AI Policy.

FAQ

Affluent students use AI at home regardless of school policy, building fluency their peers in under-resourced schools cannot access. Nearly all low-poverty districts are training teachers on AI. Only 60% of high-poverty districts are doing so. The AI literacy gap is widening in the same direction as every other educational equity gap.

Detection tools flag low-perplexity, grammatically precise writing as machine-generated. EAL students write in more formalised, structured patterns because those are the patterns they were taught during language acquisition. The result is a 61.3% false-positive rate for Chinese student essays compared to 5.1% for native US student essays. The tools discriminate by design, not by accident.

No. The workforce is replacing humans who cannot use AI with humans who can. A student who graduates without AI fluency from a school that bans AI is less prepared than a peer who spent the same years learning to use AI critically and responsibly. The ban denies students in under-resourced schools the preparation their peers in affluent schools are receiving regardless of school policy.

It names the access gap explicitly and treats device and connectivity access as a precondition for any other policy element. It treats AI literacy as a universal curriculum goal rather than a privilege. It uses AI tools to give teachers more time with the students who need them most. And it audits its disciplinary tools for bias before deploying them.

Sources

- SchoolAI. Overcoming Teacher Resistance to AI in the Classroom. 2026. schoolai.com

- Thesify. How Professors Detect AI Writing. 2026. thesify.ai

- Crowell & Moring. Ivy League Lawsuit Centers on Alleged Impermissible Use of AI in Academia. 2026. crowell.com

- Governing. North Dakota Schools Say AI Is Giving Teachers More Time With Students. November 2025. governing.com

- Education Week. What Trump's Draft Executive Order on AI Could Mean for Schools. April 2025. edweek.org

- SchoolAI. Why Banning AI in Schools Misses the Point. 2026. schoolai.com