School AI Policy Examples That Actually Work

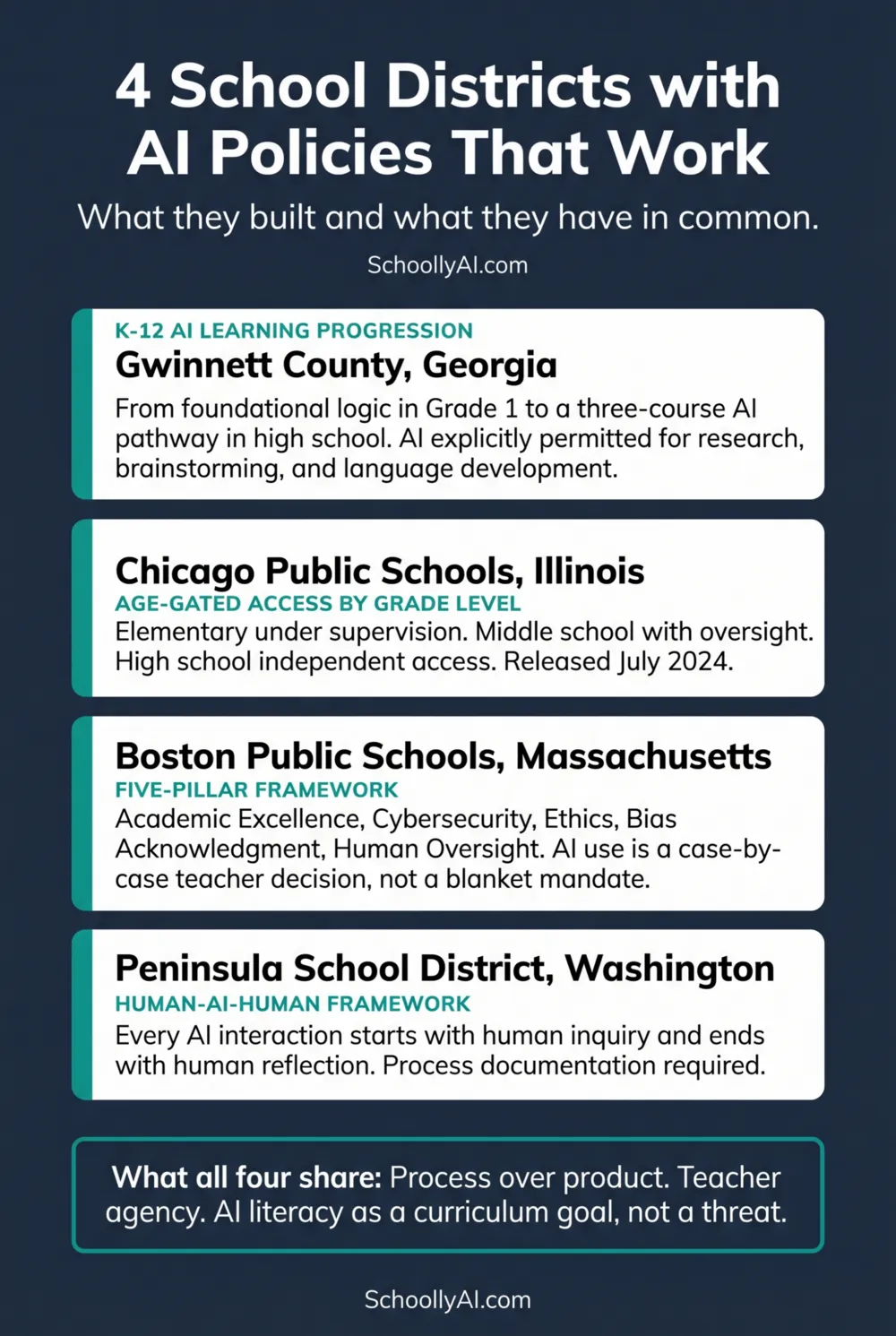

While most schools are either banning AI or waiting to see what happens, four districts have built functioning policy frameworks and are running them in live classrooms. Here is what they built and what they have in common.

- All four districts replaced prohibition with structured integration, treating AI literacy as a curriculum goal rather than an institutional threat.

- All four age-gate access in some form, recognising that the appropriate policy for an 8-year-old is not the same as for a 17-year-old.

- All four shift the assessment focus from the polished final product to the documented process of thinking.

- The common thread: teacher agency over administrative surveillance, with clear guidelines rather than blanket rules.

Gwinnett County Public Schools, Georgia: The K-12 Progression

Gwinnett County built the most structurally ambitious AI policy of any large US district, and it started with the most useful question: what should a student know about AI at each stage of their development, and how does that build year on year?

The answer is a learning progression that begins in Grade 1. First graders are not using AI tools. They are learning the foundational concept of sequential logic by describing the specific steps required to assemble a sandwich: a task that teaches procedural thinking without any technology. By middle school, students are using Python to program charts that decipher genetic codes. At Seckinger High School, a rigorous three-course AI pathway is available for students interested in building AI tools, not just using them (Edutopia, 2026).

The district policy explicitly permits AI for research, foreign language development, and brainstorming, with the AI Assessment Scale framework built into how specific assignments are labelled. Students know before they start an assignment what AI use is permitted on it. The ambiguity that drives most academic misconduct is removed at the assignment level, not managed through retrospective detection.

The Gwinnett model works because it treats AI as a subject to be taught progressively, not a tool to be permitted or denied uniformly across twelve grade levels with vastly different developmental contexts.

Chicago Public Schools: Age-Gated Access

Chicago Public Schools released its AI Guidebook in July 2024, making it one of the earliest large district policy frameworks in the US. The distinguishing feature of the Chicago approach is age-gating: the permitted level of AI autonomy scales explicitly with developmental stage rather than applying a uniform standard across 300,000 students (Playlab, 2026).

Elementary school students use AI only under direct teacher supervision, for specific structured tasks like character interviews where the teacher controls the interaction and debriefs the content. Middle school students access AI for virtual experiments and collaborative tasks, with teacher oversight maintained. High school students have independent access for essay feedback, synthesis of research, and exploratory work.
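For a school drafting its own version, the three tiers above could be written down as a simple lookup. The following is a minimal Python sketch, not Chicago's actual implementation: the grade cut-offs, the `AccessTier` fields, and the `tier_for_grade` helper are all assumptions made for illustration, since the source describes the tiers but not exact grade boundaries.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessTier:
    band: str
    supervision: str                 # level of adult oversight required
    example_uses: tuple[str, ...]    # uses named in the article for this band


# Hypothetical grade bands (0 = kindergarten). The cut-offs are assumptions.
TIERS = (
    (range(0, 6), AccessTier(
        "elementary", "direct teacher supervision",
        ("structured character interviews",))),
    (range(6, 9), AccessTier(
        "middle", "teacher oversight",
        ("virtual experiments", "collaborative tasks"))),
    (range(9, 13), AccessTier(
        "high", "independent access",
        ("essay feedback", "research synthesis", "exploratory work"))),
)


def tier_for_grade(grade: int) -> AccessTier:
    """Return the access tier for a grade from 0 (kindergarten) to 12."""
    for grades, tier in TIERS:
        if grade in grades:
            return tier
    raise ValueError(f"grade out of range: {grade}")
```

Calling `tier_for_grade(3)` returns the elementary tier and `tier_for_grade(11)` returns the high school tier. Keeping the bands in one table means a district can move a cut-off without touching the rest of its guidance.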

The logic is developmental: an 8-year-old does not yet have the critical evaluation skills to assess AI output independently. A 17-year-old can develop those skills and should be doing so in a supported classroom environment rather than encountering AI for the first time in an unsupported workplace. Chicago's framework builds the scaffolding that makes independent high school use responsible rather than risky.

The age-gating approach is designed to prevent early-childhood cognitive debt without banning AI from the educational environment entirely. Young children build foundational skills in structured, supervised AI interactions rather than developing an unexamined reliance on the tool before they have the critical thinking skills to question its output.

Boston Public Schools: The Five-Pillar Framework

Boston Public Schools released its AI guidance in August 2025, and the framework reflects a deliberate philosophy: AI policy in a school district is not primarily a technology decision. It is an educational values decision.

The five pillars are Academic Excellence, Cybersecurity, Ethics, Bias Acknowledgment, and Human Oversight (Playlab, 2026). Each one reflects a specific commitment. Academic Excellence means AI use must serve genuine learning outcomes, not just grade outcomes. Cybersecurity means students and teachers are protected through vendor vetting and data privacy compliance. Ethics and Bias Acknowledgment mean AI tools are examined for what they encode and what populations they disadvantage. Human Oversight means a person is always accountable for what AI produces.

Critically, the Boston policy treats AI use as a case-by-case decision made by teachers based on specific learning goals rather than a blanket mandate applied district-wide. This is the teacher-agency principle in practice. The policy provides a framework of values and a list of approved, vetted tools. The judgment about whether AI serves the learning goal of a specific assignment on a specific day with a specific class is left to the professional in the room.

The district maintains an approved tools list with a strict vendor vetting process. No tool enters the classroom without being evaluated for data privacy compliance. This protects students and removes the ambiguity teachers face when well-intentioned colleagues use unapproved tools with student data.

Peninsula School District, Washington: Human-AI-Human

Peninsula School District's framework is the most conceptually clear of the four. Every AI interaction in a school context must begin with human inquiry and close with human reflection. The AI occupies the middle of the process. It does not start it and it does not conclude it (Washington OSPI, 2024).

The practical application is straightforward. A student cannot open an AI tool and ask it to generate an essay plan. They must first do the human work of forming a question, identifying what they already know and do not know, and articulating what they need. The AI then assists with specific, bounded tasks. The student then does the human work of critically evaluating the AI output, integrating it with their own thinking, and taking responsibility for what they submit.

The framework integrates Universal Design for Learning principles, which means it is designed from the start to serve students with varying abilities and learning needs. AI tools are positioned as accessibility supports and cognitive scaffolds, not shortcuts. The assessment focus is on the process documentation: what the student did before the AI, what they asked the AI, what they changed or rejected from the AI output, and what they concluded independently.

The Human-AI-Human model sidesteps the detection problem entirely. There is nothing to detect when the policy requires evidence of the human-authored components at every stage. A student who submits without the process documentation has failed to meet the assignment requirements regardless of whether a detector flags the content.
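The process documentation described above can be treated as a completeness check rather than a detection problem. The sketch below is one hypothetical way to model it in Python. The `ProcessLog` fields and the `missing_parts` helper are illustrative names, not part of Peninsula's published framework. They mirror the four elements the article lists: work done before the AI, what was asked, what was changed or rejected, and what was concluded independently.

```python
from dataclasses import dataclass, field


@dataclass
class ProcessLog:
    # Field names are assumptions chosen to mirror the four documented stages.
    before_ai: str = ""                # question formed, what is known and unknown
    prompts: list[str] = field(default_factory=list)   # what the student asked the AI
    changed_or_rejected: str = ""      # what was edited or rejected from the output
    independent_conclusion: str = ""   # what the student concluded on their own


def missing_parts(log: ProcessLog) -> list[str]:
    """Return the names of documentation elements that are empty."""
    missing = []
    if not log.before_ai.strip():
        missing.append("before_ai")
    if not log.prompts:
        missing.append("prompts")
    if not log.changed_or_rejected.strip():
        missing.append("changed_or_rejected")
    if not log.independent_conclusion.strip():
        missing.append("independent_conclusion")
    return missing


def meets_requirements(log: ProcessLog) -> bool:
    """A submission is complete only when every stage is documented."""
    return not missing_parts(log)
```

A check like this only confirms that each stage was documented. Judging the quality of the thinking in each field remains the teacher's job.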

What to Take from These Examples

No district policy can be copied wholesale into a different institutional context. Gwinnett's K-12 progression works partly because it was built with the specific community and staffing of Gwinnett County in mind. But the structural decisions these districts made are transferable.

- Age-gate access rather than applying uniform rules across K-12.

- Build AI literacy into curriculum progressively rather than treating it as a one-time training event.

- Give teachers clear, specific guidance at the assignment level rather than vague principles.

- Evaluate AI tools for bias and data privacy before deploying them.

- Design assessment to evaluate process rather than polished final product.

- Keep a human accountable at the start and end of every AI-assisted task.

None of those decisions require a large budget or a dedicated technology department. They require an institutional willingness to accept that the old approach is not working and to involve the people closest to the problem in building the replacement.

For the full analysis of what most schools are doing instead and why it is failing, see "What Schools Are Getting Wrong About AI Policy". For a look at who gets hurt most by the failure, see "The AI Policy Equity Gap: Who Gets Left Behind".

FAQ

Which school districts have AI policies that work?

Gwinnett County, Georgia has a K-12 AI learning progression from foundational logic in Grade 1 to a three-course high school pathway. Chicago Public Schools uses age-gated access by developmental stage. Boston Public Schools operates on a five-pillar framework with teacher-led case-by-case decisions and a vetted tools list. Peninsula School District in Washington uses the Human-AI-Human model requiring human inquiry and human reflection to frame every AI interaction.

What do these policies have in common?

All four districts share: AI literacy treated as a curriculum goal rather than a compliance problem, teacher agency over administrative surveillance, age-appropriate access rather than uniform rules, assessment focused on process documentation rather than polished final product, and a vendor vetting procedure for data privacy.

What is the Human-AI-Human model?

Peninsula School District's framework requires every AI interaction to begin with human inquiry and close with human reflection. The student forms a question and articulates what they know before opening any AI tool. After AI assistance, the student critically evaluates the output, integrates it with their own thinking, and takes responsibility for what they submit. The process documentation is part of the assessment.

How does age-gated AI access work in Chicago Public Schools?

Elementary students access AI only under direct teacher supervision for specific structured tasks. Middle school students use AI for experiments and collaborative work with teacher oversight. High school students have independent access for essay feedback, research synthesis, and exploratory tasks. The permitted autonomy scales with the student's developmental stage and critical evaluation capacity.

Can other schools adopt these approaches?

Yes. The structural decisions these districts made are transferable without requiring large budgets or technology departments: age-gate access, build AI literacy into curriculum progressively, give teachers clear assignment-level guidance, evaluate tools for bias and data privacy, design assessments for process rather than product, and keep a human accountable at the start and end of every AI-assisted task.

Sources

- Edutopia. How Forward-Thinking Schools Are Shaping the Future of AI in Education. 2026. edutopia.org

- Playlab. District and School AI Policies. 2026. learn.playlab.ai

- Washington OSPI. A Practical Guide for Implementing AI in the Classroom. 2024. ospi.k12.wa.us

- Education Commission of the States. Artificial Intelligence Technologies in Education. July 2025. ecs.org

- Education Week. 5 Best Practices for Crafting a School AI Policy. October 2025. edweek.org