The Real Consequences of School AI Bans

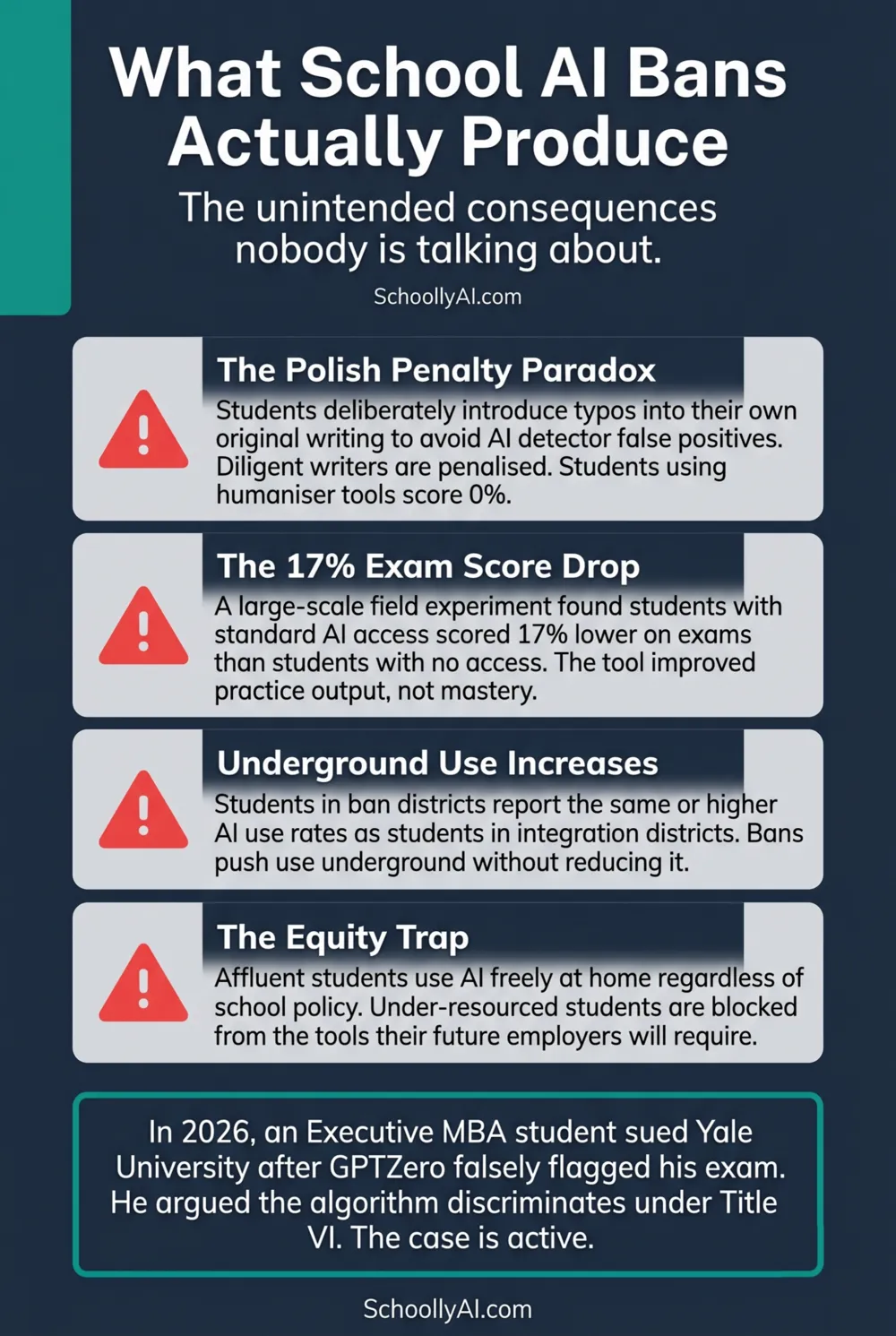

Students in schools with strict AI bans are deliberately introducing typos, awkward phrasing, and grammatical errors into their own original writing to avoid false-positive flags from detection software (r/Professors, 2026). That is what a ban produces. Not less AI use. Less good writing.

- Blanket bans do not reduce AI use. Students in ban districts report AI use rates equal to or higher than those of students in integration districts.

- Detection software punishes the students writing most carefully while students using humaniser tools score 0% and face no consequences.

- A field experiment found a 17% exam score drop in students with standard AI access compared to those with none. The tool improved daily output without building underlying knowledge.

- The Yale lawsuit exposed the legal liability of using biased detection tools for disciplinary action. The case is still active in 2026.

What Bans Cannot Do

A blanket AI ban assumes two things: that students will comply, and that compliance can be verified. Both assumptions are wrong in 2026.

AI is not a standalone application students can be blocked from accessing on school devices. It is embedded into browsers, search engines, writing tools, and basic productivity software. Google Docs has AI writing suggestions built in. Grammarly rewrites student prose without being explicitly prompted. Microsoft Word's Copilot is available in the same interface students use to type their essays. A school that bans AI is attempting to ban a capability that is now embedded in the infrastructure of writing itself.

The compliance problem is just as intractable. 30% of students report using AI daily in 2026, a figure that rises regardless of school policy (Programs.com, 2026). Students in districts with explicit bans report using AI at rates equal to or higher than those of students in districts with integration policies. The ban does not reduce use. It changes where the use happens and whether students feel any obligation to be transparent about it.

What bans do produce is an adversarial dynamic. Teachers are positioned as surveillance agents rather than educators. Students learn to hide their tool use rather than develop judgment about it. The relationship damage from a single false-positive accusation can take months to repair, if it is repaired at all.

The Polish Penalty Paradox

The most striking unintended consequence of detection-enforced bans is what researchers have started calling the polish penalty: AI detectors flag well-edited, grammatically precise writing as machine-generated because their algorithms associate editorial quality with AI output.

The practical result is students deliberately degrading their own original work. They introduce typos. They use simpler vocabulary than they are capable of. They leave in the awkward phrasing they would normally revise out. As one university professor described the situation: "The false positive problem is genuinely damaging since flagging a student writing about her own cancer diagnosis is hard to defend. The fact that students are now deliberately introducing typos just to pass as human says everything about how broken the system is." (Medium, 2026)

The paradox runs in both directions simultaneously. Diligent students who care about writing quality are penalised for editorial effort. Students who generated their work using AI and then ran it through a humaniser tool score 0% on detection software and face no scrutiny. The policy designed to protect academic integrity is functioning as a penalty on authentic achievement.

For a full breakdown of how detection tools fail mechanically and who they disproportionately flag, see AI Detector False Positives: What Teachers Need to Know.

The Cognitive Debt Finding

The argument that AI bans protect learning outcomes runs into a specific empirical problem: the evidence for cognitive harm from AI use is not evidence for cognitive protection from bans. It is evidence for the need for better-configured AI use.

A large-scale field experiment evaluated the impact of standard, unconstrained AI access on high school mathematics students. Students with AI access performed better on daily practice assignments, but their exam scores were 17% lower than those of students with no AI access (Packback, April 2025). The tool improved their daily output without building the underlying knowledge. When the scaffold was removed for the exam, the deficit was visible.

This finding is real, and administrators who cite it in defence of bans are not wrong about the risk. But the study did not test Slow AI, where AI is constrained by a Socratic control prompt and used to force retrieval rather than replace it. The evidence for structured AI tutoring points in the opposite direction: a Harvard University trial found AI-tutored students achieved higher mastery in less time than classroom-instructed peers (Kestin et al., Science, 2025).

The cognitive debt problem is a problem with unconstrained Fast AI. The solution is structured Slow AI, not prohibition. A ban that prevents both the harmful and the beneficial uses of the technology is not a solution. It is a refusal to teach the distinction.
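To make the idea of a Socratic control prompt concrete, here is a minimal sketch of how one might be attached to every student request. The prompt wording, the `SOCRATIC_CONTROL_PROMPT` name, and the `build_messages` helper are all hypothetical illustrations, not taken from the studies cited above. The role/content message layout is a common chat convention assumed here, and no specific provider's API is called.

```python
# Hypothetical wording: an illustration only, not the prompt used in any cited study.
SOCRATIC_CONTROL_PROMPT = (
    "You are a tutor. Do not give final answers or write the student's work. "
    "Ask one guiding question at a time, and ask the student to recall the "
    "relevant concept before you offer a hint."
)


def build_messages(student_question: str) -> list[dict[str, str]]:
    """Prepend the control prompt so every request carries the same constraints."""
    return [
        {"role": "system", "content": SOCRATIC_CONTROL_PROMPT},
        {"role": "user", "content": student_question},
    ]


if __name__ == "__main__":
    # The student's question travels with the constraint, never on its own.
    for message in build_messages("How do I factor x**2 - 9?"):
        print(f"{message['role']}: {message['content']}")
```

The design choice worth noting is that the constraint lives in the configuration rather than in the student's goodwill: the same tool produces retrieval practice or finished answers depending on what is placed in front of the question.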

The Yale Lawsuit

In early 2026, an Executive MBA student filed a federal lawsuit against Yale University after the university suspended him based on a GPTZero detection result on a final exam. The student, a non-native English speaker, argued that the university had used a demonstrably biased algorithm to make a disciplinary determination against him, constituting discrimination under Title VI of the Civil Rights Act (Crowell & Moring, 2026).

The legal theory is straightforward. Detection tools produce false-positive rates of 61.3% for TOEFL essays written by Chinese students compared to 5.1% for essays by native US students. Using a tool with that disparity to make disciplinary decisions that disproportionately harm international students is not a neutral institutional act. It is a form of algorithmic discrimination with legal consequences.
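The size of that disparity is easier to see as arithmetic. The sketch below uses only the two false-positive rates quoted above. The cohort size of 30 is a hypothetical figure chosen for illustration, and the calculation assumes every essay is human-written and that flags are independent.

```python
# False-positive rates quoted in the text (share of human-written essays flagged).
FPR_NON_NATIVE = 0.613  # TOEFL essays written by Chinese students
FPR_NATIVE = 0.051      # essays by native US students


def disparity_ratio(rate_a: float, rate_b: float) -> float:
    """How many times more often group A is falsely flagged than group B."""
    return rate_a / rate_b


def expected_false_flags(honest_writers: int, false_positive_rate: float) -> float:
    """Expected number of honest writers wrongly flagged at a given rate."""
    return honest_writers * false_positive_rate


if __name__ == "__main__":
    cohort = 30  # hypothetical cohort size, not a figure from the article
    ratio = disparity_ratio(FPR_NON_NATIVE, FPR_NATIVE)
    print(f"Disparity ratio: {ratio:.1f}x")
    print(f"Expected false flags, non-native cohort: "
          f"{expected_false_flags(cohort, FPR_NON_NATIVE):.1f} of {cohort}")
    print(f"Expected false flags, native cohort: "
          f"{expected_false_flags(cohort, FPR_NATIVE):.1f} of {cohort}")
```

Under those assumptions the non-native group is flagged roughly twelve times as often, which is the disparity the discrimination claim rests on.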

Whether or not this specific case succeeds in court, the institutional logic it challenges is already being abandoned by the most legally cautious universities. UC San Diego deactivated Turnitin's detection module in April 2025. Cornell and Pittsburgh have both formally recommended against using detector scores for disciplinary action. The direction of institutional movement is clear. K-12 districts that continue using detection-based discipline are accumulating liability while the universities that set those practices are walking them back.

For the full discussion of the policy implications and what teachers should use instead of detectors, see What Schools Are Getting Wrong About AI Policy.

What Works Instead

The alternative to a ban is not no policy. It is a policy focused on what actually changes student behaviour and learning outcomes: explicit expectations, redesigned assessment, and AI literacy taught as a curriculum goal.

The districts producing the best outcomes in 2026 share a common structure. They define what AI use is permitted at each level of each assignment type before the assignment is given, using a framework like the AIAS. They redesign major assessments to evaluate the process of thinking rather than just the polished final product. They treat AI literacy as something to be taught rather than something to be feared. And they involve teachers in the policy process rather than handing down mandates from administrators who are not in the room.

None of this is technically complex. It requires institutional will and a willingness to accept that the take-home essay as currently structured is not viable in an AI world. That is not a small ask. But it is a smaller ask than sustaining a policy that is producing the polish penalty paradox, the equity gap, and the legal exposure of the Yale lawsuit simultaneously.

For the detailed breakdown of the four districts with working policies, see School AI Policy Examples That Actually Work.

FAQ

Do school AI bans reduce student AI use?

No. Students in ban districts report AI use rates equal to or higher than those of students in integration districts. Bans push use underground and remove transparency without reducing the behaviour. 30% of students report daily AI use in 2026 regardless of school policy.

What is the polish penalty paradox?

AI detectors flag well-edited, grammatically precise writing as machine-generated. Students who care about writing quality are penalised for editorial effort, while students who run AI output through humaniser tools score 0% and face no scrutiny. The policy designed to protect academic integrity penalises authentic achievement.

What is the Yale lawsuit about?

An Executive MBA student filed a federal lawsuit after Yale suspended him based on a GPTZero detection result. The student, a non-native English speaker, argued the university used a demonstrably biased algorithm in violation of Title VI of the Civil Rights Act. Detection tools produce a 61.3% false-positive rate for Chinese student essays compared to 5.1% for native US student essays.

Does AI use harm learning?

Unconstrained AI use does. A field experiment found a 17% exam score drop in students with standard AI access. Structured Socratic AI use produces the opposite result: a Harvard trial found AI-tutored students achieved higher mastery in less time than classroom-instructed peers. The issue is configuration, not the tool itself.

Sources

- r/Professors. Students Are Deliberately Writing Worse to Avoid AI Detection Flags. 2026. reddit.com

- Programs.com. The Latest AI in Education Statistics. 2026. programs.com

- Medium / Teacher Toolkit. The Honest Truth About AI Detectors in Schools. 2026. medium.com

- Packback. Pulling Back the Curtains on Ethical and Pedagogical AI. April 2025. packback.co

- Kestin, G. et al. AI Tutoring Outperforms Active Learning. Science, 2025. science.org

- Crowell & Moring. Ivy League Lawsuit Centers on Alleged Impermissible Use of AI in Academia. 2026. crowell.com