What AI Detectors Actually Measure (And Don't)

AI detector accuracy is the central question behind every academic misconduct decision made with these tools, and most people answering that question are working with vendor marketing rather than independent research. The honest answer is that detection tools measure statistical patterns in text, not authorship. And the statistical patterns they flag are the same ones that appear in careful, formal human writing. This post is the technical foundation behind the broader problem covered in AI Detectors Are Failing Honest Students.

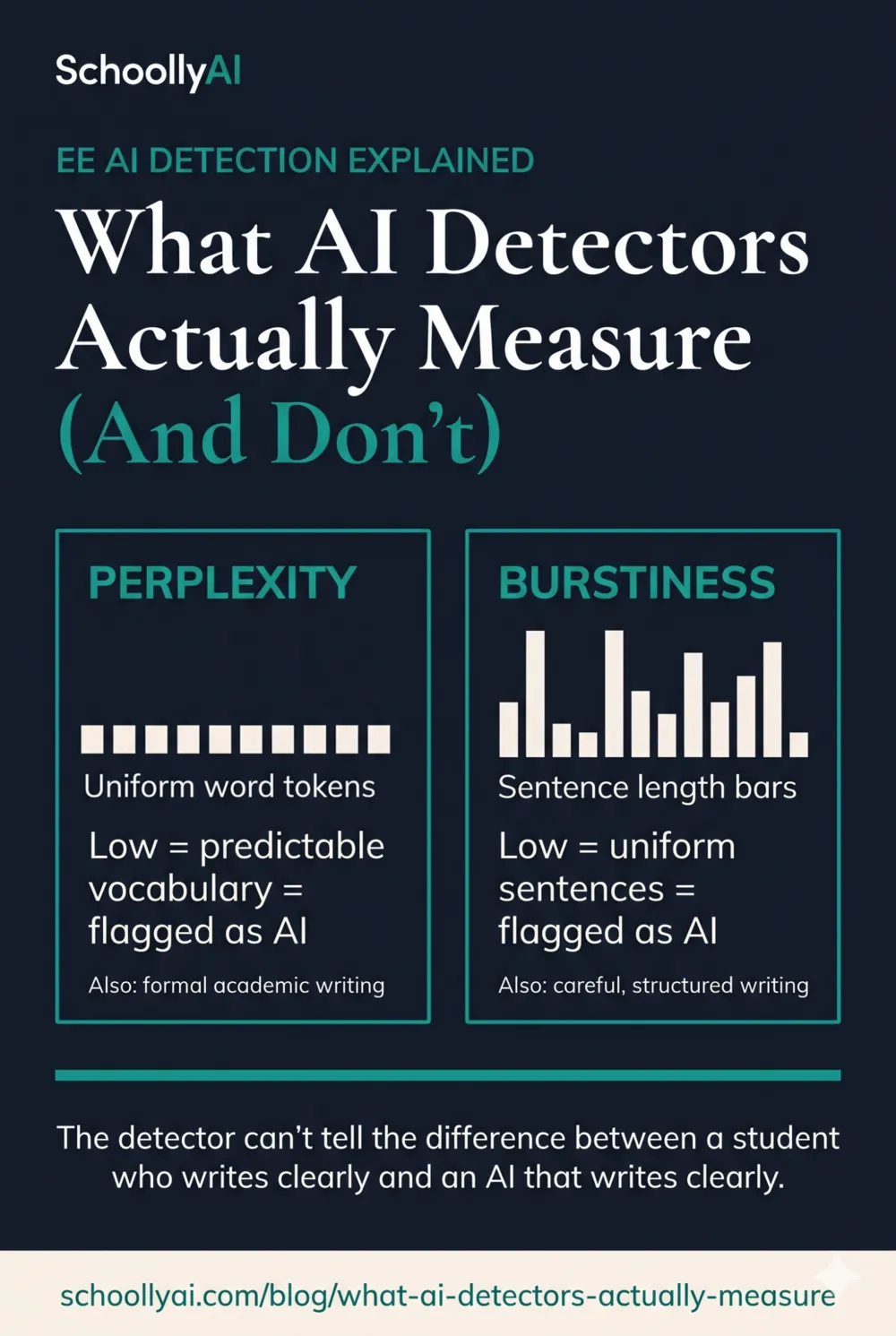

- AI detectors measure perplexity (vocabulary predictability) and burstiness (sentence length variation), not authorship.

- Detection accuracy drops to 60-80% once a student manually edits AI text, and 10-20% of authentic human writing is flagged as AI in independent testing.

- A 30% AI score does not mean 30% of the paper was written by an AI. It means 30% of sentences matched statistical patterns associated with AI output.

- Different detectors produce contradictory results on identical text. Copyleaks scored 100% accuracy on one dataset and 0% on a modified version of the same text.

- Human reviewers are barely better than chance at identifying AI writing without tools, which means the "human-in-the-loop" safeguard often compounds errors rather than correcting them.

Detection tools measure two statistical signals: perplexity and burstiness. Both signals are low in AI output and in careful, formal human writing. The tool cannot tell the difference.

When a Teacher Gets an 80% AI Score, This Is What It Actually Found

A teacher sees a detection report saying a paper is 80% AI-generated. The reasonable assumption is that the software found something definitive: digital evidence, a database match, a hidden watermark proving machine authorship.

It found none of those things. AI detectors do not have access to hidden metadata, authorship logs, or databases of AI-generated essays. They read the text and ask a single question: how statistically predictable is this writing?

That is a useful question in some contexts. It is a terrible question for determining whether a specific student wrote a specific essay. Predictable writing is not AI writing. Formal academic writing is predictable by design.

What Perplexity Actually Means

Perplexity is a measure of how surprising the word choices in a text are. A low perplexity score means the vocabulary is standard, expected, and likely to appear in that sequence based on the model's training data. A high perplexity score means the word choices are unusual, unexpected, or unconventional.
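
To make the definition concrete, here is a minimal sketch of the textbook perplexity formula: the exponential of the average negative log-probability a language model assigned to each word that actually appeared. Commercial detectors do not publish their exact scoring, so this is an illustration of the concept, not any vendor's implementation. The function name `perplexity` and the probability lists are hypothetical values invented for the example.

```python
import math


def perplexity(token_probs):
    """Textbook perplexity: exp of the mean negative log-probability.

    token_probs holds the probability a language model assigned to each
    token that actually appeared in the text (values in (0, 1]).
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


# Hypothetical per-token probabilities, invented for illustration only.
predictable = [0.5, 0.5, 0.5, 0.5]    # every word was an easy guess
surprising = [0.5, 0.05, 0.5, 0.05]   # some words were unexpected

print(perplexity(predictable))  # lower value: more predictable text
print(perplexity(surprising))   # higher value: more surprising text
```

The only point of the sketch is the direction of the number: the more easily a model could have guessed each word, the lower the score.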

AI text generators are designed to produce low-perplexity text because low-perplexity text reads as coherent and natural. At each step the model tends to pick a statistically probable next word. The result is smooth, standard, predictable prose.

Students who carefully follow academic writing rubrics also produce low-perplexity text. Rubrics that reward topic sentences, standard transitions, formal vocabulary, and clear thesis statements are essentially instructions to write in a way that minimises perplexity. The algorithm reads their compliant, rubric-following work and sees the same statistical signature it sees in AI output.

This is also why the U.S. Constitution fails AI detection tests. Its formalized, highly predictable language has been absorbed so deeply into LLM training data that detectors read it as machine-generated. If that document fails the test, a student who carefully followed a teacher's formatting rubric is also going to fail the test.

What Burstiness Actually Means

Burstiness measures sentence length variation. Human writing tends to swing widely between short punchy sentences and longer explanatory ones. The rhythm is irregular.

AI text tends toward uniformity. Sentence lengths cluster around 15 to 20 words. The rhythm is smooth and consistent. Detectors use this signature to flag AI output.
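
One simple way to put a number on this is the coefficient of variation of sentence lengths: the standard deviation divided by the mean. The sketch below uses that as a stand-in. It is an assumption made for illustration, since detector vendors do not publish how they compute burstiness, and the naive sentence splitter and the function names `sentence_lengths` and `burstiness` are hypothetical.

```python
import re
import statistics


def sentence_lengths(text):
    """Word count of each sentence, using a naive split on . ! and ?"""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Coefficient of variation of sentence length (assumed proxy).

    0.0 means every sentence has the same length; larger values mean
    the rhythm swings more widely between short and long sentences.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The cat sat on the mat. The dog lay on the rug. The bird sat in the tree."
varied = "Stop. The dog, which had been sleeping on the rug all afternoon, finally woke up. Why?"

print(burstiness(uniform))  # identical sentence lengths, so no variation
print(burstiness(varied))   # one long sentence between two one-word ones
```

A student writing evenly paced formal sentences lands near the `uniform` end of this scale for the same reason an LLM does.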

The practical problem is that many academic writing styles also produce low burstiness. A student who writes carefully structured, consistently formal sentences because they believe it will help their grade is producing the same burstiness signature as an LLM.

There is a second, darker problem. Students who want to bypass detection tools are now deliberately using "AI humanizer" software that introduces awkward phrasing, grammatical errors, and sentence length variation into AI-generated text. The software deliberately makes the writing worse so that it looks more human. So schools end up in a situation where carefully polished human writing gets flagged and deliberately degraded AI writing passes. That inversion is not a bug someone can fix. It is a consequence of how the system works.

The Actual Accuracy Numbers

Vendor claims and independent testing don't agree, and the gap is not minor. Here is what the research shows.

| Tool / Finding | Claimed Accuracy | Independent Finding | Source |
|---|---|---|---|
| Turnitin AI detection | Under 1% false positive rate | 10-20% false positive rate on human text | Weber-Wulff et al., 2024 |
| Originality.ai (top commercial model) | Marketed as highest accuracy | 96% accuracy, but 8% false positive on human authors | Originality.ai Peer Review, 2026 |
| Copyleaks | High accuracy | 100% on one dataset; 0% on modified version of same dataset | JISC AI Detection Assessment, 2025 |
| OpenAI's own classifier | Not publicly claimed | 26% correct ID of AI text; 9% false positive on human text. Shut down. | UCLA Humanities Tech, 2024/2025 |
| All major detectors after manual editing | High accuracy in lab | Accuracy drops to 60-80% after student manually edits text | Thesify.ai, 2026 |

The Copyleaks result deserves specific attention. The same tool, on essentially the same text, produced completely opposite accuracy scores depending on minor modifications. That is not a tool with a small error rate. That is a tool that is fundamentally unreliable under real-world conditions where students revise, edit, and refine their writing before submission.
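
A separate point about reading the table above: "accuracy" and "false positive rate" are different metrics, which is how a tool can report 96% accuracy and still flag 8% of human authors. The sketch below shows the arithmetic with a hypothetical test set of 1,000 AI texts and 1,000 human texts. The counts are invented to illustrate how the two figures can coexist. They are not the data behind any study cited here, and the function name `rates` is likewise just for the example.

```python
def rates(tp, fp, tn, fn):
    """Return (accuracy, false_positive_rate) from confusion-matrix counts.

    tp: AI texts flagged as AI        fn: AI texts passed as human
    fp: human texts flagged as AI     tn: human texts passed as human
    """
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total
    false_positive_rate = fp / (fp + tn)
    return accuracy, false_positive_rate


# Hypothetical counts: all 1,000 AI texts caught, 80 of 1,000 human
# texts wrongly flagged. Invented for illustration only.
accuracy, fpr = rates(tp=1000, fp=80, tn=920, fn=0)
print(f"accuracy: {accuracy:.0%}, false positive rate: {fpr:.0%}")
```

Accuracy averages over every text in the test set, so a strong result on AI text can mask a meaningful error rate on the human text, and the human-text error rate is the one that produces wrongful accusations.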

The Base Rate Problem That Makes Everything Worse

Even if a detection tool were more accurate than current evidence suggests, there is a mathematical problem with using it in most classrooms that no engineering improvement can fix.

A paper published in the International Journal for Educational Integrity in 2026 walked through the math. If a classroom has 1,000 papers and only 10% of students are actually using AI, a flagged paper has only a 47.6% probability of genuinely being AI-generated, even with a technically accurate detection tool. That is worse than a coin flip.

This happens because of base rates. When most students in a class are honest, even a small false positive rate applied to the large honest group can produce as many flags as a high detection rate applied to the small group actually using AI. The prevalence of actual AI use in your specific classroom determines how meaningful any given detection score is. In a class where cheating is rare, detection scores are almost noise.
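
The base-rate arithmetic can be sketched in a few lines. The 1,000 papers and 10% prevalence come from the example above. The 90% detection rate and 11% false positive rate are assumptions: this post does not state which inputs the paper used, and these two values were chosen only because they reproduce a figure of roughly 47.6%. The function name is hypothetical.

```python
def flagged_is_ai_probability(n_papers, prevalence, sensitivity, false_positive_rate):
    """Probability that a flagged paper really is AI-generated.

    prevalence:          share of papers that are actually AI-generated
    sensitivity:         share of AI papers the tool flags (ASSUMED value)
    false_positive_rate: share of human papers the tool flags (ASSUMED value)
    """
    ai_papers = n_papers * prevalence
    human_papers = n_papers - ai_papers
    true_flags = ai_papers * sensitivity
    false_flags = human_papers * false_positive_rate
    return true_flags / (true_flags + false_flags)


# 1,000 papers, 10% AI use (from the text); 90% / 11% are assumptions.
p = flagged_is_ai_probability(1000, 0.10, 0.90, 0.11)
print(f"{p:.1%}")  # 47.6%

# Same hypothetical tool, but in a class where half the papers are AI.
p_high = flagged_is_ai_probability(1000, 0.50, 0.90, 0.11)
print(f"{p_high:.1%}")
```

With these inputs, 100 AI papers yield 90 correct flags while 900 human papers yield 99 false ones, so fewer than half of all flags point at real AI use. Nothing about the tool changes between the two calls. Only the prevalence does.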

This is also why the "30% AI score means 30% was written by AI" interpretation is wrong. It means 30% of sentences matched statistical patterns the tool associates with AI. The probability that those sentences were actually written by an AI depends entirely on how many students in the class are genuinely using AI. A score of 30% could represent true detection or a false positive depending on context the tool has no access to.

What Detection Tools Cannot Do

They cannot identify which specific AI tool was used. They cannot identify when the text was generated. They cannot distinguish between a student who wrote their own work and then used Grammarly for proofreading versus one who generated the entire essay with ChatGPT. They cannot account for ghostwriting, tutoring, or editing assistance that has existed long before AI.

They also cannot reliably clear a student who is innocent. A low detection score doesn't mean the work was human. It means the statistical patterns were not flagged. A student who generated AI text and then heavily edited it manually may produce a low score not because they wrote the work but because the editing introduced enough burstiness and vocabulary variation to fall below the detection threshold.

The errors run in both directions, and that is the problem. These tools can wrongly accuse honest students. They can wrongly clear dishonest ones. That makes them unreliable as a basis for any disciplinary decision, whether the outcome is an accusation or a clearance.

For how this specifically affects ESL students, see ESL Students and AI Detection Bias: The Algorithm Penalty, which covers why non-native writers are structurally more exposed to false positives.

How Administrators and Teachers Should Actually Read a Detection Report

Turnitin runs two completely separate analyses and presents them in the same report: a Similarity check and an AI Detection check. These measure different things. A high Similarity score means text was found in existing databases. A high AI score means statistical patterns resembled AI output. A paper can score high on one and zero on the other.

When a paper is flagged, the AI score is the beginning of an investigation, not its conclusion. The next step is manual review for indicators that detection software cannot assess: citations that don't exist when you look them up, a sudden shift in the student's historical writing voice, arguments that are generic and non-specific, or a thesis that doesn't reflect anything taught in the actual class.

Those human indicators are more reliable than a percentage score. A student who used AI extensively tends to produce floating, confident-sounding claims that don't connect to specific class content. The writing is often technically competent but strangely hollow. That's a more useful signal than a number from a probabilistic classifier.

Frequently Asked Questions

How accurate are AI detectors?

In controlled lab conditions, some detectors claim over 95% accuracy. In real classroom conditions, accuracy drops to 60-80% once students manually edit text. The best commercial model tested, Originality.ai, still produced an 8% false positive rate on authentic human writing.

What do AI detectors actually measure?

AI detectors measure two things: perplexity and burstiness. Perplexity measures how surprising the word choices are. Burstiness measures how much the sentence lengths vary. Low perplexity plus low burstiness is flagged as AI. Both are features of clear, formal, rubric-compliant human writing too.

What is perplexity?

Perplexity measures how surprising the word choices in a text are. A low perplexity score means the vocabulary is standard and predictable. AI generates low-perplexity text because it selects statistically likely words. Students who follow rubric instructions and use formal academic vocabulary also generate low-perplexity text.

Why do different detectors give different results on the same text?

Each detector uses a different training set and a different threshold for what counts as AI text. The JISC AI Detection Assessment in 2025 found that Copyleaks scored 100% accuracy on one dataset and 0% on a slightly modified version of the same dataset. The tools are not measuring the same thing in the same way.

Does a 30% AI score mean 30% of the paper was written by AI?

No. A 30% AI score means approximately 30% of the sentences in the paper matched statistical patterns the tool associates with AI output. It does not mean 30% of sentences were written by an AI. The number describes a statistical pattern match, not a confirmed percentage of machine-generated text.

Sources

- Weber-Wulff, D., et al. Largest Independent Evaluation of AI Detection. 2023/2024. Analysed by Structural Learning, 2026. structural-learning.com

- JISC. AI Detection and Assessment: An Update for 2025. National Centre for AI. June 2025. nationalcentreforai.jiscinvolve.org

- Originality.ai. AI Detection Studies Round Up. 2026. originality.ai

- International Journal for Educational Integrity / Taylor and Francis. Heads we win, tails you lose: AI detectors in education. 2026. tandfonline.com

- UCLA Humanities Technology. The Imperfection of AI Detection Tools. 2024/2025. humtech.ucla.edu

- Thesify.ai. How Professors Detect AI Writing: 2026 Guide. 2026. thesify.ai

- Zou, James, et al. GPT Detectors Are Biased Against Non-Native English Writers. Stanford University HAI. 2024. hai.stanford.edu

Defending Academic Integrity in 2026

Understanding what detection tools measure is only the start. The AI Literacy mini-course covers how to build assessment approaches that don't depend on unreliable software. Free. No email required.
