ESL Students and AI Detection Bias: The Algorithm Penalty

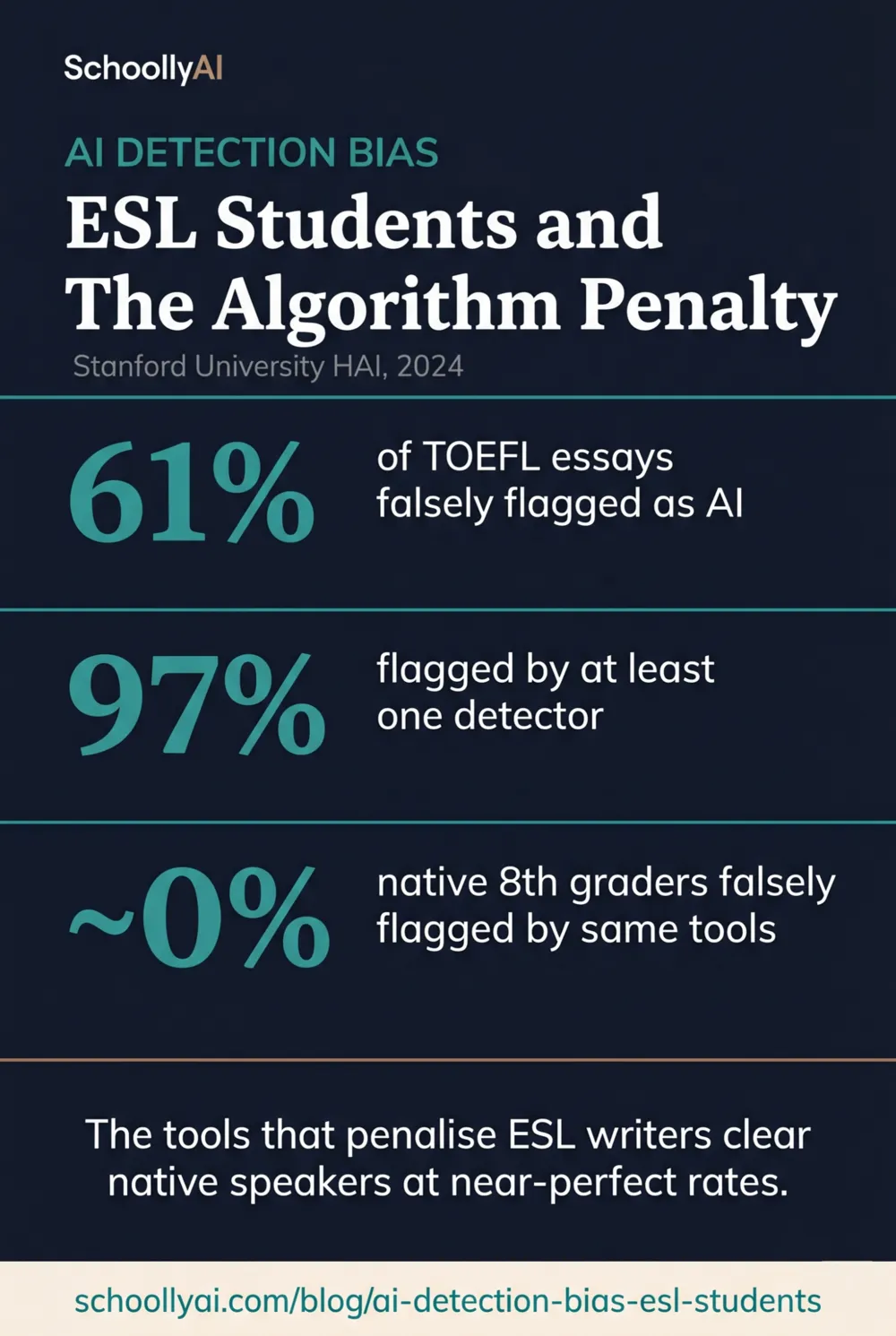

If your school runs AI detection on ESL student work, you are not running an integrity check. A Stanford University HAI study from 2024 found that AI detectors flagged, on average, over 61% of TOEFL essays written by non-native English speakers as AI-generated, while the same tools cleared native eighth-grade writers at near-perfect rates. The bias is not a product-specific quirk. It is built into how these tools work, and it lands hardest on students who are already navigating the greatest linguistic challenges.

- On average, the detectors in the Stanford University HAI study (2024) falsely flagged 61% of TOEFL essays by non-native English speakers as AI-generated.

- All seven AI detectors tested unanimously misidentified 18 of 91 ESL essays (19.8%), so the bias is not limited to one platform or one set of training data.

- 89 of 91 TOEFL essays (97.8%) were flagged by at least one detector in the same study. The false positive exposure for ESL students is near-total.

- The bias exists because ESL instruction and AI generation share the same goal: clear, standard, predictable language. Detection algorithms cannot tell them apart.

- Schools should disable AI detection for ESL and ELL students. Supervised in-class writing baselines are the only reliable alternative.

The algorithm that flags AI writing uses the same statistical signals that ESL instruction teaches students to produce. The two are indistinguishable to the tool.

The Built-In Trap: Why ESL Students Can't Win

Learning to write in a second language means learning to be predictable. You lean on standard vocabulary because it's safe. You construct sentences using patterns you've been taught work. You avoid unusual phrasing because unusual phrasing gets misunderstood. This is not a failure of creativity. It is exactly what good ESL instruction looks like.

Many AI detection tools rely on two statistical measures: perplexity and burstiness. Low perplexity means predictable word choice. Low burstiness means uniform sentence structure. Detectors treat both as indicators of AI generation.

ESL students produce low perplexity and low burstiness by design. The tool reads their diligent, rule-following, second-language work and sees the same statistical signature it sees in AI output. The student cannot avoid this by writing better. Writing better in the formal academic register they're being taught makes the problem worse. For the technical breakdown of how perplexity and burstiness work, see What AI Detectors Actually Measure (And Don't).
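To make the two measures concrete, here is a minimal Python sketch. It assumes per-token probabilities are already available (a real detector would obtain them from a language model; the values below are made up), computes perplexity as the exponential of the mean negative log-probability, and uses the coefficient of variation of sentence lengths as a simplified stand-in for burstiness. Tools define burstiness differently, some in terms of how perplexity varies from sentence to sentence, so the function names and the length-based proxy are illustrative assumptions, not any vendor's method.

```python
import math
import re
import statistics


def perplexity(token_probs: list[float]) -> float:
    """Perplexity = exp(mean negative log-probability of the observed tokens).

    `token_probs` stands in for the probability a language model assigned to
    each token actually written. Real detectors get these from a model; here
    they are supplied by hand (an assumption for illustration).
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    mean_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(mean_nll)


def burstiness(text: str) -> float:
    """Simplified proxy: coefficient of variation of sentence lengths (in words).

    0.0 means every sentence has exactly the same length.
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


# Hypothetical probabilities: every token fairly likely vs. frequent surprises.
print(round(perplexity([0.5, 0.6, 0.4, 0.5]), 2))   # 2.02 (predictable)
print(round(perplexity([0.1, 0.05, 0.2, 0.1]), 2))  # 10.0 (surprising)

uniform = "I like my school. I study every day. My teacher is kind."
varied = "I like school. Every morning, before the bus arrives, I review my notes for class. Then I go."
print(round(burstiness(uniform), 2))  # 0.0 (sentence lengths 4, 4, 4)
print(round(burstiness(varied), 2))   # 0.71 (sentence lengths 3, 12, 3)
```

Lower numbers on both measures are what detectors read as machine-like. Nothing in the calculation knows who wrote the text: a careful learner writing short, evenly built sentences in familiar words lands in the same low range.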

What Stanford Found

The research on this issue is among the most robust in the AI detection literature. This is not a single study with edge-case results. Multiple independent teams have replicated the finding across different tools and student populations.

The landmark study came from James Zou and colleagues at Stanford University's Institute for Human-Centered AI in 2024. They tested seven major AI detection platforms against TOEFL essays written by non-native English speakers and essays written by native U.S.-born eighth graders.

| Finding | Data Point |
|---|---|
| TOEFL essays flagged as AI by at least one detector | 97.8% (89 of 91 essays) |
| ESL essays unanimously misidentified by all seven detectors | 19.8% (18 of 91 essays) |
| Native eighth-grade essays wrongly flagged | Near zero (described as "near-perfect" clearance) |
| Average false-positive rate on TOEFL essays across the seven detectors | 61.22% |

The unanimity finding is particularly significant. When all seven tools agree, the false positive cannot be attributed to one platform's methodology. The bias is not in any single product. It is in the statistical approach the tools share.
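The three TOEFL figures above measure different things, and the gap between them is easier to see in code. The sketch below assumes a hypothetical grid of verdicts (one row per human-written essay, one column per detector; these are not the study's per-essay data) and computes the share flagged by at least one detector, the share flagged by all of them, and the average false-positive rate per detector. It then reproduces the table's percentages from the reported counts.

```python
from statistics import mean


def summarize(flags: list[list[bool]]) -> tuple[float, float, float]:
    """Summarize detector verdicts on essays that are all human-written.

    flags[i][j] is True if detector j labelled essay i as AI-generated, so
    every True is a false positive. Returns (share flagged by at least one
    detector, share flagged by every detector, average per-detector
    false-positive rate).
    """
    n_essays = len(flags)
    n_detectors = len(flags[0])
    any_rate = sum(any(row) for row in flags) / n_essays
    unanimous_rate = sum(all(row) for row in flags) / n_essays
    per_detector = [sum(row[j] for row in flags) / n_essays for j in range(n_detectors)]
    return any_rate, unanimous_rate, mean(per_detector)


# Hypothetical verdicts: 4 essays x 3 detectors (illustrative, not study data).
example = [
    [True, True, True],
    [True, False, False],
    [False, False, False],
    [True, True, False],
]
print(summarize(example))  # (0.75, 0.25, 0.5)

# The table's percentages follow directly from the reported counts.
print(f"{89 / 91:.1%}")  # 97.8%
print(f"{18 / 91:.1%}")  # 19.8%
```

The "at least one detector" rate can only rise as more detectors are added, which is one reason it is so high for the TOEFL set. The unanimous rate is the stricter test, and it is the one that rules out a single platform's quirks.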

Research from D-Lab at UC Berkeley confirmed the pattern from a different angle. Ramírez-Castañeda and colleagues found in 2024 and 2025 that the same tools calling ESL students cheaters were performing competently on native-speaker writing. The problem is not detection quality overall. The problem is unequal accuracy across student populations.
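The difference between overall quality and unequal accuracy can be illustrated by splitting one detector's false-positive rate by population. The verdicts below are hypothetical and the group labels are placeholders; the sketch only shows that a pooled figure can look moderate while one group absorbs most of the errors.

```python
def false_positive_rate(flags: list[bool]) -> float:
    """Share of human-written essays a detector labelled as AI-generated."""
    return sum(flags) / len(flags)


# Hypothetical verdicts from one detector on human-written essays (not study data).
results = {
    "non_native": [True, True, False, True, False],
    "native": [False, False, False, False, True],
}

# A single pooled number hides how the errors are distributed.
pooled = [flag for flags in results.values() for flag in flags]
print(f"pooled FPR: {false_positive_rate(pooled):.0%}")  # pooled FPR: 40%

# Disaggregating by group shows who carries the false positives.
for group, flags in results.items():
    print(f"{group}: {false_positive_rate(flags):.0%}")
# non_native: 60%
# native: 20%
```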

Why ESL Writing Gets Flagged: The Technical Reason

Non-native speakers score lower on measures of lexical richness, syntactic complexity, and grammatical diversity. These are not failures of intelligence or effort. They reflect where a student is in their language acquisition process.

AI text generators also score low on lexical richness and syntactic complexity. They are optimised to produce text that is clear, coherent, and unsurprising. The result is text that sits in the same statistical space as the writing of a careful, rule-following language learner.
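Lexical richness can be measured in several ways. One of the simplest is the type-token ratio: distinct words divided by total words. The sketch below is an illustration under that assumption, not the measure used in the studies cited here; the ratio also tends to fall as texts get longer, so research typically relies on length-adjusted variants.

```python
import re


def type_token_ratio(text: str) -> float:
    """Distinct words divided by total words (a crude lexical-richness measure)."""
    words = re.findall(r"[a-z']+", text.lower())
    return len(set(words)) / len(words) if words else 0.0


# Hypothetical sentences: a repeated "safe" frame vs. varied vocabulary.
repetitive = "The test is important. The test is difficult. The test is long."
varied = "This examination matters; its difficulty and length unsettle many candidates."
print(type_token_ratio(repetitive))  # 0.5 (6 distinct words out of 12)
print(type_token_ratio(varied))      # 1.0 (10 distinct words out of 10)
```

Reusing a safe sentence frame, as language learners are often taught to do, pulls the ratio down in the same direction that predictable AI output does.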

Because this overlap follows from what both ESL instruction and AI generation aim for, researchers argue that better detection tools cannot engineer it away. A tool that measures predictability will always penalise students who have been taught to write predictably, and ESL students have been explicitly taught to do exactly that.

The Double Penalty: When Instructors Reinforce the Algorithm

The algorithmic bias is bad enough on its own. A 2025 qualitative synthesis in the MDPI journal Information found that human judgment compounds it: ESL instructors themselves often judge grammatically accurate, sophisticated writing from second-language learners to be AI-generated.

So an ESL student can produce genuinely good work, have it flagged by the detection tool, and then have that flag confirmed by a human reviewer who intuitively associates formal writing quality with AI output. The student faces an algorithmic accusation and a human accusation simultaneously, both based on the same faulty premise.

The Center for Innovative Teaching and Learning at Northern Illinois University warned in 2024 that algorithmic bias against marginalized student groups "could foster an atmosphere of distrust between faculty and students, discourage academic participation, and undermine the perception of fairness." It noted the same impact on neurodivergent students, whose non-standard linguistic patterns also produce detection flags.

What ESL Students Are Actually Doing to Survive This

University and high school forums from 2024 to 2026 show a consistent and disturbing pattern. ESL students have started deliberately adding grammatical errors, misused idioms, and awkward phrasing to their essays just to create enough "burstiness" to pass the school's AI detector.

Let that sink in. Students are making their writing intentionally worse to prove they wrote it themselves.

This destroys the core purpose of the writing assignment. A task meant to develop communication skills is now optimised for defeating a flawed algorithm. The student submits a degraded version of their best work. The teacher grades it. Both of them spend energy on a process that has nothing to do with learning.

The chilling effect extends beyond writing assignments. ESL students report participating less in class, deeply distrusting faculty, and feeling significant anxiety about disciplinary processes they cannot defend against without compromising their own work.

What Teachers and Schools Must Do

The position of the research community is clear. Multiple independent researchers and the Northern Illinois University CITL recommend disabling AI detection entirely for students in ESL or ELL programs. The false positive rates in this population are too high to justify any disciplinary use of detection scores.

If a school is not prepared to disable detection for ESL students, it should not use detection at all. Continuing to run a tool whose error rates differ systematically across student demographics, while trying to interpret its scores differently for different groups, is not a partial solution. It is a structured form of inequity.

The practical alternative for ESL contexts is supervised baseline writing. Conduct an in-class supervised writing task early in the course. Keep it. When out-of-class work is submitted, compare it to that baseline rather than running a detection algorithm. A student whose in-class and out-of-class writing share voice, vocabulary level, and structural approach is demonstrably consistent. A student whose supervised and submitted work show dramatic divergence is worth a conversation.

Build AI literacy programs that teach all students how to use AI tools transparently instead of pushing them to hide their use. A student who knows how to cite AI assistance, who understands what AI can and cannot do, and who sees transparent use as acceptable in defined contexts is much less likely to use AI deceptively. The broader framework is covered in AI Detectors Are Failing Honest Students.

James Zou from Stanford summarised the problem in 2024 with the kind of directness that school administrators need to hear: "These numbers pose serious questions about the objectivity of AI detectors and raise the potential that foreign-born students and workers might be unfairly accused of, or worse, penalized for cheating. The detectors are just too unreliable at this time, and the stakes are too high for the students, to put our faith in these technologies without rigorous evaluation and significant refinements."

That evaluation has happened. The data is in. The refinements have not materialized. The stakes for ESL students have not gone down.

Frequently Asked Questions

Are AI detectors biased against ESL students?

Yes. A Stanford University HAI study found that AI detectors flagged, on average, over 61% of TOEFL essays written by non-native English speakers as AI-generated. The same detectors cleared native English-speaking eighth graders at near-perfect rates. The bias is structural and consistent across all seven detection platforms tested.

Why do AI detectors flag ESL writing?

ESL learners are taught to use standard grammar, predictable vocabulary, and consistent sentence structure to ensure clarity. Detection tools measure perplexity and burstiness, and flag low-perplexity, low-burstiness text as AI-generated. Standard ESL writing produces exactly those signatures, not because it was written by AI, but because ESL instruction and AI generation both optimise for clear, predictable language.

Should schools disable AI detection for ESL students?

Yes. Multiple independent researchers and the Northern Illinois University Center for Innovative Teaching and Learning recommend disabling AI detection tools entirely for students in ESL or ELL programs. The false positive rates for this population are too high to justify any disciplinary application.

How can teachers verify ESL students' work without AI detection?

Establish a baseline by conducting supervised in-class writing assignments early in the course. This gives you a reference point for each student's authentic voice. When subsequent out-of-class assignments are submitted, compare them to the supervised baseline rather than running a detection algorithm.

Are other student groups affected by AI detection bias?

Yes. The Northern Illinois University CITL noted in 2024 that neurodivergent students are disproportionately flagged by AI detection tools because of algorithmic bias against diverse linguistic patterns. Students whose conditions affect writing style or produce non-standard phrasing face false positive exposure similar to that of ESL students.

Sources

- Liang, Weixin, Mert Yuksekgonul, Yining Mao, Eric Wu, and James Zou. GPT Detectors Are Biased Against Non-Native English Writers. Stanford University HAI. 2024. hai.stanford.edu

- Ramírez-Castañeda, V., et al. The Creation of Bad Students: AI Detection and Non-Native English Speakers. D-Lab Berkeley. 2024/2025. dlab.berkeley.edu

- Northern Illinois University CITL. AI Detectors: An Ethical Minefield. December 2024. citl.news.niu.edu

- MDPI Information Journal. Evaluating the Effectiveness and Ethical Implications of AI Detection Tools in Higher Education. 2025. mdpi.com

- arXiv. AI Detectors Fail Diverse Student Populations: A Mathematical Framing of Structural Detection Limits. 2026. arxiv.org
