Fast AI vs Slow AI: Why the Way You Study Matters More Than the Tool

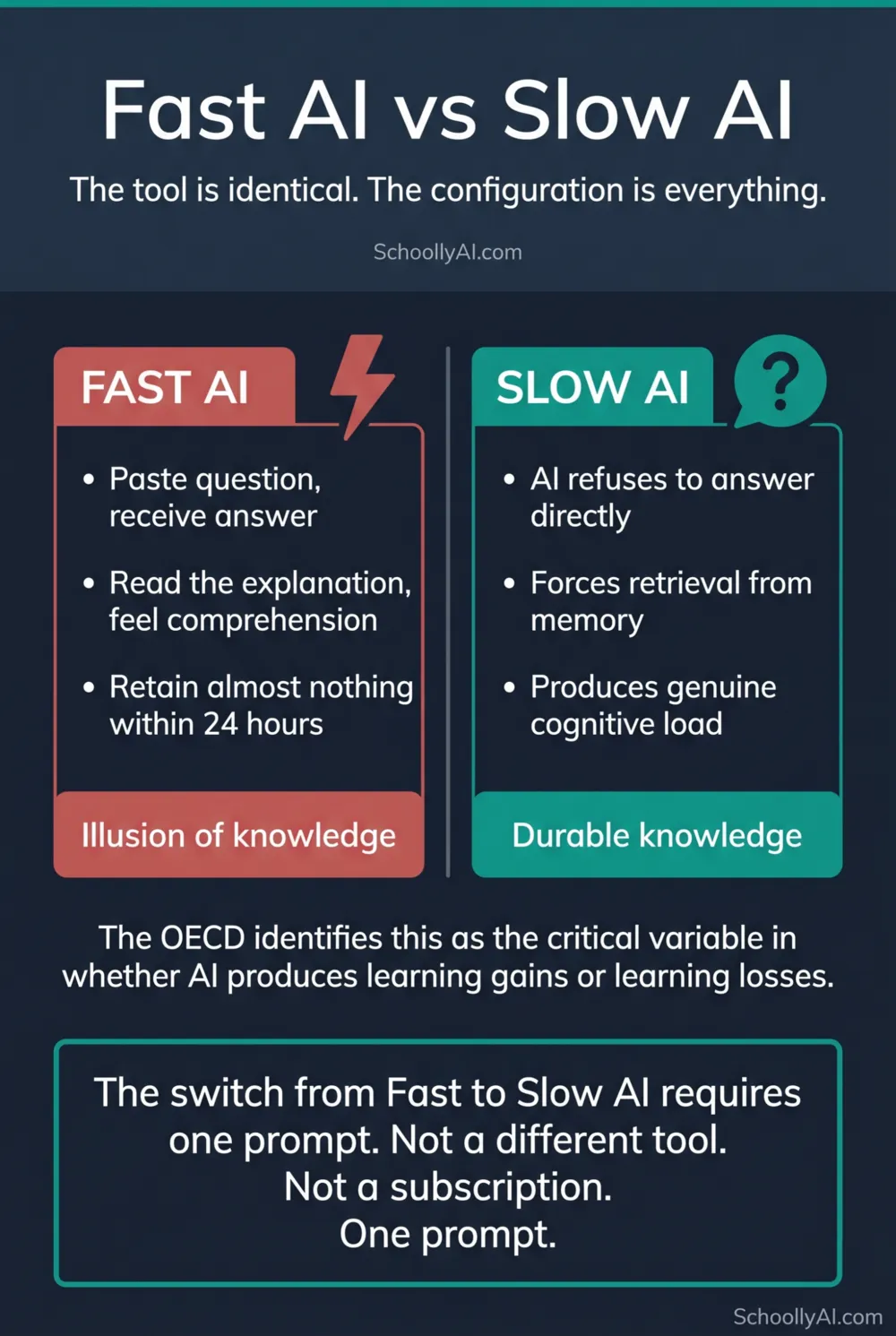

The OECD categorises AI study behaviour into two distinct patterns: Fast AI, where the technology completes the work for the student, and Slow AI, where the technology forces the student to do the work themselves (Thesify / OECD, 2026). The tool is identical in both cases. The configuration is everything.

- Fast AI: paste question, receive answer, move on. Produces the illusion of learning. The student retains almost nothing 24 hours later.

- Slow AI: the same tool, configured to ask questions rather than give answers. Produces genuine cognitive load and durable knowledge.

- The illusion of knowledge is a well-documented cognitive trap. Reading a clear explanation feels like understanding it. It is not the same thing.

- The switch from Fast AI to Slow AI requires one prompt. It does not require a different tool, a subscription, or extra time.

The Distinction

Fast AI describes the default way most students use AI tools. A question gets pasted into the chat. The model produces a well-written, complete answer. The student reads it, feels a sense of resolution, and moves on to the next topic. The session takes three minutes and produces a transcript full of polished explanations the student did not generate themselves.

Slow AI describes the same tools used differently. The model is constrained by a prompt that prohibits direct answers. It responds to every question with a question. The student is forced to retrieve information from memory, construct hypotheses, and defend their reasoning step by step. The session takes thirty minutes and produces genuine understanding of the material.

The OECD analysis of generative AI in student learning identifies this distinction as the critical variable in determining whether AI use produces learning gains or learning losses (Thesify / OECD, 2026). The tool is not the variable. The configuration is.

Why Fast AI Feels Like Learning

The reason Fast AI is so persistent even when it is ineffective is that it feels like learning while it is happening. Reading a clear, well-structured AI explanation of a complex topic produces a sense of comprehension. The information feels familiar. The explanation makes sense. The student closes the tab feeling like they understand the material.

Cognitive scientists call this the fluency illusion, or the illusion of knowledge. When information is presented clearly and legibly, the brain interprets the ease of processing as a sign of understanding. It is not. The ease reflects the quality of the explanation, not the depth of the student's encoding.

The test is simple: close the explanation and try to reproduce the core argument from memory thirty seconds later. For most passive AI reading sessions, most students cannot. The feeling of comprehension was real. The comprehension itself was not.

One student on r/studytips captured this precisely: "Stop treating AI as a storage unit. Treat it as a consultant." (r/studytips, 2026) A storage unit receives information passively. A consultant interrogates you until you figure out the answer yourself. Only one of those produces a change in what you know.

The Neuroscience

What makes Slow AI educationally effective is not a pedagogical preference. It is a function of how the brain builds durable knowledge.

Durable learning requires what researchers call "desirable difficulty." When a student struggles to retrieve information from memory, construct an argument, or work through the steps of a problem, the brain expends genuine cognitive effort. That effort is what creates the neural pathways that constitute lasting knowledge. The struggle is not a side effect of learning. It is the mechanism.

Passive reading bypasses this mechanism. The information enters working memory, produces a brief sense of familiarity, and exits without being encoded into long-term memory. Cornell University research tracking long-term cognitive effects found that students who passively over-relied on AI assistance exhibited lower levels of brain engagement and underperformed compared to cohorts who engaged in active recall without technological shortcuts (Cornell University, 2026).

Slow AI forces the active recall that passive reading skips. When a Socratic AI tutor asks "Which kinematic equation applies here and why?" the student cannot answer by re-reading the explanation. They have to retrieve the equation from memory and construct a justification. That retrieval attempt is what builds the neural pathway. Whether the first attempt succeeds or fails is less important than the fact that it was attempted.

As one computer science student described the experience: "The frustration helps you to learn. It helps you remember. And the feeling you get when you do solve it, burns it even more deeply into your brain." (r/learnprogramming, 2025)

How to Force the Switch

The switch from Fast AI to Slow AI is entirely a configuration decision. The tool does not change. The prompt does.

Before opening a study session with any AI tool, paste a Socratic control prompt that explicitly prohibits direct answers and commands the model to ask guiding questions. The prompt is the behavioral override that converts the tool from an answer engine to a tutor. Without it, the model defaults to Fast AI regardless of how it is used.

A minimal version that works across subjects:

"You are my Socratic tutor for [subject]. NEVER give me the direct answer to any question. Instead, ask me a question that guides me toward finding the answer myself. Keep responses under 80 words. Ask one question at a time. Begin by asking me what I am most confused about."

That is twelve seconds to paste. The rest of the session runs on productive struggle rather than passive consumption.
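For readers who reuse the prompt across several subjects, the template above can be filled in programmatically. The following is a minimal sketch, not part of the original method: the function names `build_socratic_prompt` and `follows_constraints` are hypothetical, and the role/content message layout is an assumption about how a chat tool might accept a system prompt. The reply check is only a rough heuristic for the two measurable rules in the prompt (the word limit and one question at a time).

```python
def build_socratic_prompt(subject: str, max_words: int = 80) -> str:
    """Fill the article's Socratic template with a subject and a word limit."""
    subject = subject.strip()
    if not subject:
        raise ValueError("subject must not be empty")
    return (
        f"You are my Socratic tutor for {subject}. "
        "NEVER give me the direct answer to any question. "
        "Instead, ask me a question that guides me toward finding the answer myself. "
        f"Keep responses under {max_words} words. "
        "Ask one question at a time. "
        "Begin by asking me what I am most confused about."
    )


def follows_constraints(reply: str, max_words: int = 80) -> bool:
    """Rough heuristic: reply is under the word limit and has exactly one question mark."""
    return len(reply.split()) < max_words and reply.count("?") == 1


prompt = build_socratic_prompt("kinematics")

# Assumed layout: many chat tools accept a list of role/content messages.
# Adapt this to whatever your tool actually expects.
messages = [{"role": "system", "content": prompt}]
```

If a reply fails the check partway through a session, pasting the prompt again is a simple way to restore the constraint.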

For subject-specific templates and a full breakdown of the four structural pillars that make the prompt work, see The Socratic AI Tutor Prompt: How to Build One That Works. For the complete five-step session framework that surrounds this prompt with context setup and flashcard export, see How to Set Up a Personal AI Tutor in 20 Minutes.

FAQ

What is the difference between Fast AI and Slow AI?

Fast AI is using an AI tool as an answer engine: the student pastes a question, receives a complete answer, and moves on. Passive reading produces the illusion of comprehension but little durable retention. Slow AI is using the same tool with behavioral constraints that force the student to reason through the material themselves, producing genuine cognitive load and lasting knowledge.

Why does passive reading feel like learning?

Passive reading triggers a sense of familiarity and recognition that the brain interprets as comprehension. This is called the fluency illusion. The student feels like they understand the material because the explanation was clear, but that feeling does not reflect genuine encoding. Testing yourself immediately after reveals the gap.

What is productive struggle?

Productive struggle is the period of effortful, sometimes frustrating cognitive work that precedes genuine understanding. When a student cannot immediately answer a question and has to retrieve information from memory and construct a hypothesis, they are building neural pathways that passive reading does not produce. The frustration is not a sign of failure. It is the mechanism of learning.

How do I switch from Fast AI to Slow AI?

Apply a Socratic control prompt before starting any study session. This prompt explicitly prohibits the AI from providing direct answers and commands it to ask guiding questions instead. The switch from Fast AI to Slow AI is entirely a function of how the tool is configured, not which tool is used.

Sources

- Thesify / OECD. Impact of Generative AI on Student Learning. 2026. thesify.ai

- Cornell University. AI and Academic Integrity. 2026. cornell.edu

- r/studytips. The Study Tips for 2026. 2026. reddit.com

- r/learnprogramming. CS Student Here: AI Tutor Experience. 2025. reddit.com