Why Most Teacher AI Training Misses the Point

By fall 2025, about 50% of teachers had received at least one AI professional development session (Education Week, November 2025). Meanwhile, 53% of teachers still lack confidence using AI in the classroom (Michigan Virtual, 2025). These two facts together tell you something about what most of that training contained. Tool demos do not build confidence. Understanding does. And most teacher AI training is still almost entirely about tools.

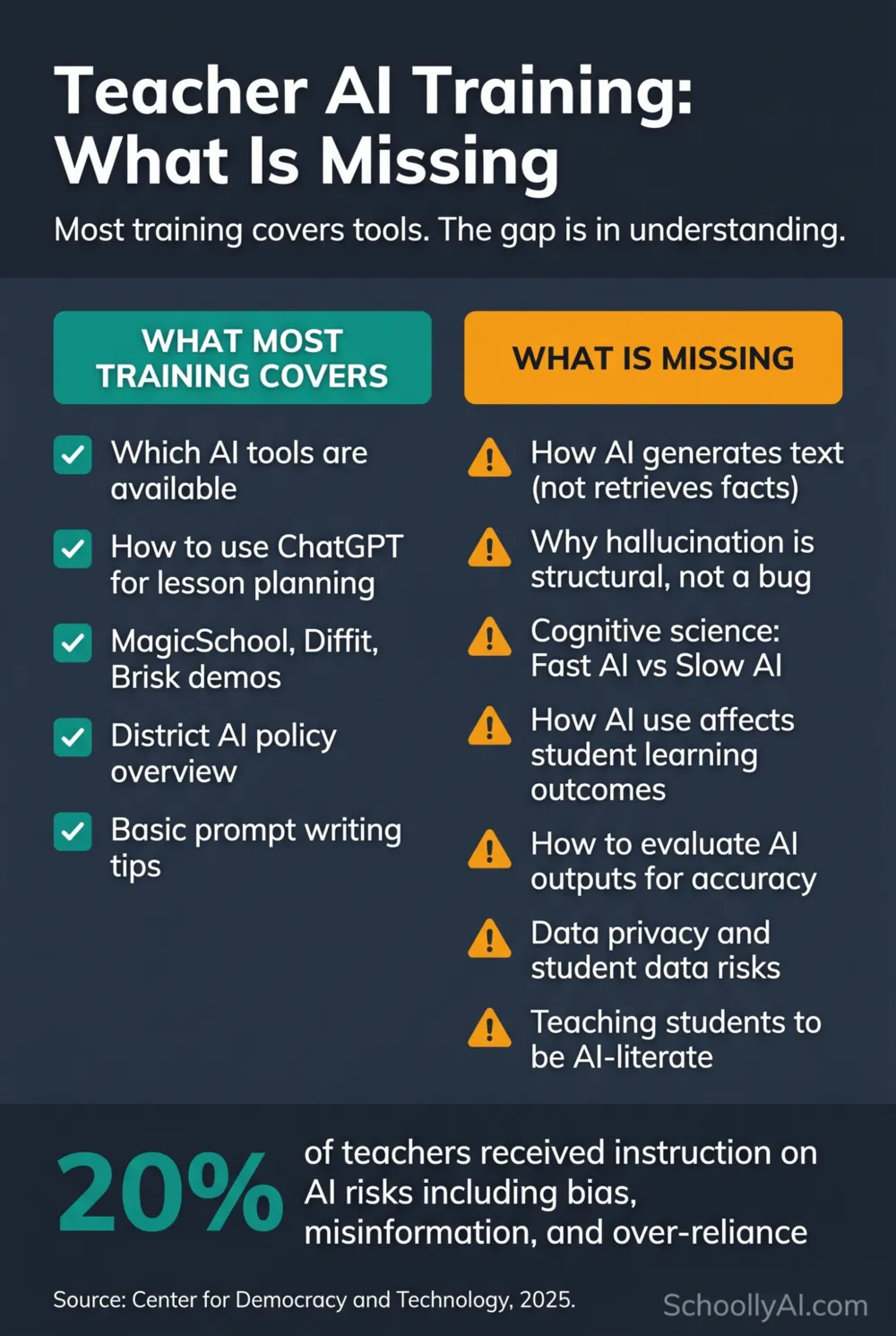

What Most Teacher AI Training Actually Covers

The typical teacher AI professional development session in 2025 looked like this: a 60 to 90 minute session covering two or three tools, usually MagicSchool, ChatGPT, and one differentiation platform. A demo of what the tools can do. A brief mention of academic integrity. Possibly a policy overview. Time to try the tools with a simple task.

Pat Yongpradit, Chief Academic Officer at Code.org, described the state of training with some precision: "We're still at the beginning of all this stuff. A third of teachers have only gone to one one-time session, and that one-time session could've been really basic." (Education Week, November 2025)

This is tool adoption training, not AI literacy training. The distinction matters because the skills required to use a tool and the skills required to evaluate a tool's outputs critically are not the same thing. A teacher who has attended three tool demos can navigate a MagicSchool interface. That same teacher may have no framework at all for deciding whether the lesson plan MagicSchool generated is actually well-designed, or whether the AI-adapted reading passage contains subtle errors that would mislead students.

What Is Missing From Most Teacher AI Training

Three categories of knowledge are systematically absent from most teacher AI training, and each of them is more consequential than knowing which tools to use.

How AI actually generates outputs. Only 20% of teachers received instruction on AI risks including bias, misinformation, and over-reliance, according to the Center for Democracy and Technology (CDT, 2025). Even fewer received instruction on hallucination specifically, which is the most practically important AI limitation for classroom use. A teacher who does not understand that AI generates statistically probable text rather than retrieving verified facts has no principled basis for evaluating what the AI produces. They are working on faith, not knowledge.
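To make "statistically probable text" concrete, here is a minimal toy sketch in Python. It is not how any real product is built: the word table and its weights are invented for illustration, and real models learn vastly larger patterns from training data. It shows only the core idea that the next word is picked by likelihood, with no step that checks whether the resulting sentence is true.

```python
import random

# Hypothetical toy "model": for each word, the words that may follow it and
# how likely each one is. All weights here are invented for illustration.
NEXT_WORD = {
    "<start>": [("The", 1.0)],
    "The": [("capital", 1.0)],
    "capital": [("of", 1.0)],
    "of": [("Australia", 1.0)],
    "Australia": [("is", 1.0)],
    # Assumption: the famous city is weighted higher than the correct answer.
    "is": [("Sydney", 0.6), ("Canberra", 0.4)],
}


def generate(rng, max_words=10):
    """Build a sentence by repeatedly sampling a likely next word."""
    word = "<start>"
    sentence = []
    for _ in range(max_words):
        options = NEXT_WORD.get(word)
        if not options:
            break  # no known continuation, so the sentence ends here
        words, weights = zip(*options)
        # Sample by probability; nothing here looks up or verifies a fact.
        word = rng.choices(words, weights=weights, k=1)[0]
        sentence.append(word)
    return " ".join(sentence)


if __name__ == "__main__":
    rng = random.Random()
    for _ in range(5):
        print(generate(rng))
```

Run it a few times and the output is always fluent, but it is sometimes wrong, because fluency and accuracy come from different places. That is the intuition a teacher needs when reading an AI-generated lesson plan or reading list.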

The cognitive science of AI and learning. This is the missing piece that most directly affects instructional decisions. How students use AI changes what they learn, not just how efficiently they work. Students who use AI to get quick answers learn less than students who use AI to think harder, as the Wharton chess study and the PNAS math study both demonstrated (Wharton School, October 2025). A teacher who does not know this has no basis for setting up AI use in assignments in ways that support rather than undermine learning. This is not an advanced topic for researchers. It is the most practically useful thing a classroom teacher can know about AI in 2026.

Data privacy and student data risks. Which tools are FERPA-compliant. What should never be pasted into a public AI interface. Which tools collect student data for model training and which do not. Most teachers working with AI tools right now have not received explicit guidance on any of these questions. They are making data privacy decisions by default rather than by knowledge, often in ways that may not be compliant with their own district's data policies.

The Confidence Gap This Produces

The result of tool training without foundational understanding is exactly the confidence gap the data shows. 53% of teachers lack confidence using AI in the classroom despite growing adoption. Teachers who attended tool demos know how to generate a lesson plan with AI. They do not know how to evaluate whether that lesson plan is good, spot a hallucinated citation in an AI-suggested reading list, or tell a student why using AI to answer their essay question will reduce rather than improve their understanding of the material.

That is not a tools problem. It is a knowledge problem. And tool demos do not solve knowledge problems.

Michigan Virtual's October 2025 report found that teacher comfort with AI grew from 2.9 out of 10 to 5.6 out of 10 in a single year (Michigan Virtual, 2025). That is meaningful growth, but a score of 5.6 out of 10 still means the average teacher feels only slightly more comfortable than not. Getting to genuine confidence requires the foundational understanding that most training does not provide.

Among teachers who use AI, 69% feel comfortable using it for lesson planning and grading, but only 53% feel comfortable teaching students to use AI responsibly (YSU, August 2025). The gap between those two numbers is the gap that foundational AI literacy training closes. Using a tool for yourself is one thing. Teaching a student to use it critically is another. The second requires understanding what the tool is actually doing.

What Effective AI Training for Teachers Looks Like

Darling-Hammond et al., in their influential 2017 Learning Policy Institute study on effective professional development, identified seven features that predict whether PD actually changes classroom practice: content focus, active learning, collaboration, modelling of effective practice, coaching and expert support, feedback and reflection, and sustained duration (Learning Policy Institute, 2017). Most one-time AI tool sessions hit one or two of these at best.
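One way to apply those seven features is as a plain checklist. The Python sketch below is illustrative only: the feature names come from the list above, but the `score_session` helper and the example session are hypothetical. Deciding which features a given session actually meets is a judgment call, not something the code determines.

```python
# The seven features of effective PD, as listed in the paragraph above.
FEATURES = [
    "content focus",
    "active learning",
    "collaboration",
    "modelling of effective practice",
    "coaching and expert support",
    "feedback and reflection",
    "sustained duration",
]


def score_session(present):
    """Split the seven features into those a session met and those it missed."""
    unknown = set(present) - set(FEATURES)
    if unknown:
        raise ValueError(f"Not one of the seven features: {sorted(unknown)}")
    met = [f for f in FEATURES if f in present]
    missing = [f for f in FEATURES if f not in present]
    return met, missing


if __name__ == "__main__":
    # Assumption: a one-time tool demo with hands-on time counts only as
    # "active learning". Your own reading of a session may differ.
    one_time_demo = {"active learning"}
    met, missing = score_session(one_time_demo)
    print(f"Met {len(met)} of {len(FEATURES)} features")
    for feature in missing:
        print("Missing:", feature)
```

Listing the missing features by name, rather than only reporting a score, makes it easier to see what a follow-up session would need to add.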

A 2025 meta-analysis of 102 online teacher professional development studies found medium effects on teacher-level outcomes, with the critical predictors of actual practice change being cognitive activation and collaboration, not content volume (ScienceDirect, 2025). Teachers do not change practice because they heard about a tool. They change practice when they understand a concept well enough to make decisions about it confidently.

The SchoolWorks Lab PD Blueprint from September 2025 identified the principle that most effective AI training follows: purpose before products. Identify the specific instructional problem. Understand the relevant AI concepts. Then find the tool that addresses the problem in a way consistent with what you know about how AI works and how students learn (SchoolWorks Lab, September 2025).

That sequence (understand, then use) is the opposite of what most current training provides. And it is the sequence that actually produces confident, effective classroom practice.

The free AI Literacy mini-course follows this sequence. Section 1 covers how AI actually works, before any tool recommendation. Section 2 covers the cognitive science of AI and learning. Section 3 covers practical tools and first steps. The order is deliberate. For the full context on where teachers are and what AI literacy actually requires, see the AI Literacy for Teachers guide.

Frequently Asked Questions

Is one tool training session better than nothing?

Yes, but the ceiling is low. A single tool demo gives a teacher access to a tool. It does not give them the knowledge to evaluate what the tool produces, the confidence to use it in ways that support rather than bypass student learning, or the framework to teach students to use it critically. A tool demo is a starting point. It is not AI literacy.

What is the single most important thing missing from most AI training?

The cognitive science of AI and student learning. Specifically: students who use AI to get quick answers learn less than students who use AI to think harder. This finding from the Wharton chess study and the PNAS math study changes every instructional decision a teacher makes about when and how to allow AI use in their classroom. Almost no tool training covers it.

How long does it take to build the foundational AI literacy that training is missing?

The three-section SchoollyAI AI Literacy mini-course covers the core foundation in about 90 minutes. Section 1 covers how AI generates text and why it hallucinates. Section 2 covers the cognitive science. Section 3 covers practical tools and first steps. That 90 minutes provides more foundational AI literacy than most one-time professional development sessions deliver.

Should I wait for my district to provide better training before using AI?

No. The gap between what districts are providing and what teachers need is wide enough that waiting produces its own costs. Your students are already using AI. Building your own foundational understanding independently, using free resources, is both possible and worth doing now.

Sources

- Education Week — Teacher AI Training Is Rising Fast, But Still Has a Long Way to Go (November 2025)

- Michigan Virtual — AI in Education: A 2025 Snapshot (2025)

- Center for Democracy and Technology — AI in K-12 Schools (2025)

- Wharton School — When Does AI Assistance Undermine Learning? (October 2025)

- YSU — Teachers and AI: A Study on Confidence Gaps (August 2025)

- Darling-Hammond et al. — Effective Teacher Professional Development (Learning Policy Institute, 2017)

- ScienceDirect — Effects of Online Teacher Professional Development: A Meta-Analysis (2025)

- SchoolWorks Lab — PD Blueprint for K-12 Teachers (September 2025)

- Gallup/Walton Family Foundation — The AI Dividend (2025)