Student-Safe AI Tools in 2026: What Teachers Need to Know

A 2026 analysis of nearly 1.2 million student AI interactions across 1,300 school districts found that roughly 1 in 5 involved cheating, cyberbullying, or other problematic behaviour (Curriculum Associates, 2026). The data flowing through AI tools in schools is not neutral. Here is what teachers need to know to stay on the right side of the law and keep students safe.

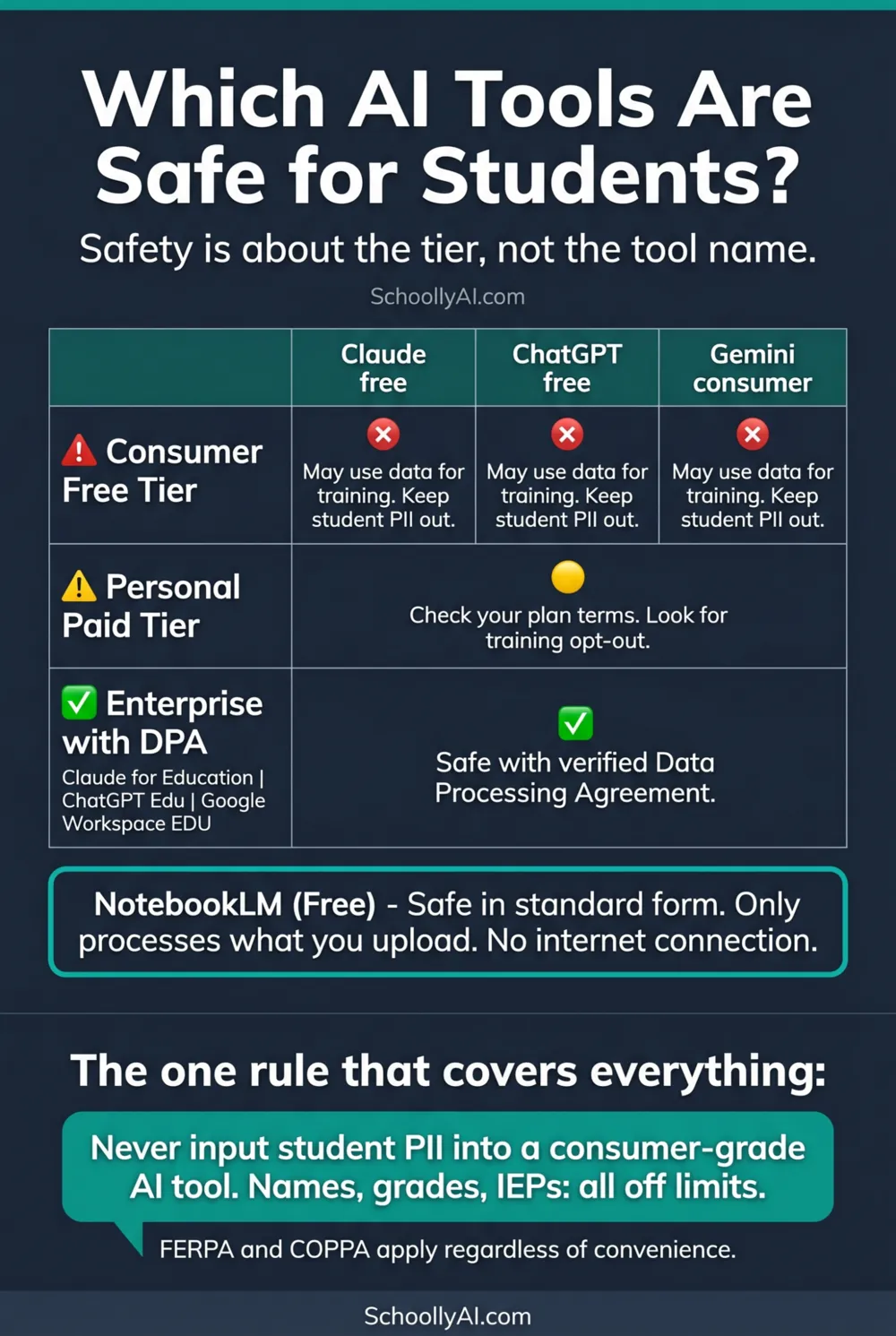

- Never input student personally identifiable information into a consumer-grade AI tool. Doing so can violate FERPA and COPPA, however convenient the workflow.

- Safety is not about the tool name. It is about the deployment tier. The same product can be safe in its enterprise form and unsafe in its free form.

- A Data Processing Agreement signed by your district is the minimum requirement before any student data touches an AI platform.

- NotebookLM is the one tool on this list that is safe in its standard free form, because it only processes what you upload and does not connect to the broader internet.

The Core Rule

There is one rule that covers almost every student privacy question in AI tool use. Never input student personally identifiable information into a consumer-grade AI tool.

That rule does not require a legal degree to follow. It requires knowing which tier of a tool you are using and what student information you are about to enter. If you are on a free tier and you are about to type a student's name, grade, diagnosis, or any other identifying detail, stop.

FERPA applies to any education record that identifies a student. COPPA applies to personal information collected online from children under 13. Neither law cares about the intent of the teacher or the convenience of the workflow. Pasting student essay drafts into ChatGPT's free tier is still a FERPA violation even if the teacher had no idea the data was being used for model training.

What PII Actually Means in Practice

Personally identifiable information in an educational context is broader than most teachers initially assume. It includes:

The obvious: Student names, student ID numbers, dates of birth, home addresses, email addresses, photographs.

The less obvious: Grades tied to a name, IEP details, discipline records, letters of recommendation that identify a student by name, any document that contains enough information to identify a specific student even without a name.

The invisible: Behavioural metadata. When students interact with an AI tool on a school device, the platform may capture interaction patterns, response times, query types, and other behavioural signals that build predictive profiles. As one researcher noted: "AI-driven platforms routinely gather the obvious data but they capture a layer of behavioural metadata that most people never notice. This invisible data helps build a predictive profile." (Chalkbeat, December 2024)

The practical response to the invisible data problem: use enterprise deployments on school-managed devices where possible, and treat any platform without a signed DPA as a consumer tool regardless of how it is marketed.
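For the "obvious" identifiers listed above, a rough pre-check can be automated before any text is pasted into a tool. The sketch below is a minimal illustration, not a compliance tool: the function name `redact`, the placeholder tags, and the 6-to-9-digit student ID format are all assumptions made for the example, and the class roster has to be supplied by the teacher. It will not catch indirect identifiers such as IEP details or a description specific enough to single out one student, so a manual read-through is still needed.

```python
import re

# Assumed patterns for illustration only; adjust to your school's formats.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
STUDENT_ID_RE = re.compile(r"\b\d{6,9}\b")  # hypothetical 6-9 digit ID


def redact(text: str, student_names: list[str]) -> str:
    """Replace emails, ID-like numbers, and known names with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = STUDENT_ID_RE.sub("[ID]", text)
    # Longest names first so "Maria Lopez" is replaced before "Maria".
    for name in sorted(student_names, key=len, reverse=True):
        if not name.strip():
            continue  # skip empty roster entries
        pattern = rf"\b{re.escape(name)}\b"
        text = re.sub(pattern, "[STUDENT]", text, flags=re.IGNORECASE)
    return text


sample = "Maria Lopez (ID 1234567, maria.lopez@example.org) scored 82."
print(redact(sample, ["Maria Lopez"]))
# prints: [STUDENT] (ID [ID], [EMAIL]) scored 82.
```

Note that the grade (82) is left in place: once the name, ID, and email are gone, the score is no longer tied to an identifiable student in this sample. In a real document, check whether the remaining details could still identify someone.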

Consumer Tiers vs Enterprise Tiers

The most important thing to understand about AI tool safety is that the same product can be safe in one form and unsafe in another. The tool name is not the variable. The deployment tier is.

| Tier | Data training use | Safe for student PII? | What to check |
|---|---|---|---|
| Consumer free tier | May use conversations for model training | No | Read the terms of service |
| Paid personal tier | Varies by provider and plan | Check your plan terms | Look for training opt-out options |
| Enterprise with DPA | Explicitly prohibited by contract | Yes, with verification | Confirm DPA is signed by your district |

The verification step is not optional. Ask your IT department whether a DPA exists before entering any student data into an AI tool, regardless of how the tool is marketed to educators.
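The table and the verification step can be read as a simple decision rule. The sketch below encodes it conservatively, as an illustration rather than legal guidance: the names `Tier` and `may_enter_student_pii` are invented for this example, and the paid personal tier is treated as "no" because, without a district-signed DPA, the article's rule is to treat a tool as consumer-grade.

```python
from enum import Enum


class Tier(Enum):
    CONSUMER_FREE = "consumer free tier"
    PAID_PERSONAL = "paid personal tier"
    ENTERPRISE = "enterprise"


def may_enter_student_pii(tier: Tier, dpa_signed_by_district: bool) -> bool:
    """Conservative reading of the table: only an enterprise deployment
    backed by a district-signed DPA is cleared for student PII."""
    return tier is Tier.ENTERPRISE and dpa_signed_by_district


# An enterprise product is still a "no" until IT confirms the DPA exists.
print(may_enter_student_pii(Tier.ENTERPRISE, dpa_signed_by_district=False))
# prints: False
```

The second argument is the point of the exercise: the answer depends on a fact only your IT department can confirm, not on the product name or how it is marketed.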

Tool-by-Tool Privacy Status

Claude (consumer tier): Standard Claude uses conversation data for model improvement unless the user opts out. Keep student PII out of it. Claude for Education, offered through institutional partnerships, includes enterprise-grade privacy controls and a DPA (Anthropic, 2024).

ChatGPT (free tier): The free tier uses conversation data for training. The ChatGPT Team and Edu tiers include data privacy agreements that prevent student data from being used to train future models. Do not use the free tier with student work that contains identifying information.

Gemini (consumer): Standard Gemini follows Google's consumer data policies. Google Workspace for Education, which most K-12 districts already have, includes FERPA and COPPA compliance for Gemini within that environment. Check with your IT department whether your school's Gemini access is through the Workspace for Education agreement.

Perplexity: Perplexity's free tier is a web search tool. Student data entered into it is subject to standard consumer data handling. The Education Pro tier includes data protections appropriate for verified students and faculty. For direct student use in research tasks, verify the tier before deploying.

NotebookLM: This is the safest tool on the list for standard classroom use. NotebookLM does not reach out to the internet. It only processes what you upload into a specific notebook. No external data collection occurs during synthesis. It is completely free. For teachers who need AI output grounded in specific materials without privacy risk, this is the starting point.

The Invisible Data Problem

Even when a tool meets FERPA requirements for named student data, the behavioural metadata question remains. When a student uses an AI tool to complete assignments, the platform records more than just the text of the query.

Interaction frequency, time spent on specific topics, types of questions asked, response patterns, whether the student accepted or rejected AI suggestions: all of this builds a behavioural profile. In enterprise deployments without careful data governance specifications, this profile may be retained, shared with third parties, or used in ways the original DPA does not explicitly address.

The Canadian government's AI and Culture Advisory Council, formed following the March 2026 National Summit on AI and Culture in Banff, is actively working on regulatory frameworks to address exactly this gap (Government of Canada, March 2026). Until those frameworks are in place, the practical guidance is to treat behavioural metadata, not just named PII, as a data privacy concern.

For the full context on the five tools and their classroom applications, see The 5 AI Tools I Use in My Classroom.

FAQ

Which AI tools are safe for student use?

Safety depends on the deployment tier, not the tool name. NotebookLM is safe for student use in its standard free form. Claude for Education, ChatGPT Edu, and Google Workspace for Education are safe when your institution has signed a Data Processing Agreement. Consumer-grade free tiers are not safe for student personally identifiable information.

Can I put student data into ChatGPT?

Not into the free consumer tier. OpenAI's free tier uses conversation data to train future models, and no Data Processing Agreement covers it. Putting student names, grades, IEP details, or other identifying information into the free tier can violate FERPA and COPPA. The ChatGPT Edu tier, available through institutional agreements, includes the necessary data protections.

What is a Data Processing Agreement?

A Data Processing Agreement is a contract between your school or district and an AI provider that specifies how student data will be handled. A properly written DPA explicitly prohibits the provider from using school-uploaded data to train future AI models. Without a DPA, assume the tool is consumer-grade and keep student PII out of it.

Is it a FERPA violation to use AI to grade student work?

It depends on the tool and the deployment. Using a consumer-grade AI tool to grade student work and entering student names, grades, or identifying details into that tool is a FERPA violation. Using an enterprise tier with a signed DPA, or anonymising student work before uploading it, protects you. When in doubt, remove all student identifiers before entering work into any AI tool.

Sources

- Curriculum Associates. AI and Student Data Privacy: Building Trust through Responsible Design. 2026. curriculumassociates.com

- Chalkbeat. AI Tools Used by Teachers Can Put Student Privacy and Data at Risk. December 2024. chalkbeat.org

- Anthropic. Introducing Claude for Education. 2024. anthropic.com

- Government of Canada. Leaders, Creators and Innovators at Canada's First National Summit on AI and Culture. March 2026. canada.ca