How to Teach AI Literacy to Your Students in 2026

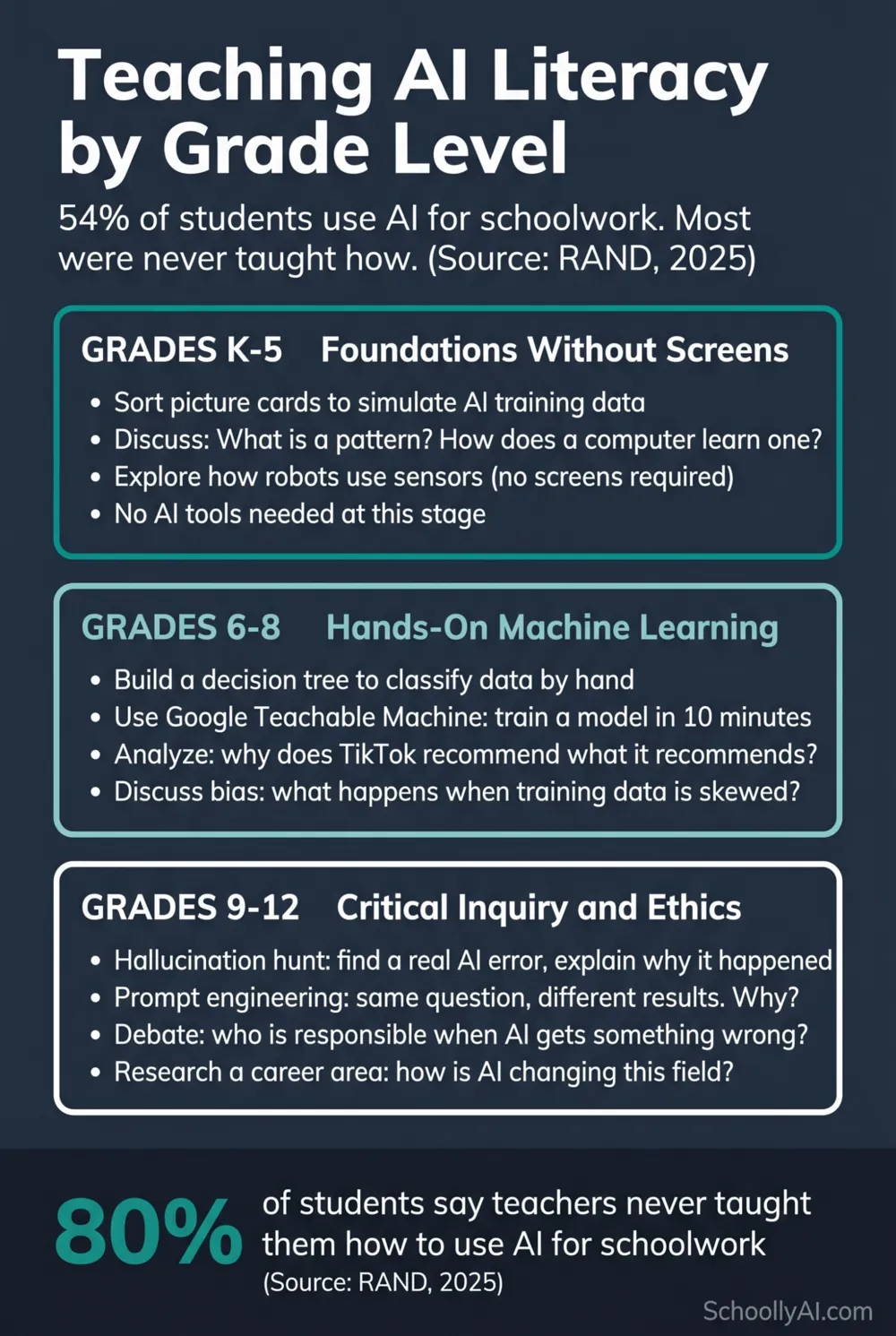

Over 80% of students say their teachers never explicitly taught them how to use AI for schoolwork (RAND, 2025). They are using it anyway. About 54% of K-12 students used AI for school in 2024 to 2025, and 26% of teens used ChatGPT for schoolwork specifically, double the prior year (Pew Research Center, January 2025). The question is not whether to teach AI literacy to students. It is what that actually looks like at each grade level and what works.

The Use vs Understanding Gap

Students arrive with a mental model of AI shaped almost entirely by science fiction and social media. Many believe AI has feelings, consciousness, or access to a live database of facts. A significant number think ChatGPT is always right, or always wrong, with very little understanding of why it is sometimes one and sometimes the other.

SRI International's April 2025 report on K-12 AI literacy found that students' classroom AI experiences vary substantially based on these prior beliefs, and that identifying misconceptions is a critical first step in instruction before any tool use begins (SRI International, April 2025).

The Digital Education Council's 2024 Global AI Student Survey found that 86% of university students use AI in their studies, but 58% feel they do not have sufficient AI knowledge or skills (Campus Technology, August 2024). Students are proficient users of tools they do not understand. That is a different problem from students not using tools at all, and it requires a different instructional response.

What Students Get Wrong About AI Most Often

Three misconceptions show up consistently across grade levels and contexts. Addressing them directly, before tool use, is what separates AI literacy instruction from AI tool training.

AI knows things. This is the most persistent and most consequential misconception. Students treat AI outputs as retrieved facts rather than generated text. This leads directly to submitting hallucinated citations, accepting incorrect information, and losing the critical evaluation habit that makes AI useful rather than dangerous. The correction is simple and needs to be repeated: a language model predicts what words should come next. Unless a tool explicitly adds web search, it is not looking anything up.
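For teachers who want to see "predicting the next word" in miniature, here is a rough sketch of a word-pair counter. It is far simpler than a real language model, and the training text and function names are invented for illustration, but it shows the same basic point: the output comes from patterns in the training text, not from a lookup of facts.

```python
from collections import Counter, defaultdict

# Tiny made-up training text (an assumption for illustration only).
TRAINING_TEXT = (
    "the cat sat on the mat . "
    "the cat ate the fish . "
    "the dog sat on the rug ."
)

def train_bigram_model(text):
    """Count, for every word, which words followed it in the training text."""
    followers = defaultdict(Counter)
    words = text.split()
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers

def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if the word was never seen."""
    if word not in model:
        return None
    return model[word].most_common(1)[0][0]

model = train_bigram_model(TRAINING_TEXT)
print(predict_next(model, "the"))    # most common word after "the" in the training text
print(predict_next(model, "sat"))    # most common word after "sat"
print(predict_next(model, "zebra"))  # never seen in training, so there is nothing to predict
```

The model answers "what usually comes after this word?" and nothing else. It has no notion of whether a cat is actually on a mat, which is a useful starting point for a class discussion about why fluent output can still be wrong.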

AI is either always right or always wrong. Students who have caught AI in an error often overcorrect to complete distrust. Students who have not caught an error often extend complete trust. Neither position is useful. AI is a probabilistic system that produces outputs of highly variable accuracy depending on the topic, the training data, and the nature of the question. The skill is calibration, not blanket trust or distrust.

Using AI for an answer is the same as using AI for help. This is the Fast AI versus Slow AI distinction from the research, and most students have never heard it framed this way. A student who asks AI to explain a concept and then asks follow-up questions is using AI very differently from a student who pastes a question and copies the answer. The learning outcomes are also very different. The Wharton chess study found students with structured AI guidance improved 64%, while those with unrestricted access improved only 30% (Wharton School, October 2025). Students find this research genuinely interesting when you share it with them. It is about their own learning outcomes, not rules.

AI Literacy Instruction by Grade Level

Elementary (K-5): Foundations without AI tools

SRI International's 2025 report was direct on this: "GenAI tools are not needed to teach AI literacy in elementary school. AI literacy can focus on foundational concepts rather than specific tools." The concepts that matter at this level are pattern recognition, how computers learn from examples, and what sensors are.

The AI4K12 initiative structures this through its Five Big Ideas, with K-2 students identifying patterns in labeled data by inspection and expressing rules informally. Sorting 50 picture cards into categories is a direct simulation of supervised learning. It requires no device and takes about 20 minutes. Teachers in Passaic, New Jersey are running lessons like this with grades K through 8, integrated into existing computer applications classes (Education Week, October 2025).
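For teachers curious about how the card sort maps onto supervised learning, the sketch below is one possible translation into code. The card features (legs and wheels), the labels, and the function names are all hypothetical choices for illustration, not part of the AI4K12 materials. The "model" simply copies the label of the most similar card it has already been shown.

```python
# Each card is described by (number_of_legs, number_of_wheels) and a label
# chosen by the person sorting. These example cards are made up.
LABELED_CARDS = [
    ((4, 0), "animal"),   # dog
    ((2, 0), "animal"),   # bird
    ((0, 4), "vehicle"),  # car
    ((0, 2), "vehicle"),  # bicycle
]

def distance(card_a, card_b):
    """Squared difference between two cards' features (smaller means more alike)."""
    return sum((a - b) ** 2 for a, b in zip(card_a, card_b))

def classify(card, labeled_cards):
    """Label a new card by copying the label of the most similar known card."""
    _, nearest_label = min(
        labeled_cards, key=lambda item: distance(card, item[0])
    )
    return nearest_label

print(classify((6, 0), LABELED_CARDS))  # an insect: six legs, no wheels
print(classify((0, 3), LABELED_CARDS))  # a tricycle: no legs, three wheels
```

A card with no legs and no wheels, such as a fish, sits equally close to the bird and the bicycle, so this sketch has no principled way to place it. That is the same ambiguity students run into at the table when a card does not fit their categories.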

Middle school (6-8): Hands-on machine learning

This is where students can start interacting with AI systems directly. Google's Teachable Machine lets students train an image classifier in a browser in about 10 minutes, with no code required. The value is not the tool itself but the experience of understanding that the model learned from the examples they gave it, and that biased or insufficient examples produce biased or limited outputs.
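The link between biased examples and biased outputs can be shown with a toy sketch. This is not how Teachable Machine works internally. It is a deliberately simple stand-in, with an invented "brightness" feature and made-up photos, in which every cat photo happens to be dark and every dog photo happens to be bright.

```python
from statistics import mean

# Hypothetical training photos: (average_brightness from 0 to 1, label).
# Every cat photo was taken indoors (dark); every dog photo outdoors (bright).
TRAINING_PHOTOS = [
    (0.20, "cat"), (0.25, "cat"), (0.30, "cat"),
    (0.80, "dog"), (0.85, "dog"), (0.90, "dog"),
]

def train(photos):
    """Learn one average brightness per label (a nearest-centroid model)."""
    by_label = {}
    for brightness, label in photos:
        by_label.setdefault(label, []).append(brightness)
    return {label: mean(values) for label, values in by_label.items()}

def predict(centroids, brightness):
    """Pick the label whose average brightness is closest to the new photo."""
    return min(centroids, key=lambda label: abs(centroids[label] - brightness))

centroids = train(TRAINING_PHOTOS)
print(predict(centroids, 0.22))  # a cat photographed indoors
print(predict(centroids, 0.88))  # a cat photographed outdoors in sunlight
```

The second photo is labelled "dog" because the only thing the model could learn from these examples was lighting. Students can reproduce the same effect in a browser tool by training on photos that all share a background, then testing with a different one.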

Teachers in Washington County, Maryland run eight lessons adapted from Common Sense Media's AI literacy curriculum, covering what AI is, how it is trained, what chatbots are, AI bias, and facial recognition, all through the lens of digital citizenship (Education Week, October 2025). None of these lessons requires students to use generative AI directly.

High school (9-12): Critical inquiry and ethics

Massachusetts launched a semester-long "Principles of Artificial Intelligence" course for grades 8 and up in September 2025, reaching approximately 1,600 students across 30 districts in its first year (Mass.gov, September 2025). The course covers LLMs, bias, ethics, and societal impact, with no prior computer science required.

At the high school level, the most effective AI literacy instruction moves beyond tool use into structured inquiry. Students build critical thinking skills that transfer across every AI encounter they will have when they research how AI is changing a specific career field, debate who bears responsibility when AI produces harmful outputs, or analyse why two different prompts to the same AI produce different results.

Five Activities That Build Real AI Understanding

These are not hypothetical. They are classroom-tested approaches from districts and teachers who have been running AI literacy instruction since 2024.

1. The card sort. Give students 50 picture cards (animals, vehicles, foods, whatever fits your subject). Ask them to sort into categories, then create rules for their categories. Then: what happens when the sorting rules are ambiguous? What happens when you add a card that does not fit any category? This is supervised learning, experienced physically, without any technology. Works K through 8.

2. The hallucination hunt. Give students a topic they know well. Have them ask an AI a question about it, then look for an error in the response and explain why it might have happened. The point is not gotcha. The point is understanding the mechanism. When students can explain why a hallucination occurred, they are starting to understand how AI actually works. Works grades 6 through 12.

3. The prompt experiment. Give students the same question to ask an AI in three different ways. Vague question. Specific question. Question with context provided. Compare the outputs. What changed and why? Students who understand that AI output depends heavily on input framing are less likely to treat any single AI response as definitive. Works grades 7 through 12.

4. Teach the AI, not the student. Ask a student to explain a concept to an AI as if the AI is confused about it. The AI asks follow-up questions. The student has to keep explaining. This is the Socratic prompt structure from the pillar post, applied as a student activity. The generation effect tells us students who construct their own explanations retain information 20 to 40% better than students who read explanations passively. Works grades 5 through 12.

5. The career AI audit. Students pick a career they are interested in. Research: how is AI being used in this field right now? What can it do? What can it not do? What decisions still require a human? This is the most directly motivating AI literacy activity for high school students because it connects to something they care about immediately. Works grades 9 through 12.

Having the AI Conversation with Students

Teachers who lead with policy (rules, consequences, what is permitted) consistently report worse outcomes than teachers who lead with the research.

The cognitive science of Fast AI versus Slow AI lands well with students when it is framed as being about their own learning outcomes, not about classroom rules. The Wharton chess finding is concrete: students with unrestricted access to quick answers improved roughly half as much as students who worked with structured guidance (30% versus 64%). Students find this interesting when framed as "here is what the research says about how you learn," not "here is why I am not letting you use AI."

Pati Ruiz, Director of Learning Technology Research at Digital Promise, put the pedagogical principle clearly: "Generative AI doesn't replace their need to do critical thinking and struggle with learning. Learning takes time, and GenAI is not going to speed that up." (EdTech Magazine, October 2025) That framing, delivered directly to students, tends to produce better conversations than any policy document.

For the teacher foundation that makes these conversations possible, the AI Literacy for Teachers guide and the free mini-course cover everything you need before bringing these topics into the classroom.

Frequently Asked Questions

Do I need a dedicated AI literacy course or can I integrate it into existing subjects?

Both approaches work, and the research supports integration over standalone courses for most schools. Science classes can cover machine learning concepts. English classes can address AI through source evaluation and essay integrity. Ethics and privacy can be threads across multiple subjects. Dedicated courses like the Massachusetts pilot work at the high school level where depth is needed and scheduling allows it.

What if students know more about AI tools than I do?

They probably know more about which tools are popular. That is different from understanding how any of those tools work. The misconceptions above are nearly universal regardless of how many tools a student has used. Start with the misconceptions and you will quickly be in territory where the teacher's analytical framework matters more than the student's tool familiarity.

How much class time does AI literacy instruction require?

The card sort and hallucination hunt each take about 20 minutes. The prompt experiment runs well in a single 45-minute period. The career AI audit can be a project spanning a week. None of these require a dedicated course or removing existing content. They work as warm-up activities, discussion starters, or integrated assignments within existing unit plans.

At what age should students start learning about AI?

The AI4K12 initiative structures content for every grade band from K through 12. Foundational concepts like pattern recognition and supervised learning are appropriate from kindergarten, without any technology required. Direct engagement with AI tools is appropriate from middle school, with clear framing of what the tools are and are not doing.

Check out the free AI Literacy mini-course, which gives teachers a better understanding of how to bring AI conversations into the classroom.

Sources

- RAND Corporation — AI Use in Schools Is Quickly Increasing but Guidance Lags Behind (2025)

- Pew Research Center — Teens and ChatGPT for Schoolwork (January 2025)

- SRI International — Promoting AI Literacy in K-12 (April 2025)

- Digital Education Council — Global AI Student Survey (August 2024)

- Wharton School — When Does AI Assistance Undermine Learning? (October 2025)

- Education Week — How These Schools Are Teaching AI Literacy (October 2025)

- Mass.gov — New AI Curriculum Pilot (September 2025)

- EdTech Magazine — AI Literacy for K-12 Students (October 2025)

- AI4K12 Initiative — Five Big Ideas in AI